Feed aggregator

WAIT Clause for DMLs in 26.2

Oracle 26ai 26.2 now introduces the WAIT (and NOWAIT) Clause for INSERT / UPDATE / DELETE / MERGE DMLs. We have had a WAIT Clause for SELECT FOR UPDATE statements but not for these "simple" DML statements.

This is my Video Demo : Wait Clause for DMLs in 26.2

Oracle Database 26ai Client and SQLNET.EXPIRE_TIME

We have been facing one issue at one of our customer where the Oracle Client connections remained opened for days blocking some avaloq JobNetz. We have been doing some tests and we could fortunately find a solution resolving the problem thanks to Oracle Database 26ai supporting now SQLNET.EXPIRE_TIME on the client side. Through this blog, I would like to share with you the problem and then the tests that have been performed helping us to conclude to a solution.

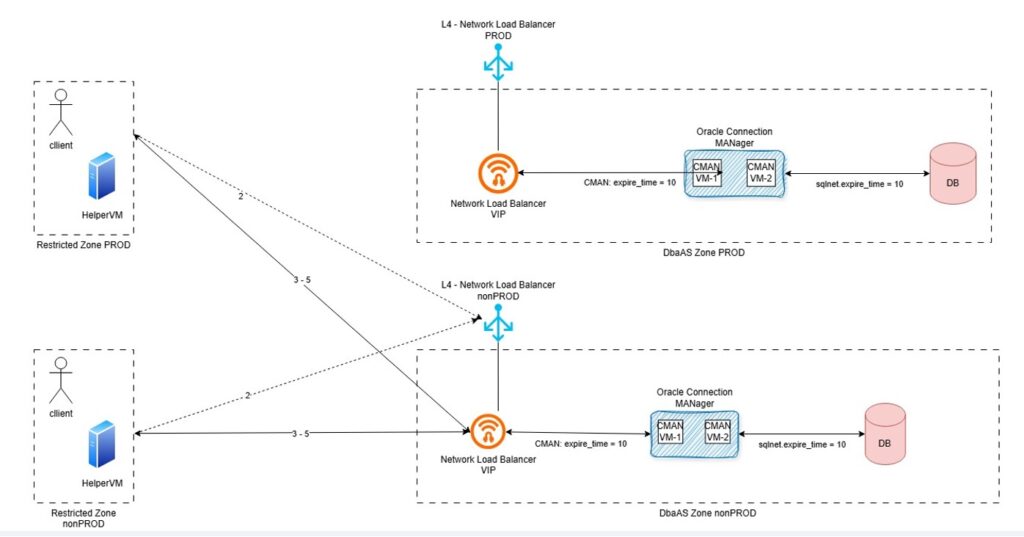

Environment and problem descriptionAt our customer environment, client connection run from the HelperVM does not establish database connections directly to the database listener. The connection goes through the Network Load Balancer, so called NLB, and the Oracle Connection Manager, so called CMAN.

The diagram below describes the database connection establishment process.

This is how it works.

- 1 – Client seeks for connection details (ideally, get the connection details from Oracle Directory Service)

- 2 – Client connects to Network Load Balancer

- 3 – Network Load Balancer “forwards” the request to Oracle Connection MANager using Virtual IP

- 4 – Oracle Connection MANager acts as a rule-based firewall and ensure the database target service is running on the “white listed targets (next_hop)

- 5 – Oracle Database establish connectivity upon credential validate (Oracle listener acts in between). The listener hands the connection over to the Oracle CMAN gateway process, which passes data back and forth between the client and the db-server and collects statistics.

We could observed per reverse engineering technique that the TCP connection established between the Oracle client and Oracle CMAN works upon Network Load Balancer Virtual IP.

The Network Load Balancer needs for Session persistence “statefullnes” to be enabled. This means that once the connection is established, the NLB “remembers” established connections and fails them over in case of planned downtime.

We have been facing some broken connectivity issue. Checking Linux socket connection with linux ss command (# ss -nop) we could see TCP connection hungs between client and NLB virtual IP (CNAME DNS entry). On CMAN and DB-Server side the connection were already cleaned up as per Dead Connection Detection configuration. We can see in the diagram that EXPIRE_TIME is setup with a value of 10 minutes on the CMAN side and the listener configuration from the VM Cluster database.

The connection was still opened on the client side because:

- The client was still waiting on a result which would never come

- TCP connection to the NLB Virtual IP was still existing albeit closed with the CMAN and database listener

- TCP connection to the NLB would remain alive for days

The problem is that by default Oracle client does not enable TCP Keepalive, which is an expected behavior. Oracle expects the keepalive to be set on the server side. The “Dead Connection Detection” will then be enforced for all clients.

TCP Keepalive should then be managed in our case on the client side. And we are currently running Oracle 19c Client.

EXPIRE_TIME handled on Oracle 19c ClientOracle 19c client does not come with SQLNET.EXPIRE_TIME aka “Oracle dead connection detection”, unless hacked over connection string hidden (unsupported) parameter ENABLE_BROKEN.

See following blog from a one of my former colleagues:

sqlnet-expire_time and enablebroken

And what about Oracle 26ai Client? Let’s do some test…

Installation of Oracle 26ai ClientOn the lab, I will use the VM called bastion to act as the client. The bastion has already an Oracle 19c Client installed. I’m going to installed new Oracle 26ai Client on it.

First we need to download the client version, which can be done from the following website:

https://www.oracle.com/database/technologies/oracle26ai-linux-downloads.html

I will install Oracle 26ai Client version in /opt/oracle.

[root@bastion oracle]# pwd /opt/oracle [root@bastion oracle]# ls -ld client* drwxr-xr-x. 52 oracle oinstall 4096 Jun 6 2024 client19c drwxr-xr-x. 47 oracle oinstall 4096 Nov 11 2025 client_21c [root@bastion oracle]#

I will first unzip the downloaded oracle zip file.

[oracle@bastion oracle]$ pwd /opt/oracle [oracle@bastion oracle]$ unzip -q LINUX.X64_2326100_client.zip

I will then rename the client installation directory:

[oracle@bastion oracle]$ ls -ld client* drwxr-xr-x. 5 oracle oinstall 90 Jan 17 13:59 client drwxr-xr-x. 52 oracle oinstall 4096 Jun 6 2024 client19c drwxr-xr-x. 47 oracle oinstall 4096 Nov 11 2025 client_21c [oracle@bastion oracle]$ mv client client26ai [oracle@bastion oracle]$ [oracle@bastion oracle]$ ls -ld client* drwxr-xr-x. 52 oracle oinstall 4096 Jun 6 2024 client19c drwxr-xr-x. 47 oracle oinstall 4096 Nov 11 2025 client_21c drwxr-xr-x. 5 oracle oinstall 90 Jan 17 13:59 client26ai [oracle@bastion oracle]$

I will prepare the response file for the command line installation.

[oracle@bastion client26ai]$ cp -p response/client_install.rsp response/client_install_custom.rsp [oracle@bastion client26ai]$ vi response/client_install_custom.rsp [oracle@bastion client26ai]$ diff response/client_install.rsp response/client_install_custom.rsp 22c22 UNIX_GROUP_NAME=oinstall 26c26 INVENTORY_LOCATION=/opt/oracle/oraInventory 30c30 ORACLE_HOME=/opt/oracle/client26ai 34c34 ORACLE_BASE=/opt/oracle 48c48 oracle.install.client.installType=Administrator [oracle@bastion client26ai]$

And I will run the Oracle 26ai Client installation.

[oracle@bastion client26ai]$ pwd /opt/oracle/client26ai [oracle@bastion client26ai]$ ls -ltrh total 24K -rwxrwx---. 1 oracle oinstall 500 Feb 6 2013 welcome.html -rwxr-xr-x. 1 oracle oinstall 8.7K Jan 17 12:38 runInstaller drwxr-xr-x. 4 oracle oinstall 4.0K Jan 17 12:38 install drwxr-xr-x. 15 oracle oinstall 4.0K Jan 17 13:37 stage drwxr-xr-x. 2 oracle oinstall 82 May 21 16:13 response [oracle@bastion client26ai]$ ./runInstaller -silent -responseFile /opt/oracle/client26ai/response/client_install_custom.rsp Starting Oracle Universal Installer... Checking Temp space: must be greater than 415 MB. Actual 5025 MB Passed Checking swap space: must be greater than 150 MB. Actual 4095 MB Passed Preparing to launch Oracle Universal Installer from /tmp/OraInstall2026-05-21_04-16-17PM. Please wait ... [WARNING] [INS-32016] The selected Oracle home contains directories or files. ACTION: To start with an empty Oracle home, either remove its contents or specify a different location. ********************************************* Package: compat-openssl10-1.0.2 (x86_64): This is a prerequisite condition to test whether the package "compat-openssl10-1.0.2 (x86_64)" is available on the system. Severity: IGNORABLE Overall status: VERIFICATION_FAILED Error message: PRVF-7532 : Package "compat-openssl10(x86_64)-1.0.2" is missing on node "bastion" Cause: A required package is either not installed or, if the package is a kernel module, is not loaded on the specified node. Action: Ensure that the required package is installed and available. ----------------------------------------------- [WARNING] [INS-13014] Target environment does not meet some optional requirements. CAUSE: Some of the optional prerequisites are not met. See logs for details. /opt/oraInventory/logs/installActions2026-05-21_04-16-17PM.log. ACTION: Identify the list of failed prerequisite checks from the log: /opt/oraInventory/logs/installActions2026-05-21_04-16-17PM.log. Then either from the log file or from installation manual find the appropriate configuration to meet the prerequisites and fix it manually. The response file for this session can be found at: /opt/oracle/client26ai/install/response/client_2026-05-21_04-16-17PM.rsp You can find the log of this install session at: /opt/oraInventory/logs/installActions2026-05-21_04-16-17PM.log The installation of Oracle Client 26ai was successful. Please check '/opt/oraInventory/logs/silentInstall2026-05-21_04-16-17PM.log' for more details. Successfully Setup Software with warning(s). [INS-10115] All configuration tools were previously ran successfully, no further configuration is required. [oracle@bastion client26ai]$

And the Oracle 26ai Client is now installed.

Prepare target databaseI will create a user on the target lab PDB, named TESTZ_TMR_003I, in order to establish sqlplus connection and test the EXPIRE_TIME configuration.

I will create a user test01 and grant the connect permissions.

[oracle@svl-oat ~]$ echo $ORACLE_SID

CDB001I

[oracle@svl-oat ~]$ sqlplus / as sysdba

SQL*Plus: Release 19.0.0.0.0 - Production on Thu May 21 14:30:39 2026

Version 19.23.0.0.0

Copyright (c) 1982, 2023, Oracle. All rights reserved.

Connected to:

Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production

Version 19.23.0.0.0

SQL> show pdbs

CON_ID CON_NAME OPEN MODE RESTRICTED

---------- ------------------------------ ---------- ----------

2 PDB$SEED READ ONLY NO

3 TESTZ_APP_006I READ WRITE NO

4 RCLON_TMR_003I MOUNTED

5 RCLON_TMR_002I MOUNTED

6 RCLON_TMR_001I MOUNTED

7 CLONZ_TMR_002I MOUNTED

8 TESTZ_TMR_003I READ WRITE NO

10 TESTZ_APP_004I READ WRITE NO

11 CLONZ_APP_001I READ WRITE NO

12 RCLON_APP_003I READ WRITE NO

SQL> alter session set container=TESTZ_TMR_003I;

Session altered.

SQL> create user test01 identified by "test_expire";

User created.

SQL> grant connect to test01;

Grant succeeded.

SQL>

Test connection with Oracle 26ai Client

I will first set the ORACLE_HOME variable on the appropriate client directory.

[oracle@bastion client26ai]$ echo $ORACLE_HOME /opt/oracle/client19c [oracle@bastion client26ai]$ export ORACLE_HOME=/opt/oracle/client26ai [oracle@bastion client26ai]$ echo $ORACLE_HOME /opt/oracle/client26ai [oracle@bastion client26ai]$

I will update the PATH variable to get tnsping and sqlplus binary from the appropriate Oracle 26ai Client.

[oracle@bastion client26ai]$ echo $PATH /usr/share/Modules/bin:/usr/local/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/opt/oracle/client19c/bin:/opt/oracle/sqlcl-24.1.0.087.0929//bin [oracle@bastion client26ai]$ export PATH=/opt/oracle/client26ai/bin [oracle@bastion client26ai]$ echo $PATH /opt/oracle/client26ai/bin [oracle@bastion client26ai]$

I will check that the appropriate tnsping and sqlplus is taken.

[oracle@bastion client26ai]$ which sqlplus /opt/oracle/client26ai/bin/sqlplus [oracle@bastion client26ai]$ which tnsping /opt/oracle/client26ai/bin/tnsping

I checked that the connection to target PDB is working.

[oracle@bastion ~]$ tnsping svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com TNS Ping Utility for Linux: Version 23.26.1.0.0 - Production on 21-MAY-2026 16:28:56 Copyright (c) 1997, 2026, Oracle. All rights reserved. Used parameter files: /opt/oracle/client19c/network/admin/sqlnet.ora Used EZCONNECT adapter to resolve the alias Attempting to contact (DESCRIPTION=(CONNECT_DATA=(SERVICE_NAME=testz_tmr_003i.db.jewlab.oraclevcn.com))(ADDRESS=(PROTOCOL=tcp)(HOST=X.X.1.135)(PORT=1521))) OK (0 msec) [oracle@bastion ~]$

The TNS_ADMIN used is from the 19c Oracle client directory, which is absolutely not a problem.

[oracle@bastion ~]$ echo $TNS_ADMIN /opt/oracle/client19c/network/admin [oracle@bastion ~]$Test sqlplus connection with Oracle 26ai Client

As I can see on my client side, I do not have any sqlplus connection running right now.

[opc@bastion ~]$ ss -nop | grep 1521 [opc@bastion ~]$

I will generate a sqlplus connection.

[oracle@bastion client26ai]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com SQL*Plus: Release 23.26.1.0.0 - Production on Thu May 21 16:34:23 2026 Version 23.26.1.0.0 Copyright (c) 1982, 2025, Oracle. All rights reserved. Connected to: Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production Version 19.23.0.0.0 SQL>

And I can see that I have got a sqlplus connection with no timer/keepalive.

[opc@bastion ~]$ ss -nop | grep 1521 tcp ESTAB 0 0 X.X.0.89:51404 X.X.1.135:1521 [opc@bastion ~]$

I will now configure Oracle Dead Connection Detection with SQLNET.EXPIRE_TIME parameter set in the client sqlnet.ora with a value of 1 minute.

[oracle@bastion ~]$ cd $TNS_ADMIN [oracle@bastion admin]$ /usr/bin/grep -i expire sqlnet.ora [oracle@bastion admin]$ [oracle@bastion admin]$ /usr/bin/vi sqlnet.ora [oracle@bastion admin]$ /usr/bin/grep -i expire sqlnet.ora SQLNET.EXPIRE_TIME=1 [oracle@bastion admin]$

I will run a new sqlplus connection.

[oracle@bastion admin]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com SQL*Plus: Release 23.26.1.0.0 - Production on Thu May 21 16:41:06 2026 Version 23.26.1.0.0 Copyright (c) 1982, 2025, Oracle. All rights reserved. Last Successful login time: Thu May 21 2026 16:34:23 +02:00 Connected to: Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production Version 19.23.0.0.0 SQL>

I can now see that I have got a connection configured with a timer and keep alive remaining of 38s.

[opc@bastion ~]$ ss -nop | grep 1521 tcp ESTAB 0 0 X.X.0.89:62868 X.X.1.135:1521 timer:(keepalive,38sec,0) [opc@bastion ~]$

Let’s configure the EXPIRE_TIME with a value of 15 minutes.

[oracle@bastion admin]$ /usr/bin/vi sqlnet.ora [oracle@bastion admin]$ /usr/bin/grep -i expire sqlnet.ora SQLNET.EXPIRE_TIME=15

I run a new sqlplus connection.

[oracle@bastion admin]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com SQL*Plus: Release 23.26.1.0.0 - Production on Thu May 21 16:43:25 2026 Version 23.26.1.0.0 Copyright (c) 1982, 2025, Oracle. All rights reserved. Last Successful login time: Thu May 21 2026 16:42:29 +02:00 Connected to: Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production Version 19.23.0.0.0 SQL>

And I now have got a connection configured with a timer and keep alive remaining of 14min.

[opc@bastion ~]$ ss -nop | grep 1521 tcp ESTAB 0 0 X.X.0.89:60630 X.X.1.135:1521 timer:(keepalive,14min,0) [opc@bastion ~]$

So, all good Oracle 26ai Client is supporting Dead Connection Detection with SQLNET.EXPIRE_TIME parameter.

Let’s test it with Oracle 19c ClientWe can easily confirm again that Oracle 19c Client does not support Dead Connection Detection on the client side.

Let’s move back to Oracle 19c Client home.

[oracle@bastion admin]$ export PATH=/opt/oracle/client19c/bin [oracle@bastion admin]$ which sqlplus /opt/oracle/client19c/bin/sqlplus

Run a sqlplus connection.

[oracle@bastion admin]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com SQL*Plus: Release 19.0.0.0.0 - Production on Thu May 21 16:48:09 2026 Version 19.3.0.0.0 Copyright (c) 1982, 2019, Oracle. All rights reserved. Last Successful login time: Thu May 21 2026 16:46:07 +02:00 Connected to: Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production Version 19.23.0.0.0 SQL>

And check connection configuration.

[opc@bastion ~]$ ss -nop | grep 1521 tcp ESTAB 0 0 X.X.0.89:64078 X.X.1.135:1521 [opc@bastion ~]$

There is no timer/keepalive handled with Oracle 19c Client.

To wrap up…Oracle Database 26ai Client is now supporting Dead Connection Detection on the client side. For our customer configuration this will help the client to check every X minutes (EXPIRE_TIME configured value) for Dead Connection. So if the CMAN and listener connections have already died and if for any reason the Network Load Balancer is still keeping the connection with the client, the client will close the connection after X minutes.

L’article Oracle Database 26ai Client and SQLNET.EXPIRE_TIME est apparu en premier sur dbi Blog.

Azure Bootcamp Switzerland 2026 edition

Today I attended the Azure Bootcamp Switzerland event in Bern. Here is a summary of what I saw and what I learned in the sessions.

The opening keynote was about Azure Sovereign Architecture where the presenter gave us an update on the current Azure/Microsoft projects. We also had an explanation on how sovereignty works.

Then I joined a session titled “Time Bombs In Entra ID – How Well Are Your Entra ID Apps Managed?”. The speaker explained to us how Azure App registration and service principal really work. He also gave us some advice on best practices when using this kind of Azure/Entra resource.

Before the lunch break I joined a session on how some architects resolved the “multiple teams needed to deploy something” problem. They automated the deployment with CI/CD and Terragrunt. They did a demo on how they use their code and how they make infrastructure changes with it.

After the lunch, I chose to go in a more network oriented presentation. The topic was how to get rid of VPN by using an Azure service called Global Secure Access. Even though I’m not convinced that we can get rid of VPNs, this option could be something for highly Microsoft infrastructure as it uses the Microsoft backbone for all the network routing.

The last two sessions I attended sessions on Azure Policy. The topics were first using code to deploy Azure policies, as it’s a better way to have them identical in multiple environments and as it as also faster than using the Azure interface, which is slow. The second one was about using conditional access as safer alternative for securing Azure tenants with policies. This method is quite interesting but requires a paid version of Entra to be activated.

Finally, for the closing keynote, we had a presentation about an application developed by a Swiss company that helps emergency services coordinate. It’s allowing call centers to locate and contact closest to scene savers and organize their deployment.

Once again, I’m glad that could attend this event. I learned quite a bunch of things and could also refresh my memory on some other topics. The sessions are long enough to detail a topic and the speakers are always performing well.

L’article Azure Bootcamp Switzerland 2026 edition est apparu en premier sur dbi Blog.

Reduce downtime when refreshing your non-production databases using Multitenant

You probably refresh your non-production Oracle databases with production data from time to time or on a regular basis. Without Multitenant, the most common procedure to do this refresh is a DUPLICATE FROM BACKUP with RMAN. The drawback is the unavailability of the database being refreshed during the DUPLICATE. You first need to remove the old version of the database, then start the DUPLICATE and wait until it’s finished. If you have Enterprise Edition and enough CPU, you can lower the time needed for the refresh by allocating a sufficient number of channels. But with a small number of CPU (which is normal for a non-production server), or eventually with Standard Edition (single channel RMAN operations only), a multi-TB database refresh can take several hours to complete. And if it fails for some reasons, you need to retry the refresh, extending even more the downtime.

Multitenant brought new possibilities for refreshing a database, and my favorite one is a CREATE PLUGGABLE DATABASE from a database link (DB link). It’s dead easy compared to a DUPLICATE FROM BACKUP on a non-CDB database. And you can lower the downtime to the very minimum. Here is how I did this for several projects.

How to lower the downtime to the minimum when refreshing a non-production PDB?You probably know that one of the advantage of a pluggable database is the easiness of changing its name. You just need to stop the PDB, rename it, and restart it. You can then use this technique to refresh a PDB under a temporary name and let the actual PDB available during the refresh. Once the refresh is finished, drop or rename the actual PDB, and rename the newest one to its target name. Even if your refresh takes hours, your downtime is limited to a couple of seconds/minutes.

Step 1: add an additional grant for source PDB’s administratorThe PDB administrator on the source database must have the CREATE PLUGGABLE DATABASE privilege:

ssh oracle@p01-srv-ora

. oraenv <<< P19PMT

sqlplus / as sysdba

Alter session set container=P19_ERP;

grant create pluggable database to SYSERP;

exitThe target server must have a TNS entry to the source PDB (production). If your source PDB and its container are protected by a Data Guard configuration, dont’t forget to add both addresses:

ssh root@t01-srv-ora

su – oracle

. oraenv <<< D19PMT

vi $ORACLE_HOME/network/admin/tnsnames.ora

…

P19_ERP =

(DESCRIPTION =

(LOAD_BALANCE = OFF)

(FAILOVER = ON)

(ADDRESS_LIST =

(ADDRESS = (PROTOCOL = TCP)(HOST = p01-srv-ora)(PORT = 1521))

(ADDRESS = (PROTOCOL = TCP)(HOST = p02-srv-ora)(PORT = 1521))

)

(CONNECT_DATA =

(SERVER = DEDICATED)

(SERVICE_NAME = P19_ERP)

)

)

tnsping P19_ERP

…

A DB link is required on the target container:

ssh root@t01-srv-ora

su – oracle

. oraenv <<< D19PMT

sqlplus / as sysdba

CREATE DATABASE LINK P19_ERP CONNECT TO SYSERP IDENTIFIED BY "*************" USING 'P19_ERP';

select count(*) from dual@P19_ERP;

COUNT(*)

----------

1

exitBasically, refresh will have 5 main tasks:

- create a new PDB with a temporary name _NEW on the target container from the source PDB

- start the new PDB for its correct registration in the container

- run an optional script for modifying production data (masking, disabling tasks, …)

- stop and rename the current PDB to _OLD, then start it again

- stop and rename the new PDB to its target name and start it again

Task 2 is needed because you cannot rename a PDB immediately after creation. You first need to open it, then close it for being able to change its name.

Let’s create 2 scripts on the target server, one shell script and one SQL script:

vi /home/oracle/scripts/refresh_D19_ERP.sh

#!/bin/bash

export ORACLE_SID=D19PMT

export REFRESH_LOG=/home/oracle/scripts/log/refresh_D19_ERP_`date +%d_%m_%Y-%H_%M_%S`.log

export ORACLE_HOME=`cat /etc/oratab | grep $ORACLE_SID | awk -F ':' '{print $2;}'`

date >> $REFRESH_LOG

$ORACLE_HOME/bin/sqlplus / as sysdba @/home/oracle/scripts/refresh_D19_ERP.sql >> $REFRESH_LOG

date >> $REFRESH_LOG

exit 0

vi /home/oracle/scripts/refresh_D19_ERP.sql

set timing on

show pdbs

alter pluggable database D19_ERP_OLD close immediate;

Drop pluggable database D19_ERP_OLD including datafiles;

show pdbs

create pluggable database D19_ERP_NEW from P19_ERP@P19_ERP ;

show pdbs

alter pluggable database D19_ERP_NEW open;

show pdbs

alter session set container=D19_ERP_NEW;

@/home/oracle/scripts/post_refresh_D19_ERP.sql

alter session set container=CDB$ROOT;

alter pluggable database D19_ERP close immediate;

alter pluggable database D19_ERP rename global_name to D19_ERP_OLD;

alter pluggable database D19_ERP_OLD open;

show pdbs

alter pluggable database D19_ERP_NEW close immediate;

alter pluggable database D19_ERP_NEW rename global_name to D19_ERP;

Alter pluggable database D19_ERP open;

Alter pluggable database D19_ERP save state;

show pdbs

exitIt does the job, although these are very basic scripts: further controls could be added to trap errors, manage services, and so on.

Step 5 : schedule the refreshScheduling can be done through the crontab, for example every evening at 11.30PM:

crontab -l | grep D19_ERP | grep refresh

30 23 * * * sh /home/oracle/scripts/refresh_D19_ERP.sh

This is definitely a smart solution as soon as you have enough space on disk to have 2 copies of the PDB. It’s quite reliable and ticks all the boxes where I deployed these scripts.

L’article Reduce downtime when refreshing your non-production databases using Multitenant est apparu en premier sur dbi Blog.

OGG-08502 Path not found error from OGG Receiver Service

Recently, after a successful migration to GoldenGate 26ai, a customer complained that he was seeing a lot of the following error in the ggserr.log file of a GoldenGate deployment (I replaced the names for the purpose of this blog).

2026-05-18T14:32:35.948+0200 ERROR OGG-08502. Oracle GoldenGate Receiver Service for Oracle: Path path21 not found.More precisely, in that case, path21 is a distribution path sending trail files from deployment ogg_test_02 to ogg_test_01. And the error shown above appeared in the log file of the ogg_test_01 deployment.

While this error did not seem to indicate any operational issue in the replication, after checking on multiple environments, I confirmed that it appears everywhere. So what is happening exactly ?

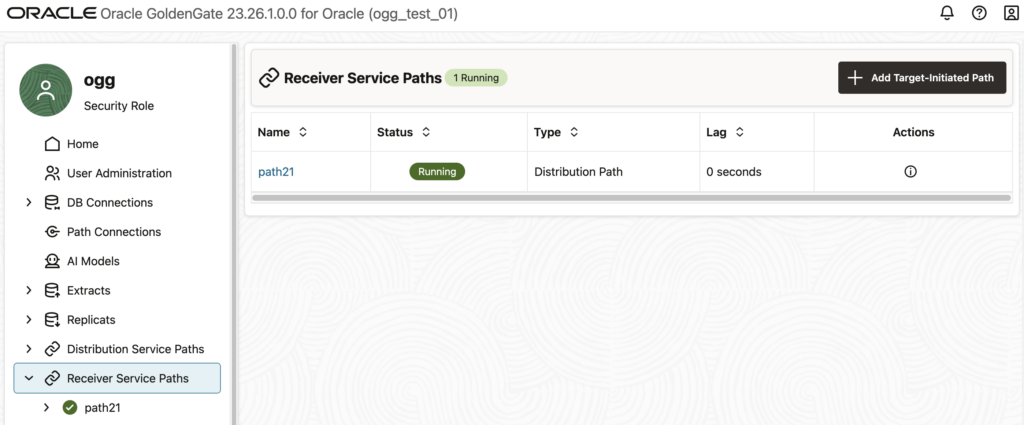

If you get this error and do not know where it comes from, log in to the web UI of the affected deployment, and go to the Receiver Service Paths tab. You should see a list of the distribution paths that are connecting to your deployments. The example below shows the path21 that is mentioned in the error.

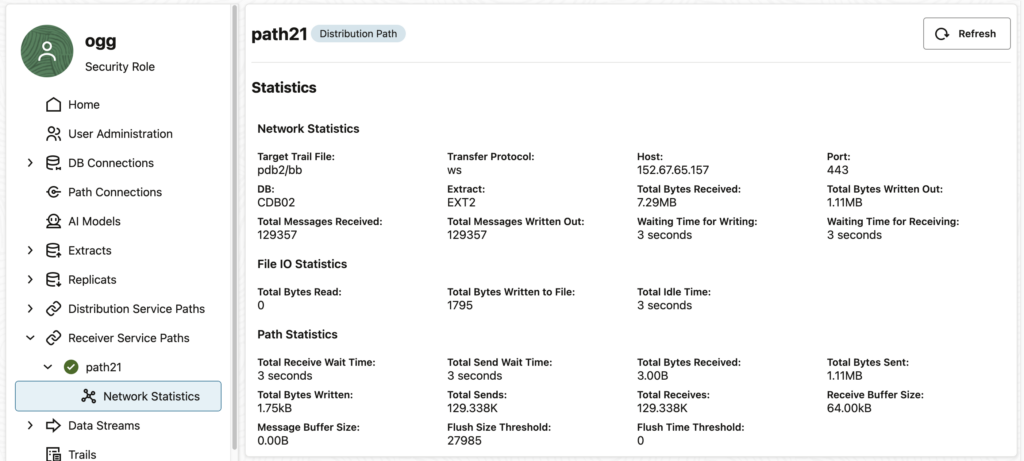

If you click on this path… Nothing happens ! And by “nothing”, I mean “nothing abnormal”. In fact, the statistics are properly displayed (see below), and there is no error shown to the user. However, if you look at your ggserr.log file you will see that the error given above appears.

At first glance, this might not seem like a huge issue, because if you don’t click on the receiver path, you will not get the error. However, in the log file of the customer, the error appeared regularly. Every minute, to be precise.

Why do I get this error even when I’m not accessing the web UI ?Luckily, when debugging this issue, I started by putting the target in a blackout in the Oracle Enterprise Manager. To my surprise, the error was gone during the blackout and reappeared right after.

In this case, the Enterprise Manager Plug-in for Oracle GoldenGate is monitoring the status of the deployment every minute and generates the error in the process.

When looking at the targets in the OEM, there is no error. Again, no operational impact.

Does it depend on the way you create the distribution path ?GoldenGate offers multiple ways of managing deployments : REST API, adminclient, or the web UI. Unfortunately, some bugs (and some features…) mean that you should avoid managing some objects with some of these tools (read why you shouldn’t create profiles through the adminclient, for instance).

In this specific case, all distribution path creation methods lead to the same error in the log file. It doesn’t matter whether you create the distribution path with the adminclient, the REST API or the web UI. They will all lead to this error.

Let’s dig a bit to see what is happening behind the scenes. By looking at the restapi.log file (read my blog on how to analyze REST API logs efficiently), we can see the full error:

2026-05-18 09:08:58.402+0000 ERROR|RestAPI.recvsrvr | Request #9: {

"context": {

"httpContextKey": 140097141801744,

"verbId": 2,

"verb": "GET",

"originalVerb": "GET",

"uri": "/services/v2/targets/path21",

"protocol": "http",

"headers": {

...

},

"host": "vmogg",

"securityEnabled": false,

"authorization": {

"authUserName": "ogg",

"authUserRole": "Security",

"authMode": "Cookie"

},

"requestId": 8,

"uriTemplate": "/services/{version}/targets/{path}",

"catalogUriTemplate": "/services/{version}/metadata-catalog/path"

},

"isScaRequest": true,

"content": null,

"parameters": {

"uri": {

"path": "path21",

"version": "v2"

},

"query": {

"WindowRef": "%2Fservices%2Fv2%2Fcontent%2F%23%2FrecvsrvrPaths%2Fpath21%2FpathNetworkStats"

}

}

}

Response: {

"context": {

...

},

"isScaResponse": true,

"content": {

"$schema": "api:standardResponse",

"links": [

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21",

"mediaType": "application/json"

},

{

"rel": "self",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21",

"mediaType": "application/json"

}

],

"messages": [

{

"$schema": "ogg:message",

"title": "Path path21 not found",

"code": "OGG-08502",

"severity": "ERROR",

"issued": "2026-05-18T09:08:58Z",

"type": "https://www.rfc-editor.org/rfc/rfc9110.html#name-status-codes"

}

]

}

}The issue comes from the following endpoint : /services/v2/targets/path21. It is described in the documentation under Retrieve an existing Oracle GoldenGate Collector Path. But looking at another endpoint described in Get a list of distribution paths, we get the following response:

{

"$schema": "api:standardResponse",

"links": [

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",

"mediaType": "text/html"

},

{

"rel": "self",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",

"mediaType": "text/html"

},

{

"rel": "describedby",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/metadata-catalog/targets",

"mediaType": "application/schema+json"

}

],

"messages": [],

"response": {

"$schema": "ogg:collection",

"items": [

{

"links": [

{

"rel": "parent",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",

"mediaType": "application/json"

},

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",

"mediaType": "application/json"

}

],

"$schema": "ogg:collectionItem",

"name": "path21",

"status": "running",

"targetInitiated": false

}

]

}

}Here, we see that the endpoint associated with the path21 object is not recvsrvr/v2/targets/path21 but recvsrvr/v2/targets/path21_ogg26dist2_7811. And looking at this second endpoint, we do not get an error.

{

"$schema": "api:standardResponse",

"links": [

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",

"mediaType": "text/html"

},

{

"rel": "self",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",

"mediaType": "text/html"

},

{

"rel": "describedby",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/metadata-catalog/path",

"mediaType": "application/schema+json"

}

],

"messages": [],

"response": {

"name": "path21",

"status": "running",

"$schema": "ogg:distPath",

"source": {

"uri": "trail://localhost:7811/services/v2/sources?trail=pdb2/bb"

},

"target": {

"$schema": "ogg:distPathEndpoint",

"uri": "ws://vmogg/services/v2/targets?trail=pdb2/bb"

},

"options": {

"network": {

"appOptions": {

"appFlushBytes": 27985,

"appFlushSecs": 1

},

"socketOptions": {

"tcpOptions": {

"ipDscp": "DEFAULT",

"ipTos": "DEFAULT",

"tcpNoDelay": false,

"tcpQuickAck": true,

"tcpCork": false,

"tcpSndBuf": 16384,

"tcpRcvBuf": 131072

}

}

}

}

}

}The problem is that it was never decided for path21 to be referred to as path21_ogg26dist2_7811 internally. And it looks like GoldenGate does not know about it either… So until the bug is corrected, you will have to filter this OGG-08502 Path not found error out of the ggserr.log file if you use it for monitoring.

L’article OGG-08502 Path not found error from OGG Receiver Service est apparu en premier sur dbi Blog.

What being an external consultant really changes

When people think about consultants, they usually focus on expertise. “They bring experience, frameworks, and best practices.”

That’s true, of course. However, that is not the most impactful aspect of the role.

The real shift happens somewhere less visible: positioning. As an outsider, you don’t just join a team.

You become something different. Over time, I’ve come to think of it as operating within a “shadow team.”

This invisible layer changes how you navigate politics, truth, and influence.

Let’s unpack that.

As an employee, you’re clearly part of the organization.

However, when you’re an external consultant, it’s a different story.

You sit inside delivery teams while remaining outside the organization’s long-term structure. This dual positioning creates what I call a shadow team.

You collaborate closely with internal stakeholders, influence decisions without owning them, and observe dynamics that others are too immersed in to see.

You’re close enough to matter, yet distant enough to stay objective.

This reshapes everything.

Every organization has internal politics, including priorities, power structures, historical tensions, and unwritten rules. The larger the organization, the more politics there are.

Employees must live within that system.

Consultants, on the other hand, can often see the system more clearly because they aren’t fully bound by it.

This doesn’t mean you’re outside of politics, though.

It means:

You can identify misalignments more quickly, notice when decisions are driven by structure, not logic and spot friction between teams that others consider “normal.”

But here’s the key difference:

- You are less constrained by long-term consequences.

- An employee may avoid challenging a decision due to its potential impact on their career.

- However, a consultant can raise the concern because their role is to add clarity, not preserve equilibrium.

- Still, this doesn’t mean ignoring politics. It means navigating them consciously without being controlled by them.

One of the most powerful—and most fragile—assets of being an external consultant lies in the neutrality that people attribute to you.

You are not:

- Competing for a promotion

- Defending a department

- Protecting past decisions

This creates a rare opportunity. You can become a trusted bridge between stakeholders

When done right, people will:

- Share concerns they wouldn’t voice internally

- Ask for your opinion as a “safe” perspective

- Use you to validate or challenge ideas

However, neutrality is not automatic, it must be earned and can easily be lost.

You lose it when:

- You align too strongly with one stakeholder

- You start defending internal logic instead of questioning it

- You behave like an insider too quickly

The best consultants maintain a delicate balance:

They are close enough to build trust and distant enough to stay credible.

Truth vs. Diplomacy: walking the tightropeThis is where the role becomes truly challenging.

As a consultant, you are often expected to:

- Tell the truth

- Challenge assumptions

- Highlight risks

However, you are also expected to:

- Maintain relationships

- Respect stakeholders

- Keep the project moving forward

These two expectations often conflict with each other.

The naive approach: “Just be brutally honest.”

This approach quickly fails. Brutality destroys trust.

The safe approach: “Say what people want to hear.”

This makes you irrelevant.

The real skill is delivering truth in a way that can be heard.

That means:

- Frame issues in terms of impact, not fault.

- Ask questions instead of making accusations.

- Adapt your message to your audience.

For example, rather than saying, “This process isn’t working at all”

A more measured approach might be: “I see a few risks associated with this process. Could we go over them together?”

The observation is the same.

However, the outcome is different.

Being a consultant isn’t just about knowledge. It’s also about positioning. You have a clearer view, speak more freely, and connect across sides.

However, our profession is based on a paradox. We must be objective enough to provide sound advice, yet also be fully committed to the task at hand. Additionally, we must offer honest feedback without hurting the client’s feelings or losing their trust.

At dbi services, we’re passionate about striking that delicate balance, whether the subject is ECM or any other area of our expertise. Learn more about us here.

L’article What being an external consultant really changes est apparu en premier sur dbi Blog.

Instruction-Based Data Analysis with Sparrow and Local LLM

- Risk classification — categorize each position into low, medium, or high risk based on loss percentage

- Concentration risk — flag overweight positions above 20% portfolio weighting

- Portfolio aggregation — total valuation, weighted average P&L, best and worst performer

Install and configure OEM plug-in for GoldenGate

If you are licensed for the GoldenGate Management Pack, using the Enterprise Manager plug-in for GoldenGate improves monitoring and management of your deployments. And after migrating to the Microservices Architecture, you should definitely update your plug-in and rediscover all targets. Let’s see how to do all that here.

In this blog, I will use the latest version of the Enterprise Manager (24ai) and monitor GoldenGate 26ai deployments. The overall workflow is the same for other versions of the Enterprise Manager and GoldenGate, provided OGG is in the Microservices Architecture.

Here are the main steps to monitor GoldenGate targets from the Enterprise Manager:

- Update the catalog in the Enterprise Manager

- Deploy the plug-in on the management server

- Deploy the plug-in on the agent

- Configure the discovery module

- Promote the new targets

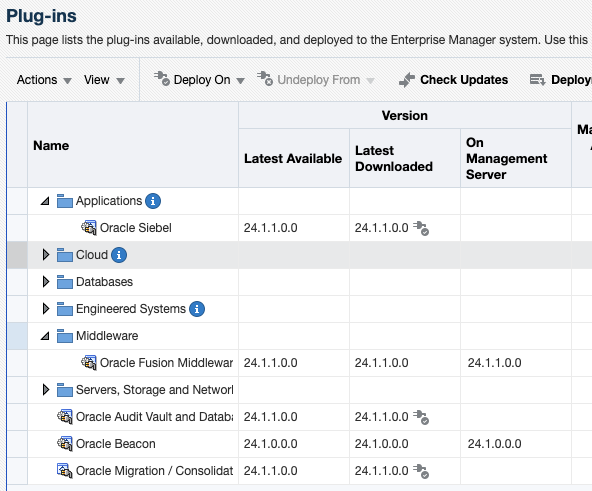

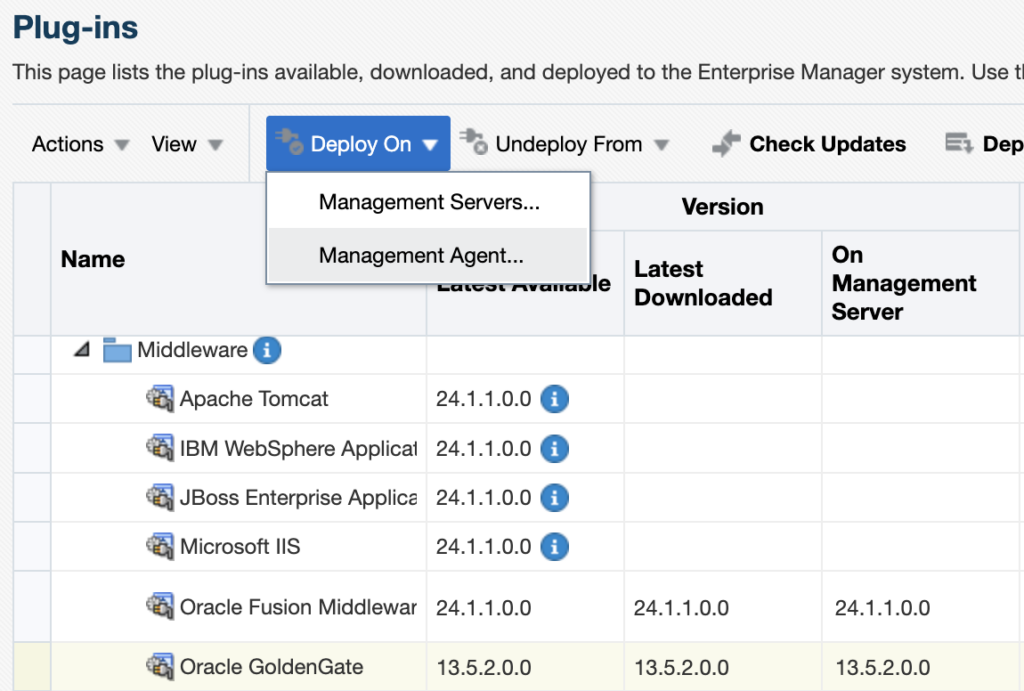

Before attempting to install the plug-in, make sure it is not already installed in your environment. To check this, go to Setup > Extensibility > Plug-ins, and expand the Middleware section. If you do not see any line named Oracle GoldenGate, it means the plug-in is not installed yet.

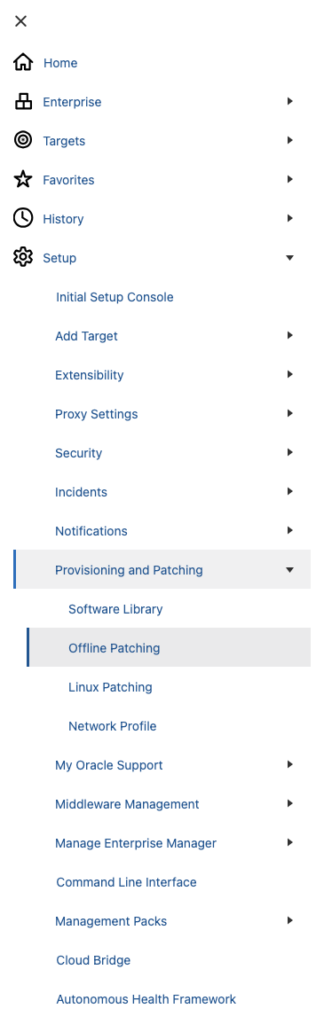

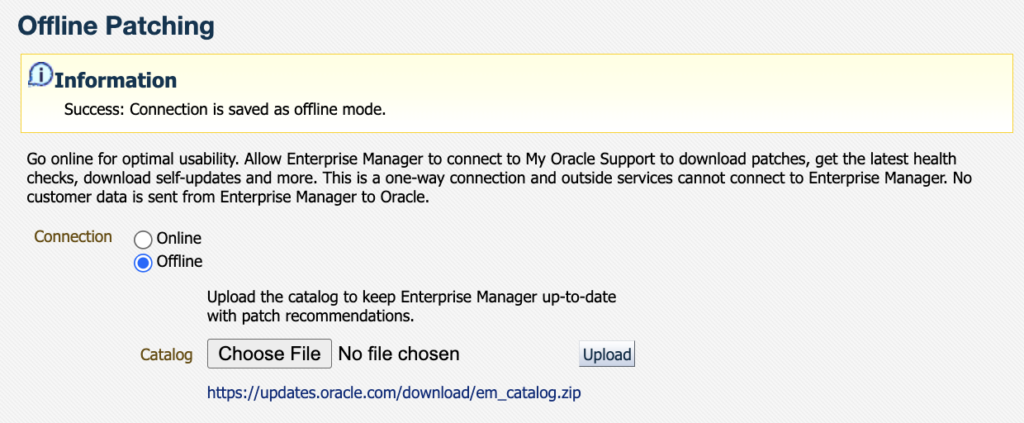

Since most OEM environments do not have access to the Oracle Support directly, we’ll download the plug-in in offline mode. To do so, go to the Setup > Provisioning and Patching > Offline Patching section.

Once in the Offline Patching section, make sure Offline is selected for the connection and download the catalog file as instructed from an environment with access to the Oracle support website. Transfer it to where you have access to the OEM UI, and upload it.

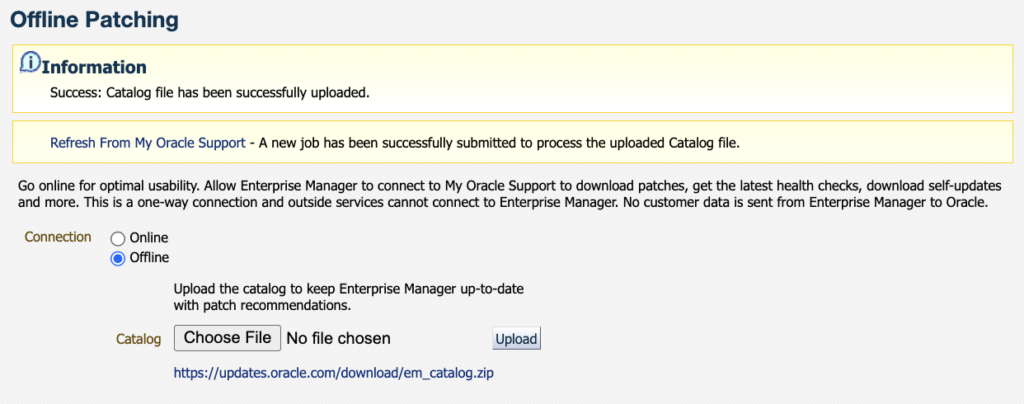

Once the catalog is uploaded, you should see the following information message.

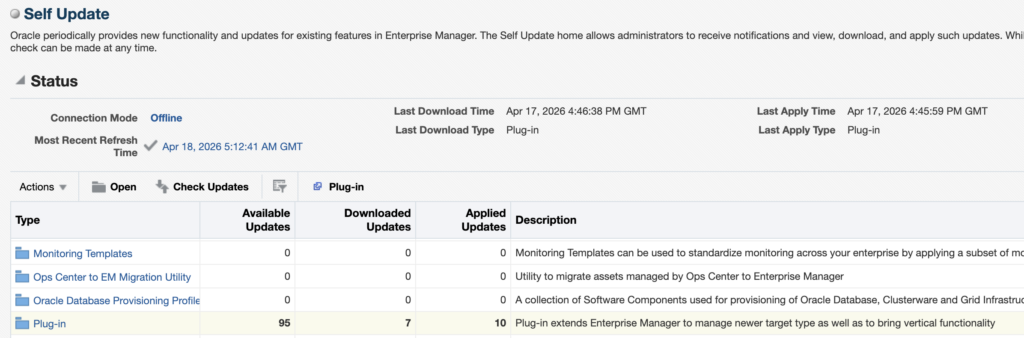

Then, go to Setup > Extensibility > Self Update and click on Check Updates.

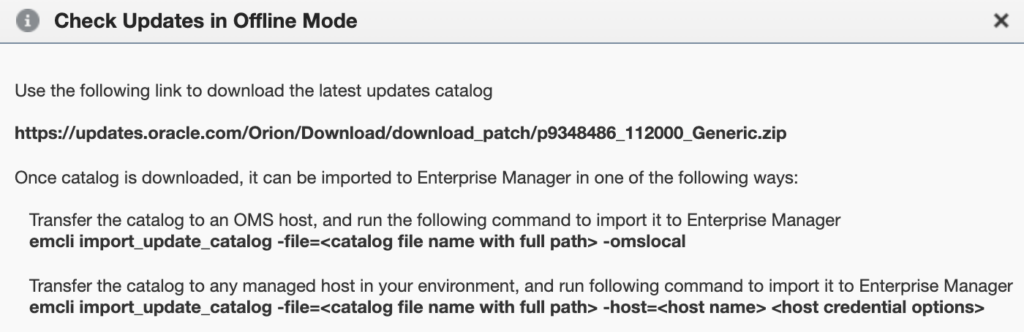

You should see the following pop-up appear, with a link from where you will be able to download the OEM Self Update catalog file. For reference, the one I had when writing this blog was the following : https://updates.oracle.com/Orion/Download/download_patch/p9348486_112000_Generic.zip

As instructed, transfer this patch to the OMS host and import it with emcli and the import_update_catalog action. You can also import it from another managed host. It should take around twenty seconds to import everything.

oracle@oem24:~/ [oem24] emcli import_update_catalog -file=/tmp/p9348486_112000_Generic.zip -omslocal

Processing catalog for Diagnostic Tools

Processing update: Diagnostic Tools - AHFFI 25.1.0.1.0 for Linux

Processing update: Diagnostic Tools - AHF 25.5.0.0.0 for HP

[...]

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of GoldenGate Microservices Architecture, in addition to the GoldenGate Classic Architecture

[...]

Successfully uploaded the Self Update catalog to Enterprise Manager. Use the Self Update Console to view and manage updates.

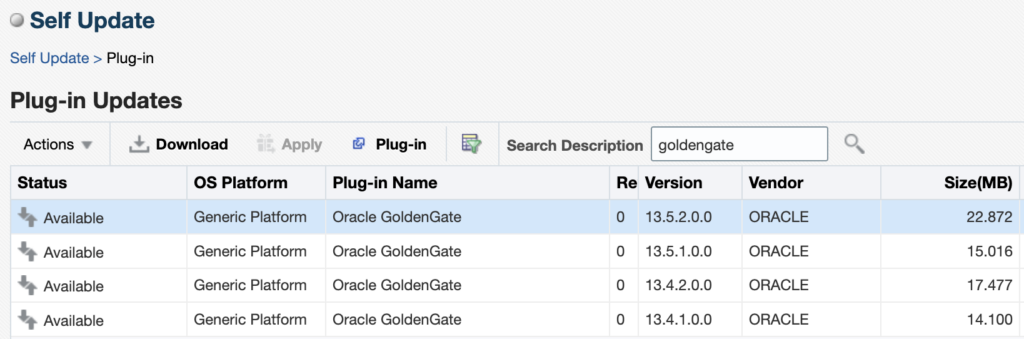

Time taken for import catalog is 17.289 seconds.When this is done, go back to the Self Update page, click on the Plug-In section. You will see the different versions of GoldenGate that are available. When I’m writing this blog, the latest version of the plug-in is 13.5.2.0.0 (the latest patch released in January 2026, 13.5.2.0.6, will be a topic for another blog). Click on the latest version and then on Download.

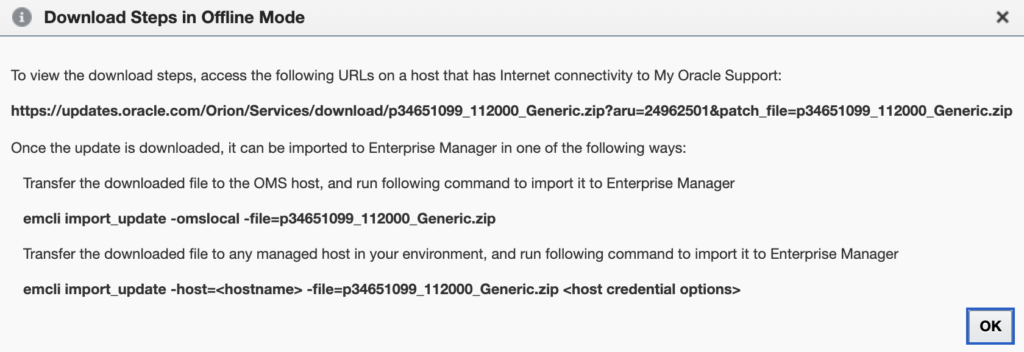

The following pop-up gives you the link from which you should download the plug-in update file. In my case, it was https://updates.oracle.com/Orion/Services/download/p34651099_112000_Generic.zip?aru=24962501&patch_file=p34651099_112000_Generic.zip.

Once the file is downloaded, import it in the same way as before with the catalog, but this time with the emcli import_update action.

oracle@oem24:~/ [oem24] emcli import_update -omslocal -file=/tmp/p34651099_112000_Generic.zip

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

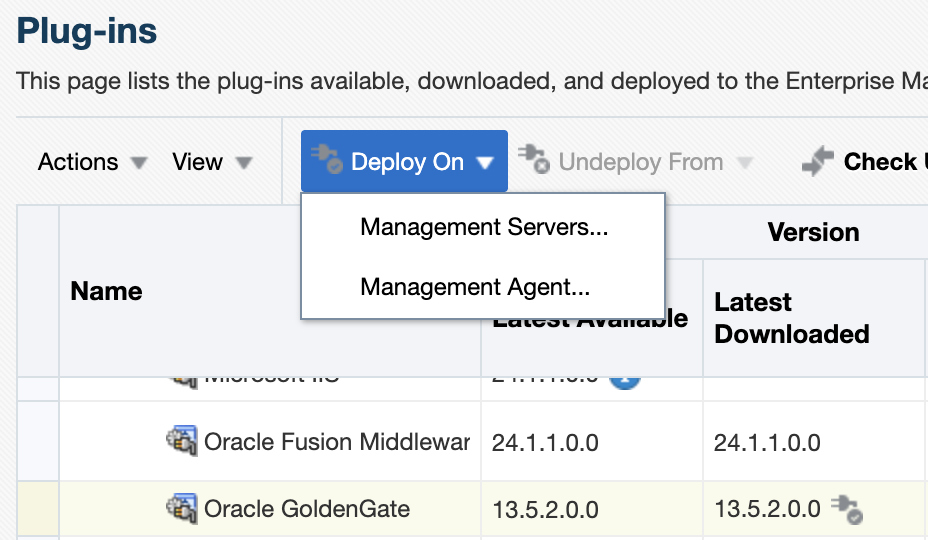

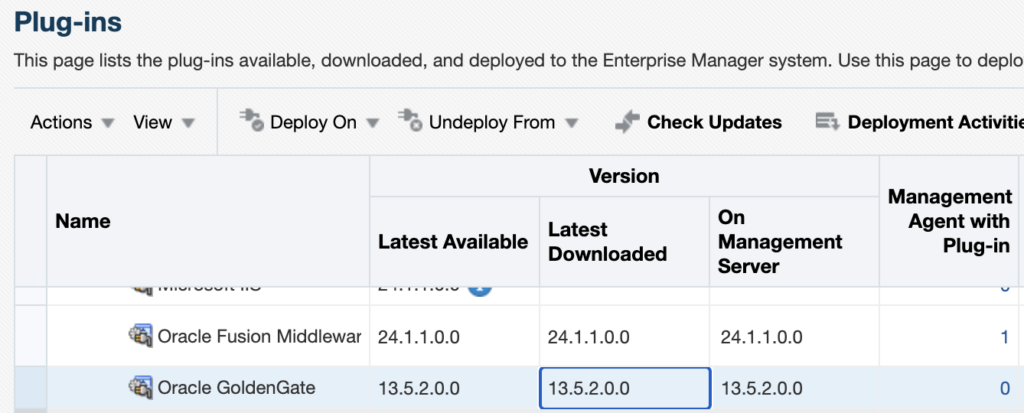

Successfully uploaded the update to Enterprise Manager. Use the Self Update Console to manage this update.Once this is done, go back to the Setup > Extensibility > Plug-in tab and expand the Middleware section. You should now see Oracle GoldenGate, and 13.5.2.0.0 as the downloaded version.

Warning : Deploying the plug-in on the management server will temporarily restart OMS components and briefly interrupt monitoring operations. To deploy the plug-in, you have two options:

- Deploying the plug-in from the web UI.

- Deploying the plug-in from the CLI.

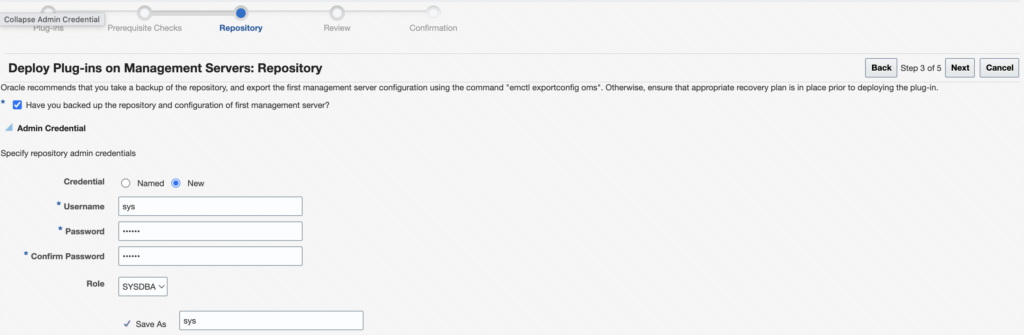

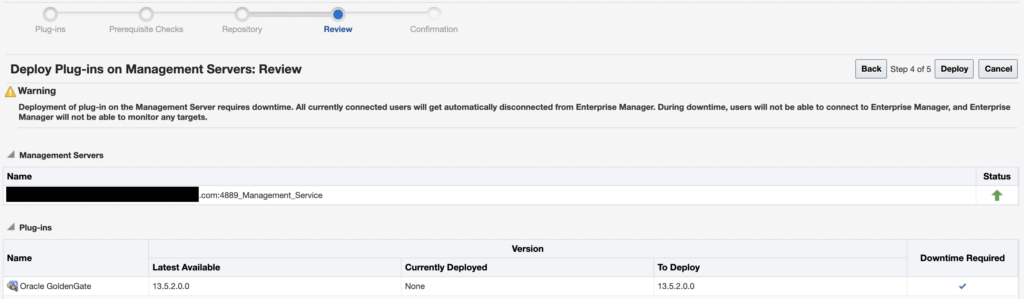

From the web UI, click on the Oracle GoldenGate plug-in, then on Deploy On, and deploy the plug-in on the Management Servers.

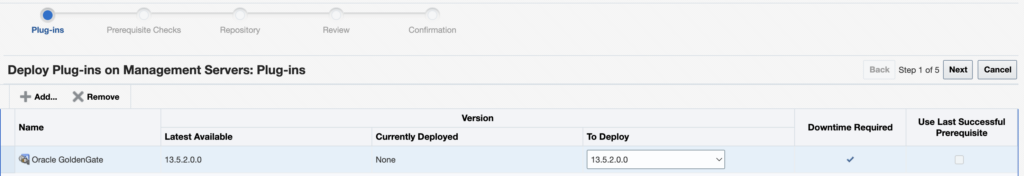

Make sure the correct version of the plug-in is chosen (13.5.2.0.0), and click on Next to run the prerequisite checks.

Once the checks are successfully completed, click on Next.

You should now select the repository credentials for the OEM. You should either use new credentials (if it’s a new environment) or use existing named credentials. Click on Next.

Once everything is done, click on Deploy.

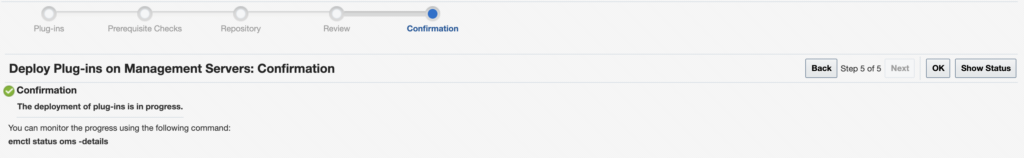

As instructed, you can check the status of the deployment with the emctl status oms -details command.

oracle@oem24:~/ [oem24] emctl status oms

Oracle Enterprise Manager 24ai Release 1

Copyright (c) 1996, 2024 Oracle Corporation. All rights reserved.

WebTier is Up

Oracle Management Server is Down

This is due to the following plug-ins being deployed on the management server or undeployed from it:

----------------------------------------

Plugin name: : Oracle GoldenGate

Version: : 13.5.2.0.0

ID: : oracle.fmw.gg

----------------------------------------Alternatively, you can deploy the plug-in with the following command, using the oracle.fmw.gg ID for the plug-in and the latest 13.5.2.0.0 version.

emcli deploy_plugin_on_server -plugin="oracle.fmw.gg:13.5.2.0.0"Once the plug-in is deployed on the Management Server, you can check again in the web UI : the latest version should be in the On Management Server section.

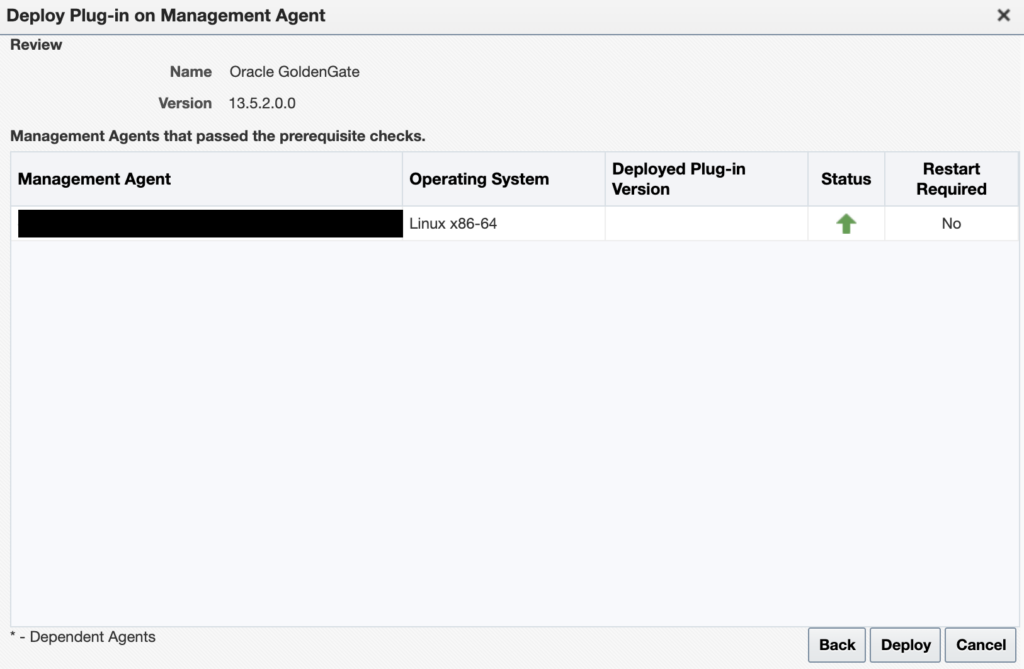

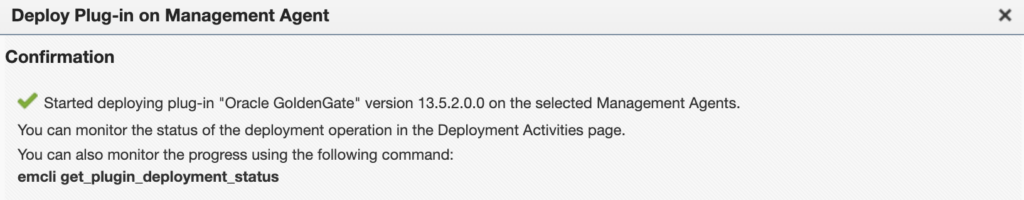

Deploy the plug-in on the agent

Deploy the plug-in on the agent

For each GoldenGate host where an OEM agent is running, deploy the plug-in. To do so, from the web UI, click on the Oracle GoldenGate plug-in, then on Deploy On, and select Management Agent.

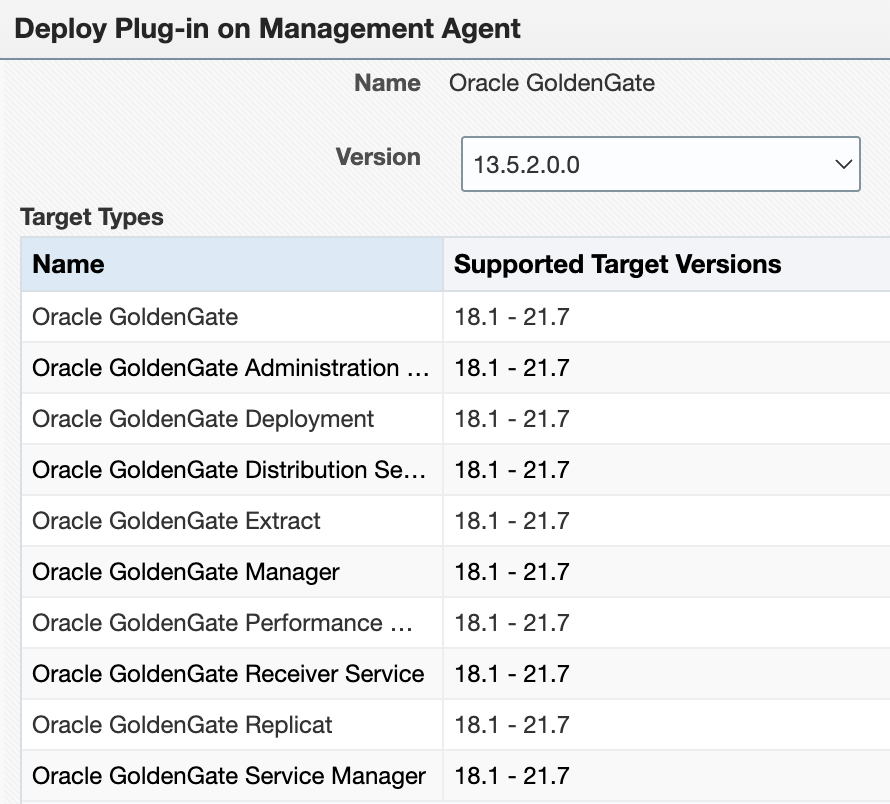

There is currently a bug with the Supported Target Versions. No matter your patch level, you will not see the latest versions of GoldenGate. Do not worry about this yet. Just make sure 13.5.2.0.0 is selected.

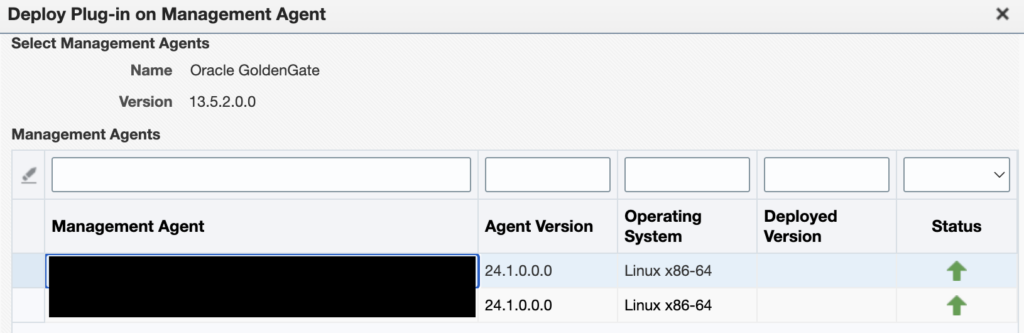

Then, select the agent on which you want to deploy the plug-in.

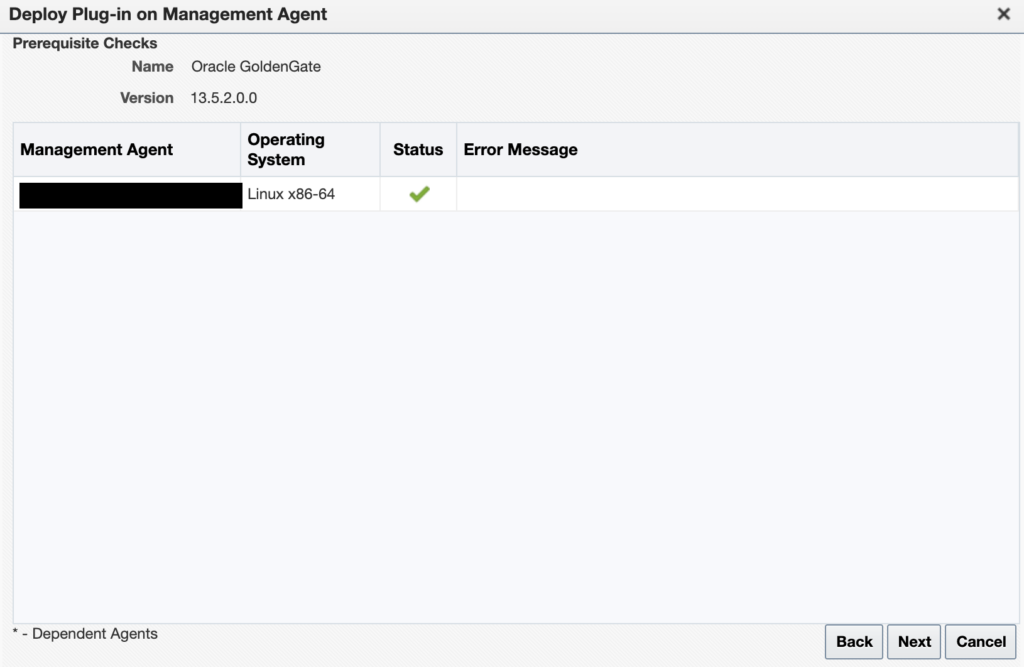

Let the prerequisite checks run…

And once everything is ready, click on Deploy.

You can check that everything is running properly with the emcli get_plugin_deployment_status command.

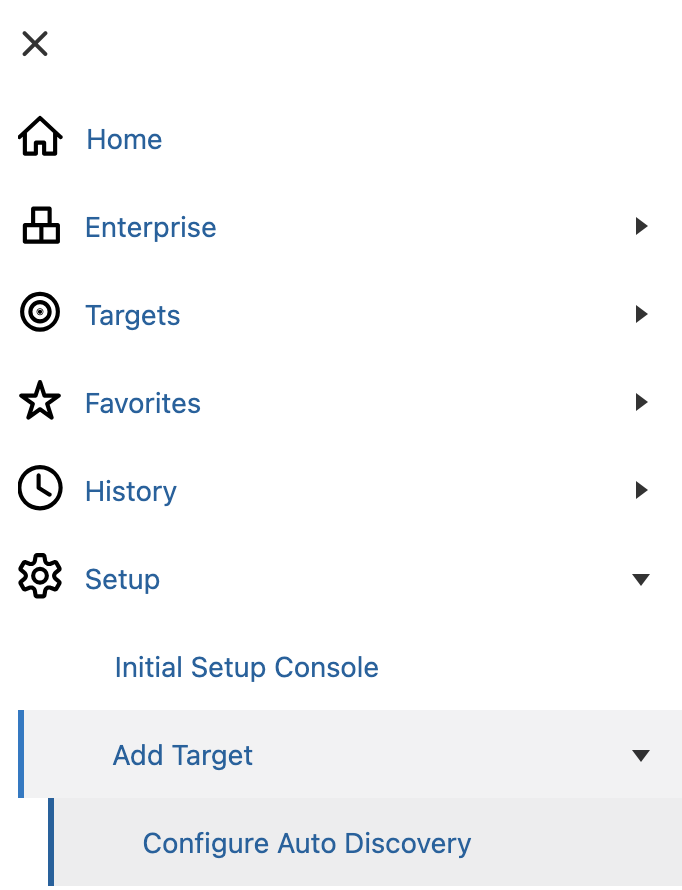

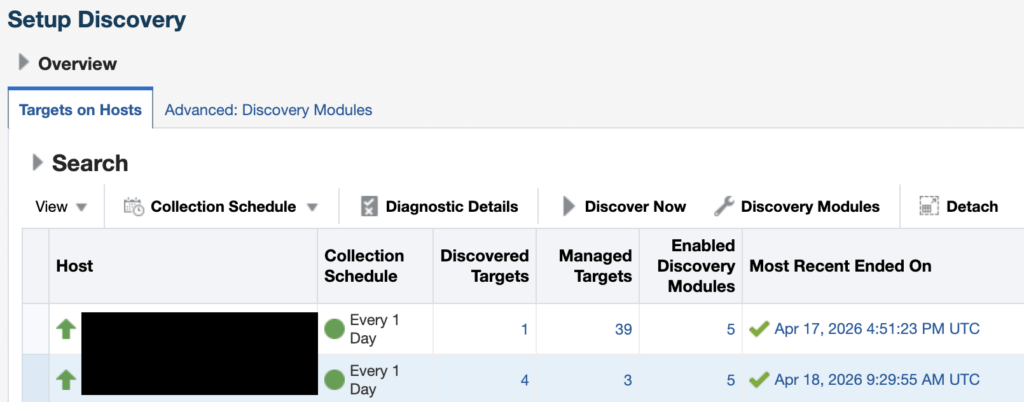

Configure GoldenGate monitoring in the Enterprise Manager

Configure GoldenGate monitoring in the Enterprise Manager

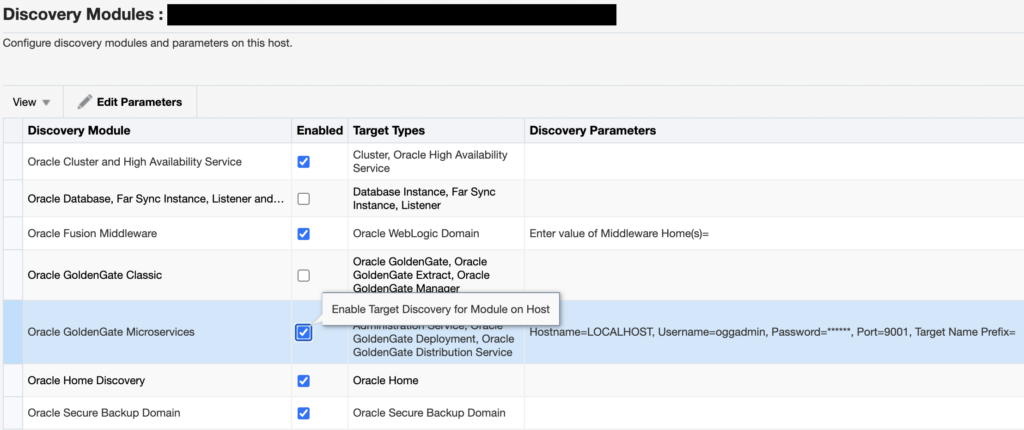

Once the plug-in is correctly deployed on the OMS host and on the GoldenGate host agent, you can configure the module. I will only cover the configuration for the Microservices Architecture. Go to the Setup > Add Target > Configure Auto Discovery tab.

Choose the correct agent host, and click on Discovery Modules.

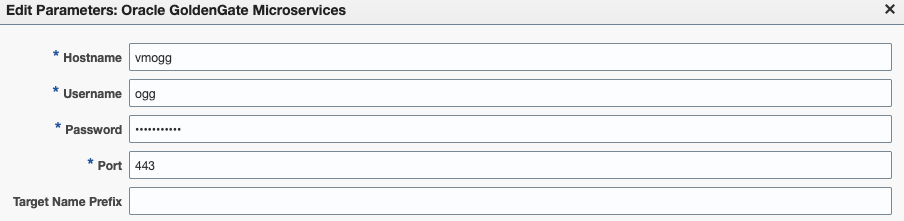

Enable the Oracle GoldenGate Microservices module, click on it, and then on Edit Parameters.

If you deployed GoldenGate with a reverse proxy, set up the plug-in as such.

If you deployed GoldenGate with a port for each service, enter the service manager port (7809, by default).

Warning : if your installation is secured with certificates, make sure to follow the instructions I gave in a blog to avoid EM-90000 errors when discovering new targets.

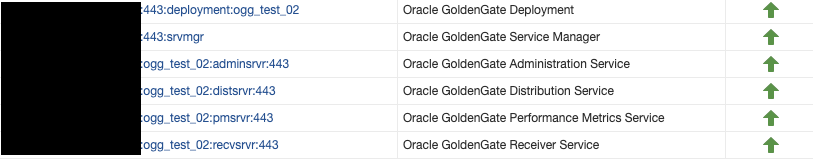

Once this is done, just go back to the Configure Auto Discovery section, click on the correct host, and then click on Discover Now. Then, go back to the Configure Auto Discovery section. You should now see a greater number of targets in the Discovered targets section.

If the number of targets did not increase, despite a successful discovery, check the blog linked above.

Click on the number of targets to jump to the Auto Discovery Results section. Select the newly discovered Service Manager target, and click on Promote. Once the target is promoted, you should see the new GoldenGate targets being monitored by the Enterprise Manager !

L’article Install and configure OEM plug-in for GoldenGate est apparu en premier sur dbi Blog.

World Password Day and Oracle Security

Posted by Pete On 11/05/26 At 12:37 PM

Securing Data in Oracle without Cost Options

Posted by Pete On 05/05/26 At 11:25 AM