Yann Neuhaus

Oracle Database 26ai Client and SQLNET.EXPIRE_TIME

We ran into an issue at one of our customers where Oracle client connections remained open for days, blocking some Avaloq JobNetz. After some testing, we fortunately found a solution: Oracle Database 26ai now supports SQLNET.EXPIRE_TIME on the client side. In this blog, I would like to describe the problem and then the tests that led us to this solution.

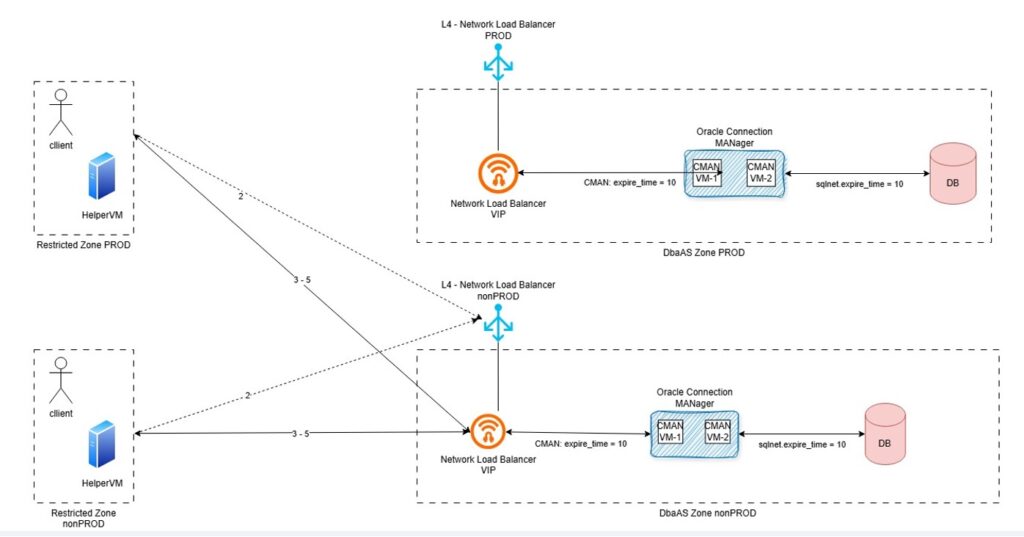

Environment and problem description

At our customer's site, client connections from the HelperVM are not established directly to the database listener. They go through the Network Load Balancer (NLB) and the Oracle Connection Manager (CMAN).

The diagram below describes the database connection establishment process.

This is how it works.

- 1 – The client looks up the connection details (ideally from Oracle Directory Service)

- 2 – The client connects to the Network Load Balancer

- 3 – The Network Load Balancer “forwards” the request to Oracle Connection Manager using the Virtual IP

- 4 – Oracle Connection Manager acts as a rule-based firewall and ensures that the target database service is running on one of the “white-listed” targets (next_hop)

- 5 – The Oracle Database establishes connectivity once the credentials are validated (the Oracle listener acts in between). The listener hands the connection over to the Oracle CMAN gateway process, which passes data back and forth between the client and the DB server and collects statistics.

By reverse engineering, we observed that the TCP connection between the Oracle client and Oracle CMAN is established through the Network Load Balancer Virtual IP.

The Network Load Balancer needs session persistence (“statefulness”) to be enabled. This means that once a connection is established, the NLB “remembers” it and fails it over in case of planned downtime.

We have been facing broken connectivity issues. Checking the socket connections with the Linux ss command (# ss -nop), we could see TCP connections hanging between the client and the NLB virtual IP (CNAME DNS entry). On the CMAN and DB server side, the connections had already been cleaned up by the Dead Connection Detection configuration. As shown in the diagram, EXPIRE_TIME is set to 10 minutes on the CMAN side and in the listener configuration of the VM Cluster database.

The connection was still open on the client side because:

- The client was still waiting for a result that would never come

- The TCP connection to the NLB Virtual IP still existed, although it was already closed on the CMAN and database listener side

- The TCP connection to the NLB would remain alive for days

The problem is that, by default, the Oracle client does not enable TCP keepalive, which is expected behavior. Oracle expects keepalive to be set on the server side, so that Dead Connection Detection is enforced for all clients.

In our case, TCP keepalive therefore has to be managed on the client side, and we are currently running the Oracle 19c Client.
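To make the socket-level mechanism concrete, here is a minimal Python sketch of what enabling TCP keepalive on a client socket looks like on Linux. It only illustrates the operating-system option that ss reports as a keepalive timer; it is not Oracle's implementation. The function names and the 600-second idle value (mirroring the 10-minute EXPIRE_TIME mentioned above) are assumptions made for the example.

```python
import socket


def enable_keepalive(sock: socket.socket, idle_seconds: int) -> None:
    """Enable TCP keepalive on sock; on Linux also set the idle time before the first probe."""
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE, 1)
    # TCP_KEEPIDLE is not available on every platform, so guard its use.
    if hasattr(socket, "TCP_KEEPIDLE"):
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_KEEPIDLE, idle_seconds)


def keepalive_enabled(sock: socket.socket) -> bool:
    """Return True if the SO_KEEPALIVE option is set on sock."""
    return sock.getsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE) != 0


if __name__ == "__main__":
    # Hypothetical client socket; nothing is connected in this sketch.
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        print(keepalive_enabled(sock))  # state before enabling keepalive
        enable_keepalive(sock, idle_seconds=600)
        print(keepalive_enabled(sock))  # state after enabling keepalive
```

A socket created without this option never sends keepalive probes, which is why an idle connection can stay in ESTAB state on the client long after the other side has gone away.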

EXPIRE_TIME handled on Oracle 19c Client

The Oracle 19c client does not support SQLNET.EXPIRE_TIME, aka “Oracle dead connection detection”, unless you work around it with the hidden (unsupported) connect string parameter ENABLE=BROKEN.

See the following blog from one of my former colleagues:

sqlnet-expire_time and enablebroken

And what about the Oracle 26ai Client? Let’s run some tests…

Installation of Oracle 26ai Client

In the lab, I will use the VM called bastion as the client. The bastion already has an Oracle 19c Client installed. I’m going to install the new Oracle 26ai Client on it.

First we need to download the client version, which can be done from the following website:

https://www.oracle.com/database/technologies/oracle26ai-linux-downloads.html

I will install Oracle 26ai Client version in /opt/oracle.

[root@bastion oracle]# pwd
/opt/oracle
[root@bastion oracle]# ls -ld client*
drwxr-xr-x. 52 oracle oinstall 4096 Jun 6 2024 client19c
drwxr-xr-x. 47 oracle oinstall 4096 Nov 11 2025 client_21c
[root@bastion oracle]#

I will first unzip the downloaded oracle zip file.

[oracle@bastion oracle]$ pwd
/opt/oracle
[oracle@bastion oracle]$ unzip -q LINUX.X64_2326100_client.zip

I will then rename the client installation directory:

[oracle@bastion oracle]$ ls -ld client*
drwxr-xr-x. 5 oracle oinstall 90 Jan 17 13:59 client
drwxr-xr-x. 52 oracle oinstall 4096 Jun 6 2024 client19c
drwxr-xr-x. 47 oracle oinstall 4096 Nov 11 2025 client_21c
[oracle@bastion oracle]$ mv client client26ai
[oracle@bastion oracle]$
[oracle@bastion oracle]$ ls -ld client*
drwxr-xr-x. 52 oracle oinstall 4096 Jun 6 2024 client19c
drwxr-xr-x. 47 oracle oinstall 4096 Nov 11 2025 client_21c
drwxr-xr-x. 5 oracle oinstall 90 Jan 17 13:59 client26ai
[oracle@bastion oracle]$

I will prepare the response file for the command line installation.

[oracle@bastion client26ai]$ cp -p response/client_install.rsp response/client_install_custom.rsp
[oracle@bastion client26ai]$ vi response/client_install_custom.rsp
[oracle@bastion client26ai]$ diff response/client_install.rsp response/client_install_custom.rsp
22c22
UNIX_GROUP_NAME=oinstall
26c26
INVENTORY_LOCATION=/opt/oracle/oraInventory
30c30
ORACLE_HOME=/opt/oracle/client26ai
34c34
ORACLE_BASE=/opt/oracle
48c48
oracle.install.client.installType=Administrator
[oracle@bastion client26ai]$

And I will run the Oracle 26ai Client installation.

[oracle@bastion client26ai]$ pwd
/opt/oracle/client26ai
[oracle@bastion client26ai]$ ls -ltrh
total 24K
-rwxrwx---. 1 oracle oinstall 500 Feb 6 2013 welcome.html
-rwxr-xr-x. 1 oracle oinstall 8.7K Jan 17 12:38 runInstaller
drwxr-xr-x. 4 oracle oinstall 4.0K Jan 17 12:38 install
drwxr-xr-x. 15 oracle oinstall 4.0K Jan 17 13:37 stage
drwxr-xr-x. 2 oracle oinstall 82 May 21 16:13 response
[oracle@bastion client26ai]$ ./runInstaller -silent -responseFile /opt/oracle/client26ai/response/client_install_custom.rsp
Starting Oracle Universal Installer...
Checking Temp space: must be greater than 415 MB. Actual 5025 MB Passed
Checking swap space: must be greater than 150 MB. Actual 4095 MB Passed
Preparing to launch Oracle Universal Installer from /tmp/OraInstall2026-05-21_04-16-17PM. Please wait ...
[WARNING] [INS-32016] The selected Oracle home contains directories or files.
ACTION: To start with an empty Oracle home, either remove its contents or specify a different location.
*********************************************
Package: compat-openssl10-1.0.2 (x86_64): This is a prerequisite condition to test whether the package "compat-openssl10-1.0.2 (x86_64)" is available on the system.
Severity: IGNORABLE
Overall status: VERIFICATION_FAILED
Error message: PRVF-7532 : Package "compat-openssl10(x86_64)-1.0.2" is missing on node "bastion"
Cause: A required package is either not installed or, if the package is a kernel module, is not loaded on the specified node.
Action: Ensure that the required package is installed and available.
-----------------------------------------------
[WARNING] [INS-13014] Target environment does not meet some optional requirements.
CAUSE: Some of the optional prerequisites are not met. See logs for details. /opt/oraInventory/logs/installActions2026-05-21_04-16-17PM.log.
ACTION: Identify the list of failed prerequisite checks from the log: /opt/oraInventory/logs/installActions2026-05-21_04-16-17PM.log. Then either from the log file or from installation manual find the appropriate configuration to meet the prerequisites and fix it manually.
The response file for this session can be found at:
/opt/oracle/client26ai/install/response/client_2026-05-21_04-16-17PM.rsp
You can find the log of this install session at:
/opt/oraInventory/logs/installActions2026-05-21_04-16-17PM.log
The installation of Oracle Client 26ai was successful.
Please check '/opt/oraInventory/logs/silentInstall2026-05-21_04-16-17PM.log' for more details.
Successfully Setup Software with warning(s).
[INS-10115] All configuration tools were previously ran successfully, no further configuration is required.
[oracle@bastion client26ai]$

And the Oracle 26ai Client is now installed.

Prepare target database

I will create a user on the target lab PDB, named TESTZ_TMR_003I, in order to establish a sqlplus connection and test the EXPIRE_TIME configuration.

I will create a user test01 and grant it the CONNECT role.

[oracle@svl-oat ~]$ echo $ORACLE_SID

CDB001I

[oracle@svl-oat ~]$ sqlplus / as sysdba

SQL*Plus: Release 19.0.0.0.0 - Production on Thu May 21 14:30:39 2026

Version 19.23.0.0.0

Copyright (c) 1982, 2023, Oracle. All rights reserved.

Connected to:

Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production

Version 19.23.0.0.0

SQL> show pdbs

CON_ID CON_NAME OPEN MODE RESTRICTED

---------- ------------------------------ ---------- ----------

2 PDB$SEED READ ONLY NO

3 TESTZ_APP_006I READ WRITE NO

4 RCLON_TMR_003I MOUNTED

5 RCLON_TMR_002I MOUNTED

6 RCLON_TMR_001I MOUNTED

7 CLONZ_TMR_002I MOUNTED

8 TESTZ_TMR_003I READ WRITE NO

10 TESTZ_APP_004I READ WRITE NO

11 CLONZ_APP_001I READ WRITE NO

12 RCLON_APP_003I READ WRITE NO

SQL> alter session set container=TESTZ_TMR_003I;

Session altered.

SQL> create user test01 identified by "test_expire";

User created.

SQL> grant connect to test01;

Grant succeeded.

SQL>

Test connection with Oracle 26ai Client

I will first set the ORACLE_HOME variable to the appropriate client directory.

[oracle@bastion client26ai]$ echo $ORACLE_HOME
/opt/oracle/client19c
[oracle@bastion client26ai]$ export ORACLE_HOME=/opt/oracle/client26ai
[oracle@bastion client26ai]$ echo $ORACLE_HOME
/opt/oracle/client26ai
[oracle@bastion client26ai]$

I will update the PATH variable so that the tnsping and sqlplus binaries come from the Oracle 26ai Client.

[oracle@bastion client26ai]$ echo $PATH
/usr/share/Modules/bin:/usr/local/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/opt/oracle/client19c/bin:/opt/oracle/sqlcl-24.1.0.087.0929//bin
[oracle@bastion client26ai]$ export PATH=/opt/oracle/client26ai/bin
[oracle@bastion client26ai]$ echo $PATH
/opt/oracle/client26ai/bin
[oracle@bastion client26ai]$

I will check that the appropriate tnsping and sqlplus binaries are picked up.

[oracle@bastion client26ai]$ which sqlplus
/opt/oracle/client26ai/bin/sqlplus
[oracle@bastion client26ai]$ which tnsping
/opt/oracle/client26ai/bin/tnsping

I checked that the connection to the target PDB is working.

[oracle@bastion ~]$ tnsping svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com
TNS Ping Utility for Linux: Version 23.26.1.0.0 - Production on 21-MAY-2026 16:28:56
Copyright (c) 1997, 2026, Oracle. All rights reserved.
Used parameter files:
/opt/oracle/client19c/network/admin/sqlnet.ora
Used EZCONNECT adapter to resolve the alias
Attempting to contact (DESCRIPTION=(CONNECT_DATA=(SERVICE_NAME=testz_tmr_003i.db.jewlab.oraclevcn.com))(ADDRESS=(PROTOCOL=tcp)(HOST=X.X.1.135)(PORT=1521)))
OK (0 msec)
[oracle@bastion ~]$

The TNS_ADMIN used is from the Oracle 19c client directory, which is absolutely not a problem.

[oracle@bastion ~]$ echo $TNS_ADMIN
/opt/oracle/client19c/network/admin
[oracle@bastion ~]$

Test sqlplus connection with Oracle 26ai Client

As I can see on the client side, I do not have any sqlplus connection running right now.

[opc@bastion ~]$ ss -nop | grep 1521
[opc@bastion ~]$

I will open a sqlplus connection.

[oracle@bastion client26ai]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com
SQL*Plus: Release 23.26.1.0.0 - Production on Thu May 21 16:34:23 2026
Version 23.26.1.0.0
Copyright (c) 1982, 2025, Oracle. All rights reserved.
Connected to:
Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production
Version 19.23.0.0.0
SQL>

I can see that I now have a sqlplus connection with no timer/keepalive.

[opc@bastion ~]$ ss -nop | grep 1521
tcp ESTAB 0 0 X.X.0.89:51404 X.X.1.135:1521
[opc@bastion ~]$

I will now configure Oracle Dead Connection Detection by setting the SQLNET.EXPIRE_TIME parameter in the client sqlnet.ora to 1 minute.

[oracle@bastion ~]$ cd $TNS_ADMIN
[oracle@bastion admin]$ /usr/bin/grep -i expire sqlnet.ora
[oracle@bastion admin]$
[oracle@bastion admin]$ /usr/bin/vi sqlnet.ora
[oracle@bastion admin]$ /usr/bin/grep -i expire sqlnet.ora
SQLNET.EXPIRE_TIME=1
[oracle@bastion admin]$

I will open a new sqlplus connection.

[oracle@bastion admin]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com
SQL*Plus: Release 23.26.1.0.0 - Production on Thu May 21 16:41:06 2026
Version 23.26.1.0.0
Copyright (c) 1982, 2025, Oracle. All rights reserved.
Last Successful login time: Thu May 21 2026 16:34:23 +02:00
Connected to:
Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production
Version 19.23.0.0.0
SQL>

I can now see that the connection has a keepalive timer, with 38 seconds remaining.

[opc@bastion ~]$ ss -nop | grep 1521
tcp ESTAB 0 0 X.X.0.89:62868 X.X.1.135:1521 timer:(keepalive,38sec,0)
[opc@bastion ~]$

Let’s configure EXPIRE_TIME with a value of 15 minutes.

[oracle@bastion admin]$ /usr/bin/vi sqlnet.ora
[oracle@bastion admin]$ /usr/bin/grep -i expire sqlnet.ora
SQLNET.EXPIRE_TIME=15

I open a new sqlplus connection.

[oracle@bastion admin]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com
SQL*Plus: Release 23.26.1.0.0 - Production on Thu May 21 16:43:25 2026
Version 23.26.1.0.0
Copyright (c) 1982, 2025, Oracle. All rights reserved.
Last Successful login time: Thu May 21 2026 16:42:29 +02:00
Connected to:
Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production
Version 19.23.0.0.0
SQL>

And the connection now has a keepalive timer with 14 minutes remaining.

[opc@bastion ~]$ ss -nop | grep 1521
tcp ESTAB 0 0 X.X.0.89:60630 X.X.1.135:1521 timer:(keepalive,14min,0)
[opc@bastion ~]$

So, all good: the Oracle 26ai Client supports Dead Connection Detection with the SQLNET.EXPIRE_TIME parameter.

Let’s test it with Oracle 19c Client

We can easily confirm again that the Oracle 19c Client does not support Dead Connection Detection on the client side.

Let’s move back to the Oracle 19c Client home.

[oracle@bastion admin]$ export PATH=/opt/oracle/client19c/bin
[oracle@bastion admin]$ which sqlplus
/opt/oracle/client19c/bin/sqlplus

Open a sqlplus connection.

[oracle@bastion admin]$ sqlplus test01/test_expire@svl-oat:1521/testz_tmr_003i.db.jewlab.oraclevcn.com
SQL*Plus: Release 19.0.0.0.0 - Production on Thu May 21 16:48:09 2026
Version 19.3.0.0.0
Copyright (c) 1982, 2019, Oracle. All rights reserved.
Last Successful login time: Thu May 21 2026 16:46:07 +02:00
Connected to:
Oracle Database 19c EE High Perf Release 19.0.0.0.0 - Production
Version 19.23.0.0.0
SQL>

And check the connection configuration.

[opc@bastion ~]$ ss -nop | grep 1521
tcp ESTAB 0 0 X.X.0.89:64078 X.X.1.135:1521
[opc@bastion ~]$

There is no timer/keepalive handled with Oracle 19c Client.
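The manual check done with ss in these tests can be scripted. The following Python sketch parses lines in the format shown in this post and reports the remaining keepalive timer, if any. The function name and the regular expression are assumptions based only on the sample lines above; other ss versions may format the timer field differently.

```python
import re
from typing import Optional

# Matches the timer field as printed in the samples, e.g. timer:(keepalive,38sec,0)
KEEPALIVE_RE = re.compile(r"timer:\(keepalive,([^,]+),\d+\)")


def keepalive_remaining(ss_line: str) -> Optional[str]:
    """Return the remaining keepalive time reported by ss (e.g. '38sec'), or None if absent."""
    match = KEEPALIVE_RE.search(ss_line)
    return match.group(1) if match else None


if __name__ == "__main__":
    samples = [
        # Line observed with the 26ai client and SQLNET.EXPIRE_TIME=1
        "tcp ESTAB 0 0 X.X.0.89:62868 X.X.1.135:1521 timer:(keepalive,38sec,0)",
        # Line observed with the 19c client
        "tcp ESTAB 0 0 X.X.0.89:64078 X.X.1.135:1521",
    ]
    for line in samples:
        print(keepalive_remaining(line))
```

A connection for which the helper returns None has no keepalive timer, which is the situation that left the client sockets hanging in the first place.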

To wrap up…

The Oracle Database 26ai Client now supports Dead Connection Detection on the client side. In our customer's configuration, this lets the client check every X minutes (the configured EXPIRE_TIME value) for dead connections. So if the CMAN and listener connections have already died and, for any reason, the Network Load Balancer is still holding the connection with the client, the client will close the connection after X minutes.

The article Oracle Database 26ai Client and SQLNET.EXPIRE_TIME first appeared on dbi Blog.

Azure Bootcamp Switzerland 2026 edition

Today I attended the Azure Bootcamp Switzerland event in Bern. Here is a summary of what I saw and what I learned in the sessions.

The opening keynote was about Azure Sovereign Architecture where the presenter gave us an update on the current Azure/Microsoft projects. We also had an explanation on how sovereignty works.

Then I joined a session titled “Time Bombs In Entra ID – How Well Are Your Entra ID Apps Managed?”. The speaker explained to us how Azure App registration and service principal really work. He also gave us some advice on best practices when using this kind of Azure/Entra resource.

Before the lunch break I joined a session on how some architects resolved the “multiple teams needed to deploy something” problem. They automated the deployment with CI/CD and Terragrunt. They did a demo on how they use their code and how they make infrastructure changes with it.

After lunch, I chose a more network-oriented presentation. The topic was how to get rid of VPNs by using an Azure service called Global Secure Access. Even though I’m not convinced that we can get rid of VPNs, this option could be interesting for heavily Microsoft-based infrastructures, as it uses the Microsoft backbone for all the network routing.

The last two sessions I attended were on Azure Policy. The first was about using code to deploy Azure policies, as it’s a better way to keep them identical across multiple environments and it is also faster than using the Azure interface, which is slow. The second was about using conditional access as a safer alternative for securing Azure tenants with policies. This method is quite interesting but requires a paid version of Entra to be activated.

Finally, for the closing keynote, we had a presentation about an application developed by a Swiss company that helps emergency services coordinate. It allows call centers to locate and contact the rescuers closest to the scene and organize their deployment.

Once again, I’m glad that I could attend this event. I learned quite a lot and could also refresh my memory on some other topics. The sessions are long enough to cover a topic in detail, and the speakers always perform well.

Reduce downtime when refreshing your non-production databases using Multitenant

You probably refresh your non-production Oracle databases with production data from time to time or on a regular basis. Without Multitenant, the most common procedure to do this refresh is a DUPLICATE FROM BACKUP with RMAN. The drawback is the unavailability of the database being refreshed during the DUPLICATE. You first need to remove the old version of the database, then start the DUPLICATE and wait until it’s finished. If you have Enterprise Edition and enough CPU, you can lower the time needed for the refresh by allocating a sufficient number of channels. But with a small number of CPUs (which is normal for a non-production server), or with Standard Edition (single-channel RMAN operations only), a multi-TB database refresh can take several hours to complete. And if it fails for some reason, you need to retry the refresh, extending the downtime even more.

Multitenant brought new possibilities for refreshing a database, and my favorite one is a CREATE PLUGGABLE DATABASE from a database link (DB link). It’s dead easy compared to a DUPLICATE FROM BACKUP on a non-CDB database. And you can lower the downtime to the very minimum. Here is how I did this for several projects.

How to lower the downtime to the minimum when refreshing a non-production PDB?

You probably know that one of the advantages of a pluggable database is how easy it is to change its name. You just need to stop the PDB, rename it, and restart it. You can then use this technique to refresh a PDB under a temporary name and keep the current PDB available during the refresh. Once the refresh is finished, drop or rename the current PDB, and rename the newest one to its target name. Even if your refresh takes hours, your downtime is limited to a couple of seconds or minutes.

Step 1: add an additional grant for source PDB’s administrator

The PDB administrator on the source database must have the CREATE PLUGGABLE DATABASE privilege:

ssh oracle@p01-srv-ora

. oraenv <<< P19PMT

sqlplus / as sysdba

Alter session set container=P19_ERP;

grant create pluggable database to SYSERP;

exit

The target server must have a TNS entry to the source PDB (production). If your source PDB and its container are protected by a Data Guard configuration, don’t forget to add both addresses:

ssh root@t01-srv-ora

su - oracle

. oraenv <<< D19PMT

vi $ORACLE_HOME/network/admin/tnsnames.ora

…

P19_ERP =

(DESCRIPTION =

(LOAD_BALANCE = OFF)

(FAILOVER = ON)

(ADDRESS_LIST =

(ADDRESS = (PROTOCOL = TCP)(HOST = p01-srv-ora)(PORT = 1521))

(ADDRESS = (PROTOCOL = TCP)(HOST = p02-srv-ora)(PORT = 1521))

)

(CONNECT_DATA =

(SERVER = DEDICATED)

(SERVICE_NAME = P19_ERP)

)

)

tnsping P19_ERP

…

A DB link is required on the target container:

ssh root@t01-srv-ora

su - oracle

. oraenv <<< D19PMT

sqlplus / as sysdba

CREATE DATABASE LINK P19_ERP CONNECT TO SYSERP IDENTIFIED BY "*************" USING 'P19_ERP';

select count(*) from dual@P19_ERP;

COUNT(*)

----------

1

exit

Basically, the refresh consists of 5 main tasks:

- create a new PDB with a temporary name _NEW on the target container from the source PDB

- start the new PDB for its correct registration in the container

- run an optional script for modifying production data (masking, disabling tasks, …)

- stop and rename the current PDB to _OLD, then start it again

- stop and rename the new PDB to its target name and start it again

Task 2 is needed because you cannot rename a PDB immediately after creation. You first need to open it and then close it before you can change its name.

Let’s create 2 scripts on the target server, one shell script and one SQL script:

vi /home/oracle/scripts/refresh_D19_ERP.sh

#!/bin/bash

export ORACLE_SID=D19PMT

export REFRESH_LOG=/home/oracle/scripts/log/refresh_D19_ERP_$(date +%d_%m_%Y-%H_%M_%S).log

export ORACLE_HOME=$(awk -F ':' -v sid="$ORACLE_SID" '$1 == sid {print $2}' /etc/oratab)

date >> $REFRESH_LOG

$ORACLE_HOME/bin/sqlplus / as sysdba @/home/oracle/scripts/refresh_D19_ERP.sql >> $REFRESH_LOG

date >> $REFRESH_LOG

exit 0

vi /home/oracle/scripts/refresh_D19_ERP.sql

set timing on

show pdbs

alter pluggable database D19_ERP_OLD close immediate;

Drop pluggable database D19_ERP_OLD including datafiles;

show pdbs

create pluggable database D19_ERP_NEW from P19_ERP@P19_ERP ;

show pdbs

alter pluggable database D19_ERP_NEW open;

show pdbs

alter session set container=D19_ERP_NEW;

@/home/oracle/scripts/post_refresh_D19_ERP.sql

alter session set container=CDB$ROOT;

alter pluggable database D19_ERP close immediate;

alter pluggable database D19_ERP rename global_name to D19_ERP_OLD;

alter pluggable database D19_ERP_OLD open;

show pdbs

alter pluggable database D19_ERP_NEW close immediate;

alter pluggable database D19_ERP_NEW rename global_name to D19_ERP;

Alter pluggable database D19_ERP open;

Alter pluggable database D19_ERP save state;

show pdbs

exit

It does the job, although these are very basic scripts: further controls could be added to trap errors, manage services, and so on.
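As one example of such an additional control, the following Python sketch scans a refresh log for Oracle error codes, so that a wrapper could report a failure instead of always exiting with 0. It is an illustration only: the function name, the optional list of ignored codes, and the sample log lines are assumptions, not part of the scripts above.

```python
import re
from typing import Iterable, List

# Oracle server errors look like ORA-65011, SQL*Plus errors like SP2-0640.
ERROR_RE = re.compile(r"\b(?:ORA-\d{5}|SP2-\d{4})\b")


def find_errors(log_lines: Iterable[str], ignored: Iterable[str] = ()) -> List[str]:
    """Return the error codes found in the log, in order, skipping those listed in ignored."""
    skip = set(ignored)
    found = []
    for line in log_lines:
        for code in ERROR_RE.findall(line):
            if code not in skip:
                found.append(code)
    return found


if __name__ == "__main__":
    # Hypothetical log content, for illustration only.
    sample_log = [
        "Pluggable database altered.",
        "ORA-65011: Pluggable database D19_ERP_OLD does not exist.",
    ]
    print(find_errors(sample_log))
    # A first run has no _OLD PDB to drop, so that code may be acceptable.
    print(find_errors(sample_log, ignored={"ORA-65011"}))
```

Keeping the ignore list explicit avoids hiding unexpected errors while still tolerating the ones you know are harmless in your environment.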

Step 5 : schedule the refresh

Scheduling can be done through the crontab, for example every evening at 11:30 PM:

crontab -l | grep D19_ERP | grep refresh

30 23 * * * sh /home/oracle/scripts/refresh_D19_ERP.sh

This is definitely a smart solution as long as you have enough disk space for 2 copies of the PDB. It has proven quite reliable and ticked all the boxes wherever I deployed these scripts.

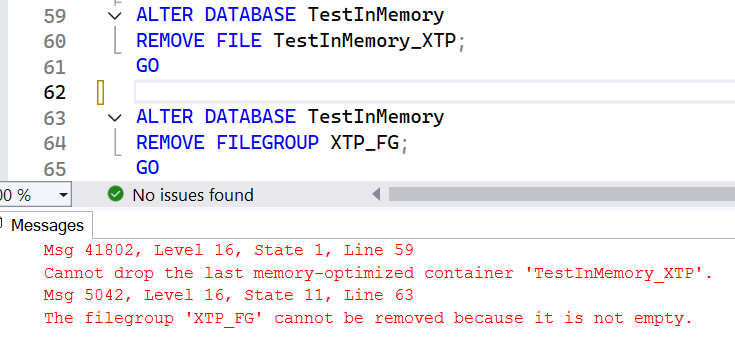

OGG-08502 Path not found error from OGG Receiver Service

Recently, after a successful migration to GoldenGate 26ai, a customer complained that he was seeing many occurrences of the following error in the ggserr.log file of a GoldenGate deployment (I replaced the names for the purpose of this blog).

2026-05-18T14:32:35.948+0200 ERROR OGG-08502. Oracle GoldenGate Receiver Service for Oracle: Path path21 not found.

More precisely, in that case, path21 is a distribution path sending trail files from deployment ogg_test_02 to ogg_test_01. And the error shown above appeared in the log file of the ogg_test_01 deployment.

While this error did not seem to indicate any operational issue in the replication, after checking on multiple environments, I confirmed that it appears everywhere. So what exactly is happening?

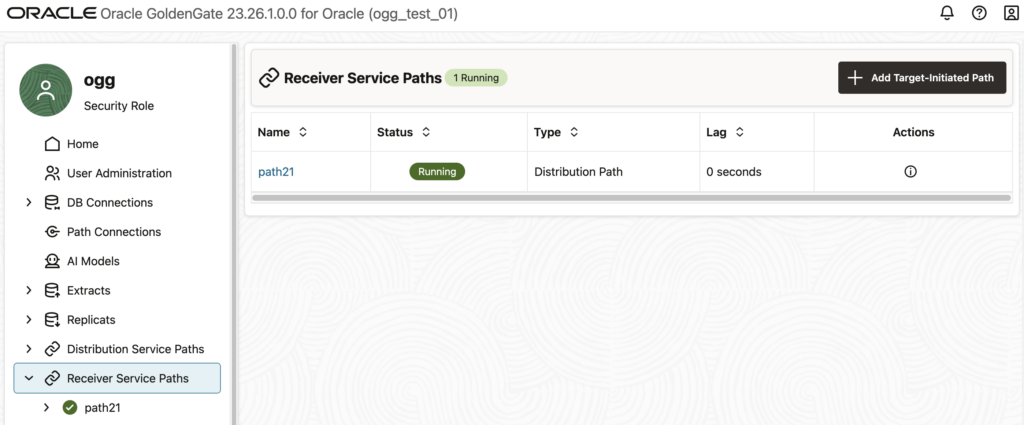

If you get this error and do not know where it comes from, log in to the web UI of the affected deployment, and go to the Receiver Service Paths tab. You should see a list of the distribution paths that are connecting to your deployments. The example below shows the path21 that is mentioned in the error.

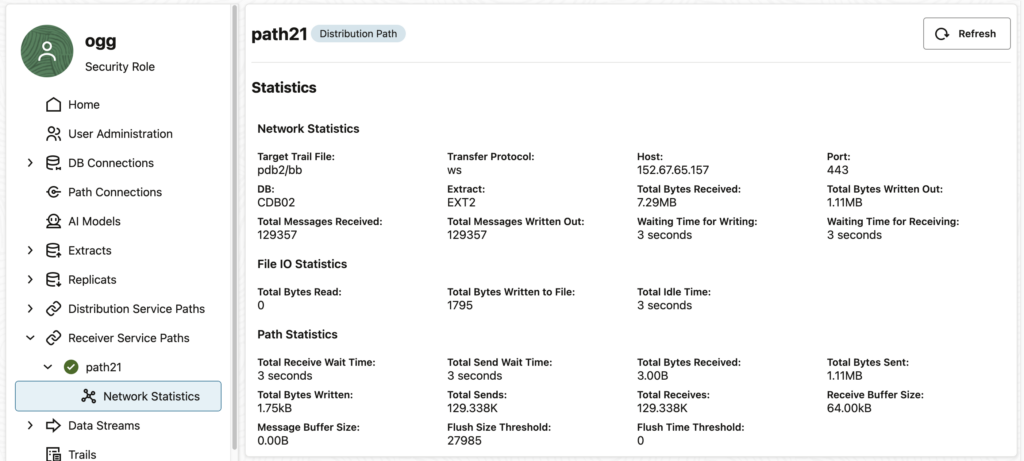

If you click on this path… nothing happens! And by “nothing”, I mean “nothing abnormal”. In fact, the statistics are properly displayed (see below), and there is no error shown to the user. However, if you look at your ggserr.log file, you will see that the error given above appears.

At first glance, this might not seem like a huge issue, because if you don’t click on the receiver path, you will not get the error. However, in the log file of the customer, the error appeared regularly. Every minute, to be precise.

Why do I get this error even when I’m not accessing the web UI?

Luckily, when debugging this issue, I started by putting the target in a blackout in Oracle Enterprise Manager. To my surprise, the error was gone during the blackout and reappeared right after.

In this case, the Enterprise Manager Plug-in for Oracle GoldenGate is monitoring the status of the deployment every minute and generates the error in the process.

When looking at the targets in the OEM, there is no error. Again, no operational impact.

Does it depend on the way you create the distribution path?

GoldenGate offers multiple ways of managing deployments: REST API, adminclient, or the web UI. Unfortunately, some bugs (and some features…) mean that you should avoid managing some objects with some of these tools (read why you shouldn’t create profiles through the adminclient, for instance).

In this specific case, all distribution path creation methods lead to the same error in the log file. It doesn’t matter whether you create the distribution path with the adminclient, the REST API or the web UI. They will all lead to this error.

Let’s dig a bit to see what is happening behind the scenes. By looking at the restapi.log file (read my blog on how to analyze REST API logs efficiently), we can see the full error:

2026-05-18 09:08:58.402+0000 ERROR|RestAPI.recvsrvr | Request #9: {

"context": {

"httpContextKey": 140097141801744,

"verbId": 2,

"verb": "GET",

"originalVerb": "GET",

"uri": "/services/v2/targets/path21",

"protocol": "http",

"headers": {

...

},

"host": "vmogg",

"securityEnabled": false,

"authorization": {

"authUserName": "ogg",

"authUserRole": "Security",

"authMode": "Cookie"

},

"requestId": 8,

"uriTemplate": "/services/{version}/targets/{path}",

"catalogUriTemplate": "/services/{version}/metadata-catalog/path"

},

"isScaRequest": true,

"content": null,

"parameters": {

"uri": {

"path": "path21",

"version": "v2"

},

"query": {

"WindowRef": "%2Fservices%2Fv2%2Fcontent%2F%23%2FrecvsrvrPaths%2Fpath21%2FpathNetworkStats"

}

}

}

Response: {

"context": {

...

},

"isScaResponse": true,

"content": {

"$schema": "api:standardResponse",

"links": [

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21",

"mediaType": "application/json"

},

{

"rel": "self",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21",

"mediaType": "application/json"

}

],

"messages": [

{

"$schema": "ogg:message",

"title": "Path path21 not found",

"code": "OGG-08502",

"severity": "ERROR",

"issued": "2026-05-18T09:08:58Z",

"type": "https://www.rfc-editor.org/rfc/rfc9110.html#name-status-codes"

}

]

}

}

The issue comes from the following endpoint: /services/v2/targets/path21. It is described in the documentation under Retrieve an existing Oracle GoldenGate Collector Path. But looking at another endpoint described in Get a list of distribution paths, we get the following response:

{

"$schema": "api:standardResponse",

"links": [

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",

"mediaType": "text/html"

},

{

"rel": "self",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",

"mediaType": "text/html"

},

{

"rel": "describedby",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/metadata-catalog/targets",

"mediaType": "application/schema+json"

}

],

"messages": [],

"response": {

"$schema": "ogg:collection",

"items": [

{

"links": [

{

"rel": "parent",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",

"mediaType": "application/json"

},

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",

"mediaType": "application/json"

}

],

"$schema": "ogg:collectionItem",

"name": "path21",

"status": "running",

"targetInitiated": false

}

]

}

}

Here, we see that the endpoint associated with the path21 object is not recvsrvr/v2/targets/path21 but recvsrvr/v2/targets/path21_ogg26dist2_7811. And querying this second endpoint does not return an error.
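When checking this by script rather than by eye, the internal identifier can be read from the canonical link of each item in the list response. The Python sketch below assumes a response shaped like the one shown above; the function name is hypothetical and no other fields of the API are assumed.

```python
from typing import Dict


def canonical_path_ids(list_response: dict) -> Dict[str, str]:
    """Map each path name to the last segment of its canonical href."""
    result = {}
    for item in list_response.get("response", {}).get("items", []):
        for link in item.get("links", []):
            if link.get("rel") == "canonical":
                result[item["name"]] = link["href"].rstrip("/").rsplit("/", 1)[-1]
    return result


if __name__ == "__main__":
    # Trimmed copy of the list response shown above.
    sample = {
        "response": {
            "items": [
                {
                    "links": [
                        {
                            "rel": "parent",
                            "href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets",
                        },
                        {
                            "rel": "canonical",
                            "href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",
                        },
                    ],
                    "name": "path21",
                }
            ]
        }
    }
    print(canonical_path_ids(sample))
```

Comparing the displayed name with the identifier returned here makes the mismatch behind the error visible for every receiver path at once.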

{

"$schema": "api:standardResponse",

"links": [

{

"rel": "canonical",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",

"mediaType": "text/html"

},

{

"rel": "self",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/targets/path21_ogg26dist2_7811",

"mediaType": "text/html"

},

{

"rel": "describedby",

"href": "https://vmogg/services/ogg_test_01/recvsrvr/v2/metadata-catalog/path",

"mediaType": "application/schema+json"

}

],

"messages": [],

"response": {

"name": "path21",

"status": "running",

"$schema": "ogg:distPath",

"source": {

"uri": "trail://localhost:7811/services/v2/sources?trail=pdb2/bb"

},

"target": {

"$schema": "ogg:distPathEndpoint",

"uri": "ws://vmogg/services/v2/targets?trail=pdb2/bb"

},

"options": {

"network": {

"appOptions": {

"appFlushBytes": 27985,

"appFlushSecs": 1

},

"socketOptions": {

"tcpOptions": {

"ipDscp": "DEFAULT",

"ipTos": "DEFAULT",

"tcpNoDelay": false,

"tcpQuickAck": true,

"tcpCork": false,

"tcpSndBuf": 16384,

"tcpRcvBuf": 131072

}

}

}

}

}

}

The problem is that no one ever decided that path21 should be referred to as path21_ogg26dist2_7811 internally. And it looks like GoldenGate does not know about it either… So until the bug is corrected, you will have to filter this OGG-08502 Path not found error out of the ggserr.log file if you use it for monitoring.
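If ggserr.log feeds a monitoring script, the filter can be as simple as the following Python sketch. It drops only lines that carry the OGG-08502 code together with the Receiver Service “Path … not found” text, as in the sample at the top of this post. The function names are assumptions, and the pattern should be adapted to your own log format.

```python
import re
from typing import Iterable, Iterator

# Matches the message format shown in this post:
# "... ERROR OGG-08502. Oracle GoldenGate Receiver Service for Oracle: Path path21 not found."
NOISE_RE = re.compile(r"OGG-08502\b.*Receiver Service.*: Path \S+ not found")


def is_known_noise(line: str) -> bool:
    """Return True for the OGG-08502 'Path not found' lines described in this post."""
    return NOISE_RE.search(line) is not None


def filter_ggserr(lines: Iterable[str]) -> Iterator[str]:
    """Yield every log line except the known OGG-08502 'Path not found' noise."""
    return (line for line in lines if not is_known_noise(line))
```

Matching on the full message rather than on the error code alone keeps any other OGG-08502 message visible to the monitoring.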

What being an external consultant really changes

When people think about consultants, they usually focus on expertise. “They bring experience, frameworks, and best practices.”

That’s true, of course. However, that is not the most impactful aspect of the role.

The real shift happens somewhere less visible: positioning. As an outsider, you don’t just join a team.

You become something different. Over time, I’ve come to think of it as operating within a “shadow team.”

This invisible layer changes how you navigate politics, truth, and influence.

Let’s unpack that.

As an employee, you’re clearly part of the organization.

However, when you’re an external consultant, it’s a different story.

You sit inside delivery teams while remaining outside the organization’s long-term structure. This dual positioning creates what I call a shadow team.

You collaborate closely with internal stakeholders, influence decisions without owning them, and observe dynamics that others are too immersed in to see.

You’re close enough to matter, yet distant enough to stay objective.

This reshapes everything.

Every organization has internal politics, including priorities, power structures, historical tensions, and unwritten rules. The larger the organization, the more politics there are.

Employees must live within that system.

Consultants, on the other hand, can often see the system more clearly because they aren’t fully bound by it.

This doesn’t mean you’re outside of politics, though.

It means:

You can identify misalignments more quickly, notice when decisions are driven by structure rather than logic, and spot friction between teams that others consider “normal.”

But here’s the key difference:

- You are less constrained by long-term consequences.

- An employee may avoid challenging a decision due to its potential impact on their career.

- However, a consultant can raise the concern because their role is to add clarity, not preserve equilibrium.

- Still, this doesn’t mean ignoring politics. It means navigating them consciously without being controlled by them.

One of the most powerful—and most fragile—assets of being an external consultant lies in the neutrality that people attribute to you.

You are not:

- Competing for a promotion

- Defending a department

- Protecting past decisions

This creates a rare opportunity. You can become a trusted bridge between stakeholders.

When done right, people will:

- Share concerns they wouldn’t voice internally

- Ask for your opinion as a “safe” perspective

- Use you to validate or challenge ideas

However, neutrality is not automatic: it must be earned, and it can easily be lost.

You lose it when:

- You align too strongly with one stakeholder

- You start defending internal logic instead of questioning it

- You behave like an insider too quickly

The best consultants maintain a delicate balance:

They are close enough to build trust and distant enough to stay credible.

Truth vs. Diplomacy: walking the tightrope

This is where the role becomes truly challenging.

As a consultant, you are often expected to:

- Tell the truth

- Challenge assumptions

- Highlight risks

However, you are also expected to:

- Maintain relationships

- Respect stakeholders

- Keep the project moving forward

These two expectations often conflict with each other.

The naive approach: “Just be brutally honest.”

This approach quickly fails. Brutality destroys trust.

The safe approach: “Say what people want to hear.”

This makes you irrelevant.

The real skill is delivering truth in a way that can be heard.

That means:

- Frame issues in terms of impact, not fault.

- Ask questions instead of making accusations.

- Adapt your message to your audience.

For example, rather than saying “This process isn’t working at all,” a more measured approach might be: “I see a few risks associated with this process. Could we go over them together?”

The observation is the same.

However, the outcome is different.

Being a consultant isn’t just about knowledge. It’s also about positioning. You have a clearer view, speak more freely, and connect across sides.

However, our profession is based on a paradox. We must be objective enough to provide sound advice, yet also be fully committed to the task at hand. Additionally, we must offer honest feedback without hurting the client’s feelings or losing their trust.

At dbi services, we’re passionate about striking that delicate balance, whether the subject is ECM or any other area of our expertise. Learn more about us here.

The post What being an external consultant really changes first appeared on dbi Blog.

Install and configure OEM plug-in for GoldenGate

If you are licensed for the GoldenGate Management Pack, using the Enterprise Manager plug-in for GoldenGate improves monitoring and management of your deployments. And after migrating to the Microservices Architecture, you should definitely update your plug-in and rediscover all targets. Let’s see how to do all that here.

In this blog, I will use the latest version of the Enterprise Manager (24ai) and monitor GoldenGate 26ai deployments. The overall workflow is the same for other versions of the Enterprise Manager and GoldenGate, provided OGG is in the Microservices Architecture.

Here are the main steps to monitor GoldenGate targets from the Enterprise Manager:

- Update the catalog in the Enterprise Manager

- Deploy the plug-in on the management server

- Deploy the plug-in on the agent

- Configure the discovery module

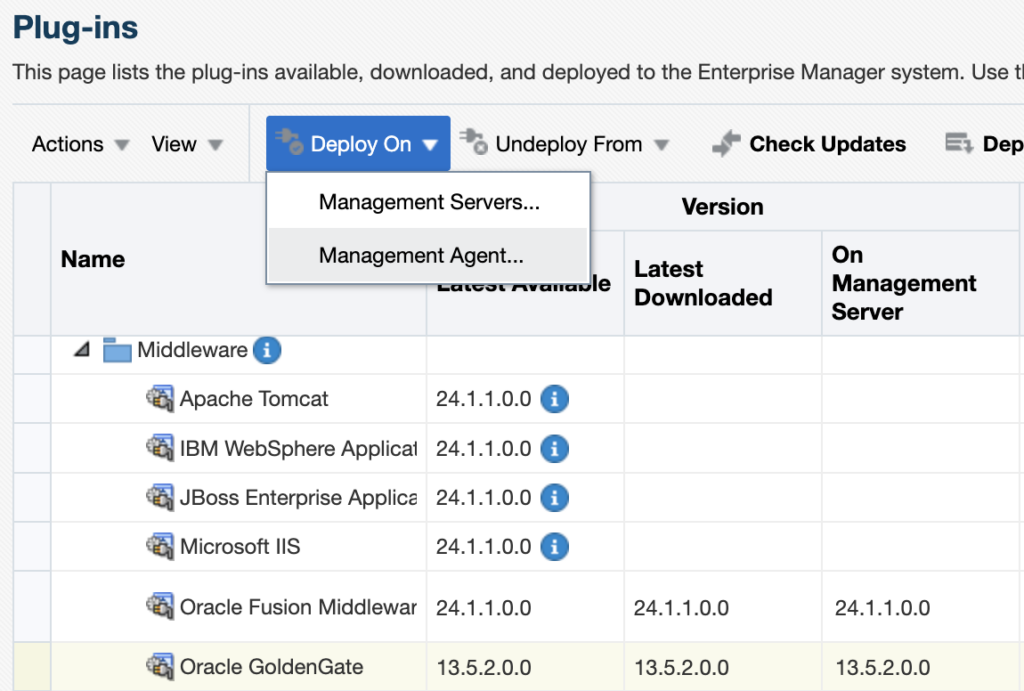

- Promote the new targets

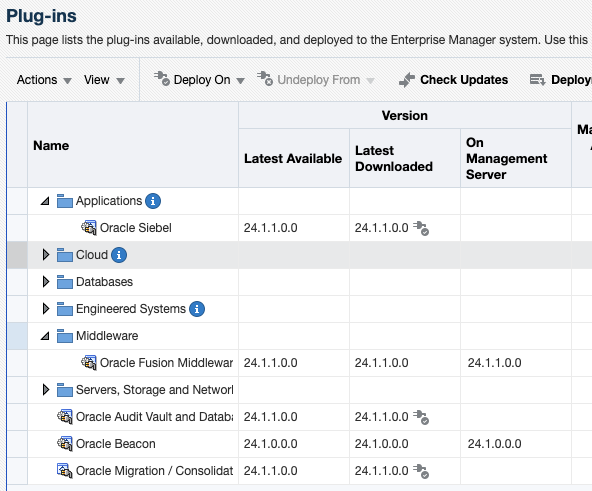

Before attempting to install the plug-in, make sure it is not already installed in your environment. To check this, go to Setup > Extensibility > Plug-ins, and expand the Middleware section. If you do not see any line named Oracle GoldenGate, it means the plug-in is not installed yet.

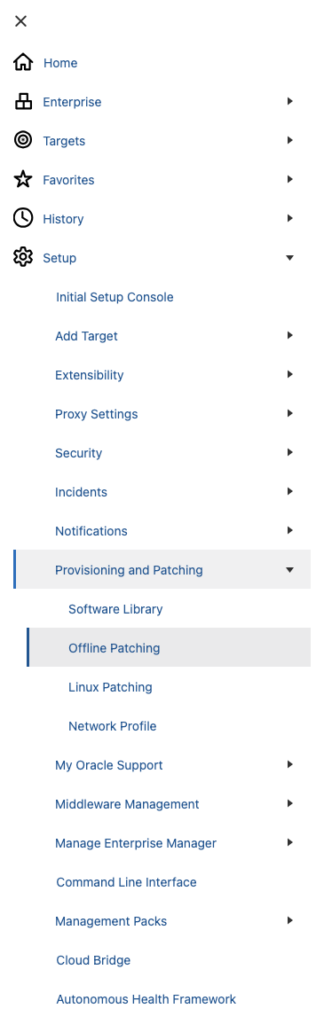

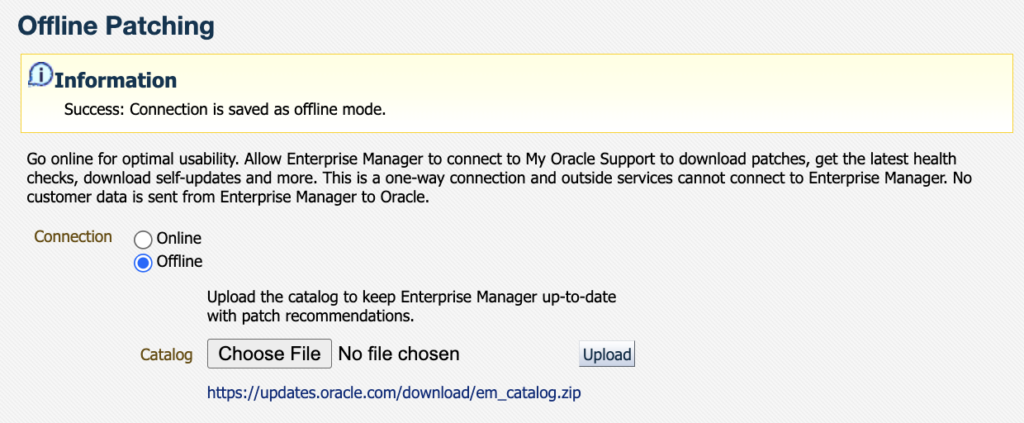

Since most OEM environments do not have direct access to Oracle Support, we’ll download the plug-in in offline mode. To do so, go to the Setup > Provisioning and Patching > Offline Patching section.

Once in the Offline Patching section, make sure Offline is selected for the connection and download the catalog file as instructed from an environment with access to the Oracle support website. Transfer it to where you have access to the OEM UI, and upload it.

Once the catalog is uploaded, you should see the following information message.

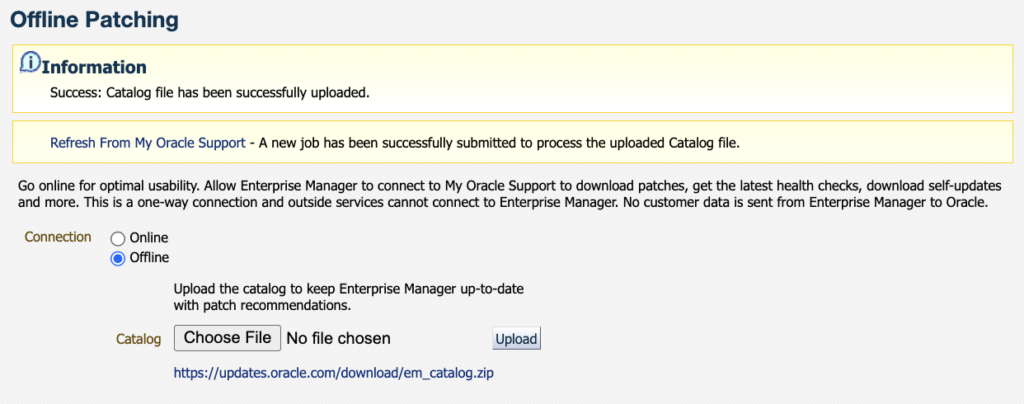

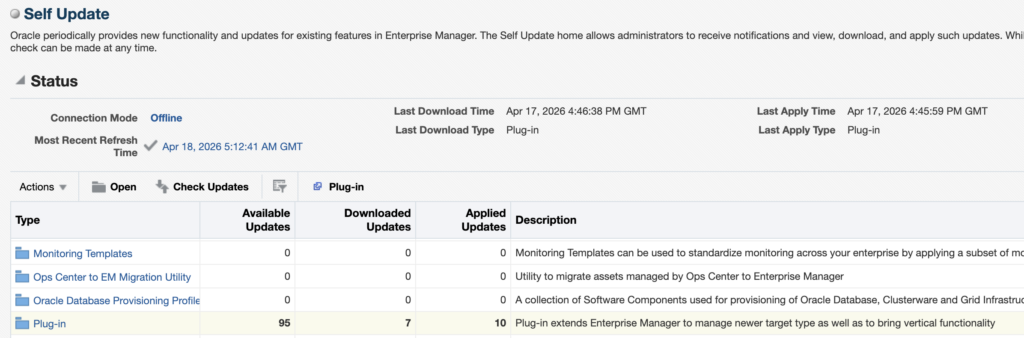

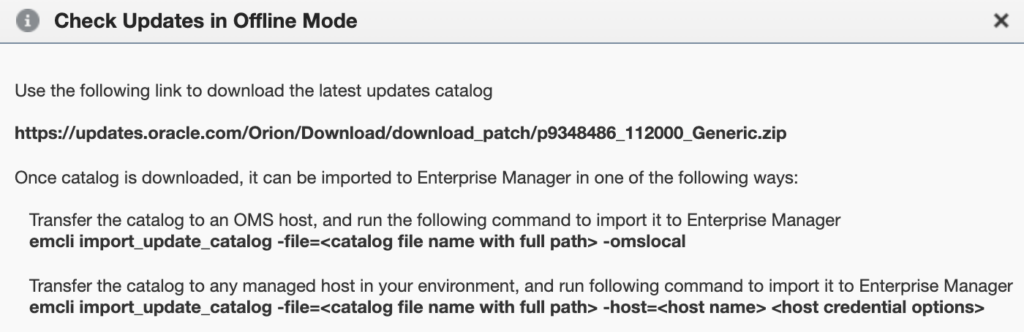

Then, go to Setup > Extensibility > Self Update and click on Check Updates.

You should see the following pop-up appear, with a link from where you will be able to download the OEM Self Update catalog file. For reference, the one I had when writing this blog was the following: https://updates.oracle.com/Orion/Download/download_patch/p9348486_112000_Generic.zip

As instructed, transfer this patch to the OMS host and import it with emcli and the import_update_catalog action. You can also import it from another managed host. It should take around twenty seconds to import everything.

oracle@oem24:~/ [oem24] emcli import_update_catalog -file=/tmp/p9348486_112000_Generic.zip -omslocal

Processing catalog for Diagnostic Tools

Processing update: Diagnostic Tools - AHFFI 25.1.0.1.0 for Linux

Processing update: Diagnostic Tools - AHF 25.5.0.0.0 for HP

[...]

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of GoldenGate Microservices Architecture, in addition to the GoldenGate Classic Architecture

[...]

Successfully uploaded the Self Update catalog to Enterprise Manager. Use the Self Update Console to view and manage updates.

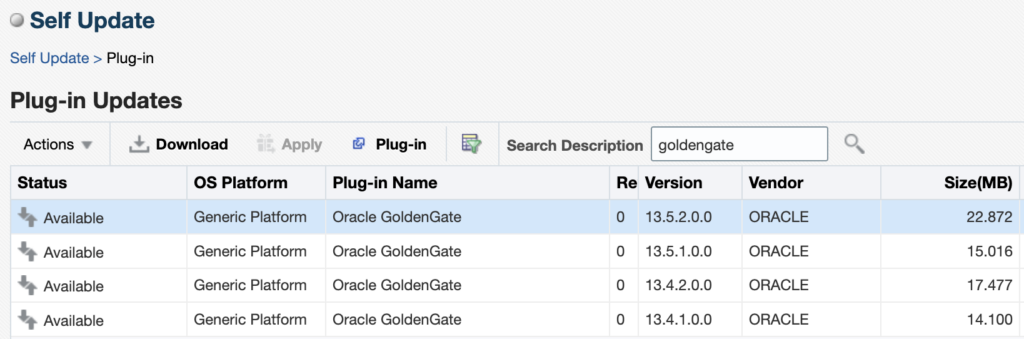

Time taken for import catalog is 17.289 seconds.

When this is done, go back to the Self Update page and click on the Plug-In section. You will see the different versions of the GoldenGate plug-in that are available. At the time of writing, the latest version of the plug-in is 13.5.2.0.0 (the latest patch released in January 2026, 13.5.2.0.6, will be a topic for another blog). Click on the latest version and then on Download.

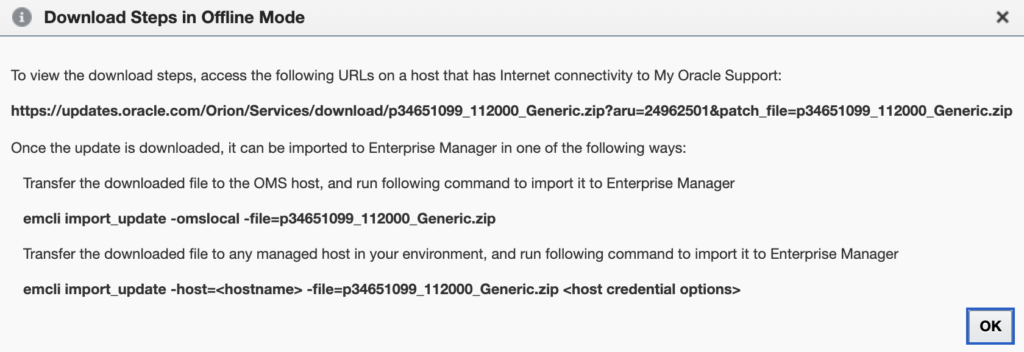

The following pop-up gives you the link from which you should download the plug-in update file. In my case, it was https://updates.oracle.com/Orion/Services/download/p34651099_112000_Generic.zip?aru=24962501&patch_file=p34651099_112000_Generic.zip.

Once the file is downloaded, import it in the same way as before with the catalog, but this time with the emcli import_update action.

oracle@oem24:~/ [oem24] emcli import_update -omslocal -file=/tmp/p34651099_112000_Generic.zip

Processing update: Plug-in - GoldenGate Plug-in now supports monitoring of Oracle GoldenGate Microservices, in addition to the Oracle GoldenGate Classic

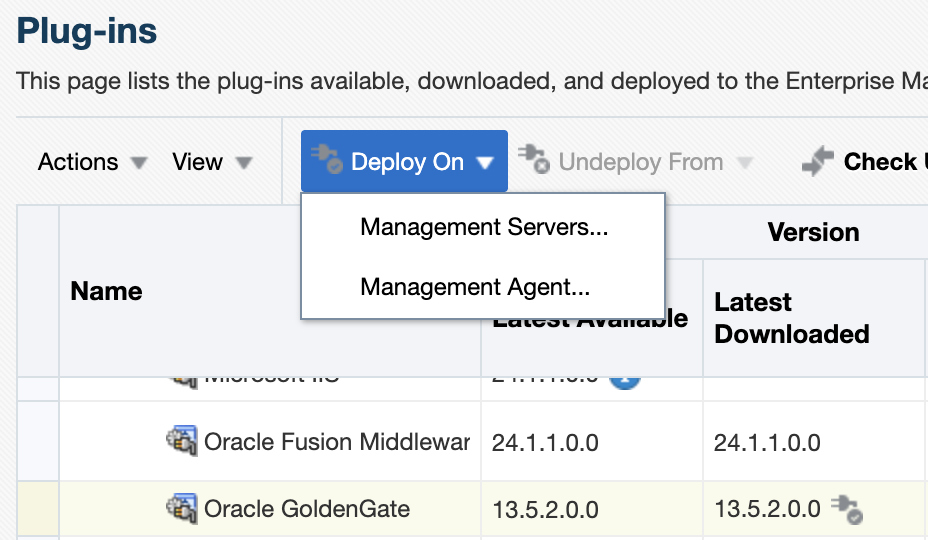

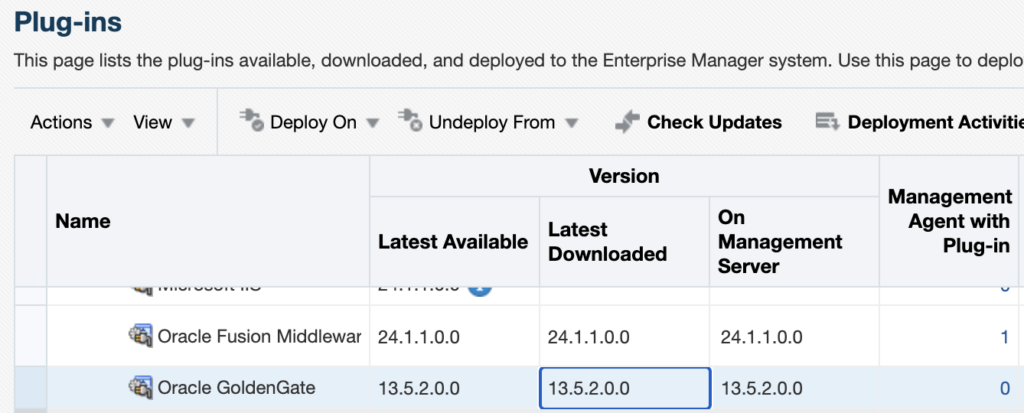

Successfully uploaded the update to Enterprise Manager. Use the Self Update Console to manage this update.

Once this is done, go back to the Setup > Extensibility > Plug-ins tab and expand the Middleware section. You should now see Oracle GoldenGate, and 13.5.2.0.0 as the downloaded version.

Warning: Deploying the plug-in on the management server will temporarily restart OMS components and briefly interrupt monitoring operations. To deploy the plug-in, you have two options:

- Deploying the plug-in from the web UI.

- Deploying the plug-in from the CLI.

From the web UI, click on the Oracle GoldenGate plug-in, then on Deploy On, and deploy the plug-in on the Management Servers.

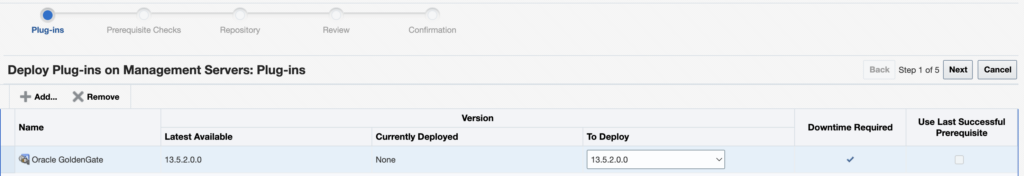

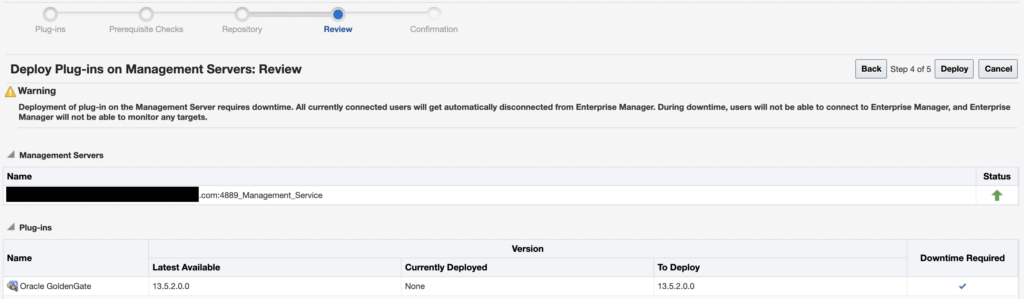

Make sure the correct version of the plug-in is chosen (13.5.2.0.0), and click on Next to run the prerequisite checks.

Once the checks are successfully completed, click on Next.

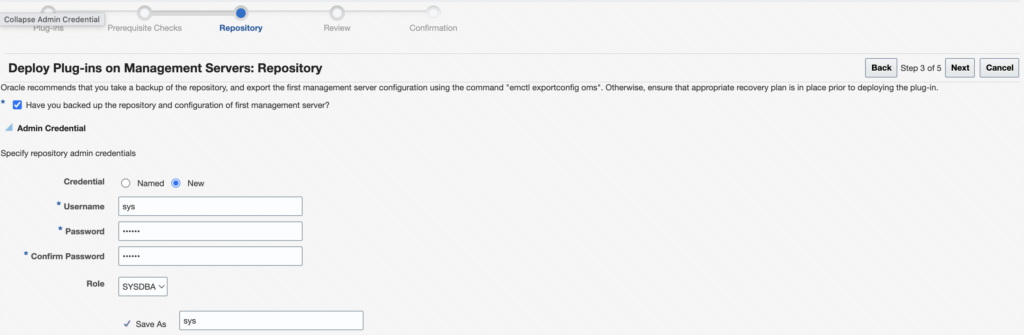

You should now select the repository credentials for the OEM. You should either use new credentials (if it’s a new environment) or use existing named credentials. Click on Next.

Once everything is done, click on Deploy.

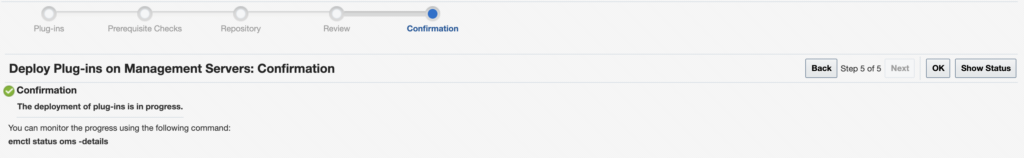

As instructed, you can check the status of the deployment with the emctl status oms -details command.

oracle@oem24:~/ [oem24] emctl status oms

Oracle Enterprise Manager 24ai Release 1

Copyright (c) 1996, 2024 Oracle Corporation. All rights reserved.

WebTier is Up

Oracle Management Server is Down

This is due to the following plug-ins being deployed on the management server or undeployed from it:

----------------------------------------

Plugin name: : Oracle GoldenGate

Version: : 13.5.2.0.0

ID: : oracle.fmw.gg

----------------------------------------

Alternatively, you can deploy the plug-in with the following command, using the oracle.fmw.gg ID for the plug-in and the latest 13.5.2.0.0 version.

emcli deploy_plugin_on_server -plugin="oracle.fmw.gg:13.5.2.0.0"

Once the plug-in is deployed on the Management Server, you can check again in the web UI: the latest version should be in the On Management Server section.

Deploy the plug-in on the agent


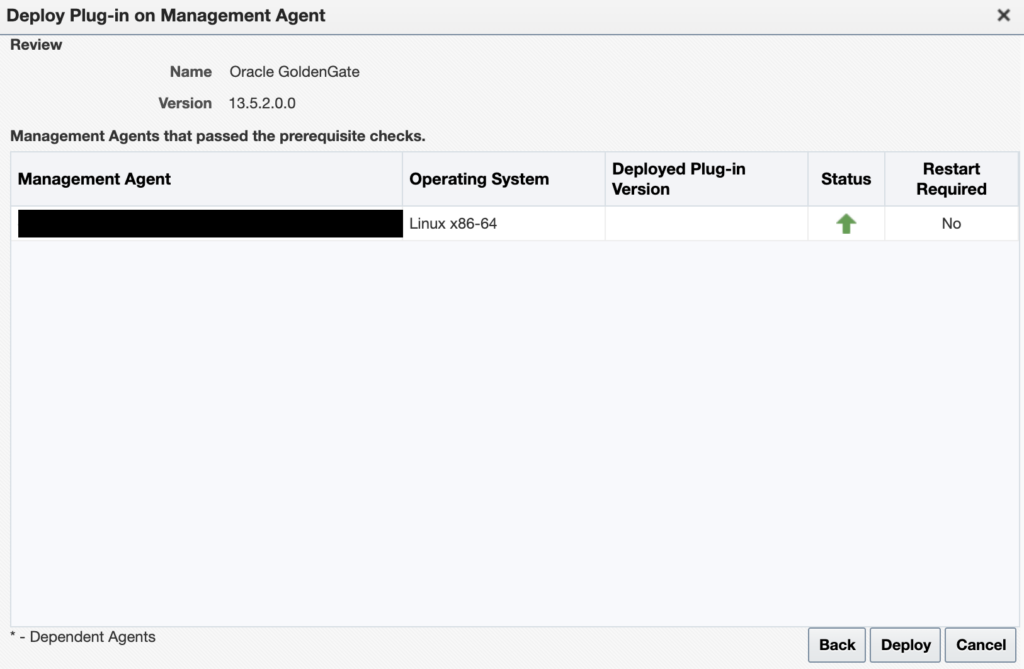

For each GoldenGate host where an OEM agent is running, deploy the plug-in. To do so, from the web UI, click on the Oracle GoldenGate plug-in, then on Deploy On, and select Management Agent.

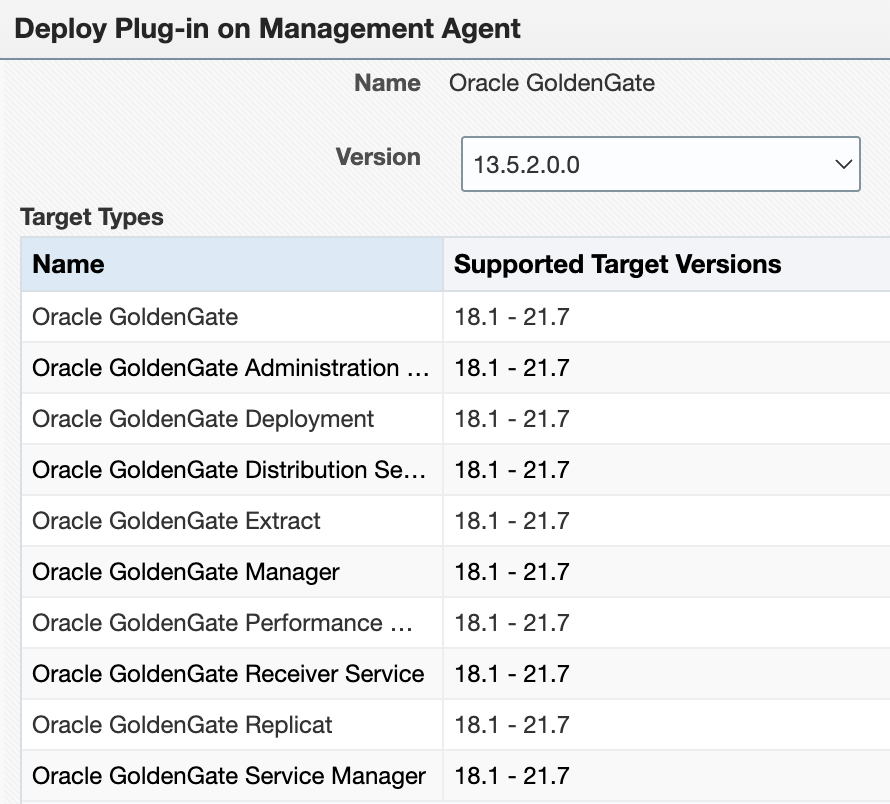

There is currently a bug with the Supported Target Versions. No matter your patch level, you will not see the latest versions of GoldenGate. Do not worry about this yet. Just make sure 13.5.2.0.0 is selected.

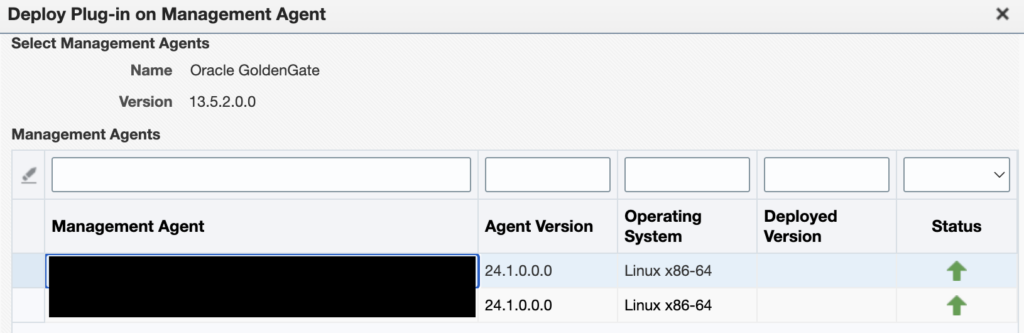

Then, select the agent on which you want to deploy the plug-in.

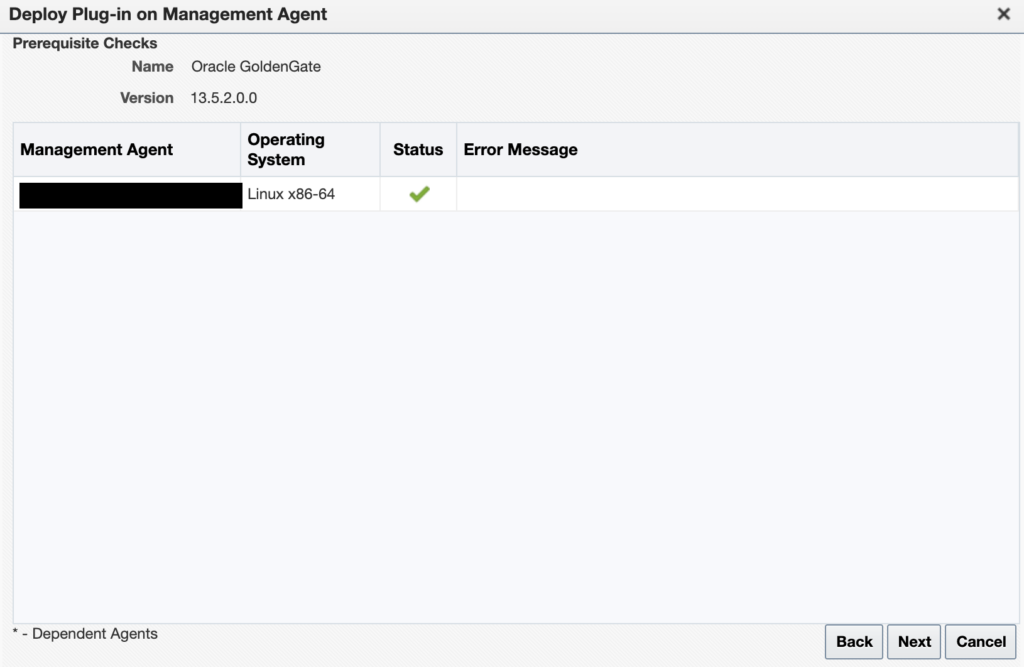

Let the prerequisite checks run…

And once everything is ready, click on Deploy.

You can check that everything is running properly with the emcli get_plugin_deployment_status command.

Configure GoldenGate monitoring in the Enterprise Manager


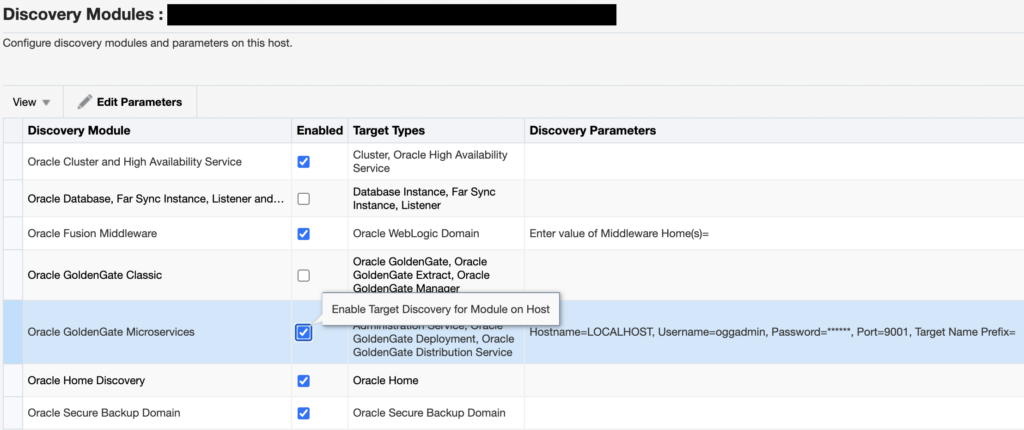

Once the plug-in is correctly deployed on the OMS host and on the GoldenGate host agent, you can configure the module. I will only cover the configuration for the Microservices Architecture. Go to the Setup > Add Target > Configure Auto Discovery tab.

Choose the correct agent host, and click on Discovery Modules.

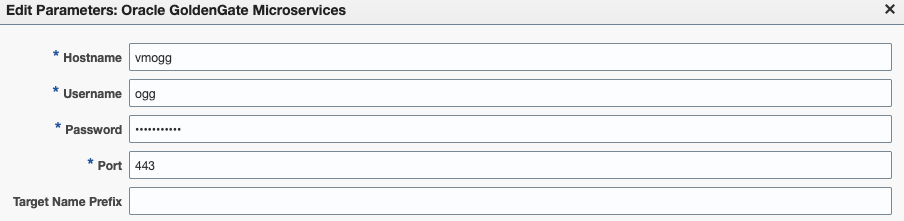

Enable the Oracle GoldenGate Microservices module, click on it, and then on Edit Parameters.

If you deployed GoldenGate with a reverse proxy, set up the plug-in as such.

If you deployed GoldenGate with a port for each service, enter the service manager port (7809, by default).

Warning: if your installation is secured with certificates, make sure to follow the instructions I gave in a blog to avoid EM-90000 errors when discovering new targets.

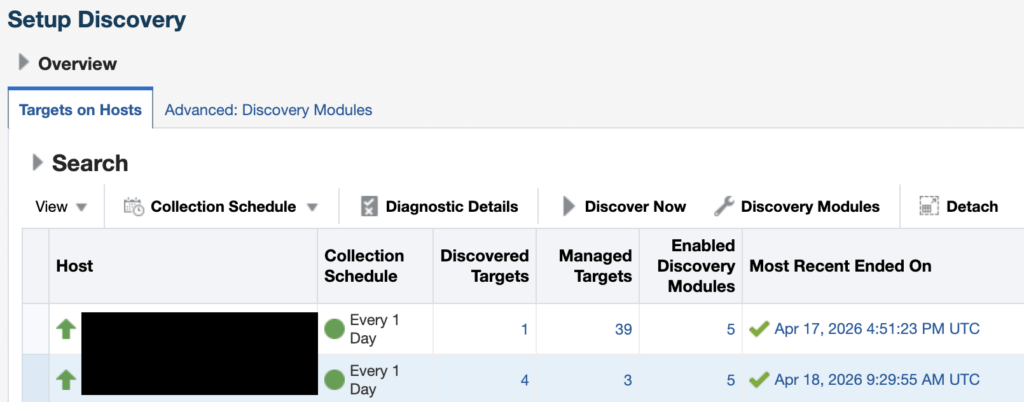

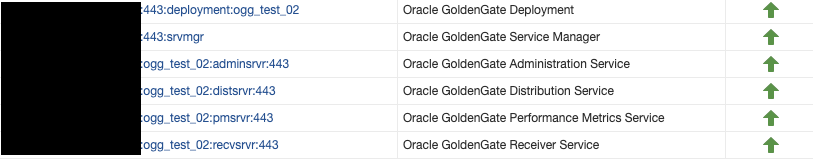

Once this is done, just go back to the Configure Auto Discovery section, click on the correct host, and then click on Discover Now. Then, go back to the Configure Auto Discovery section. You should now see a greater number of targets in the Discovered targets section.

If the number of targets did not increase, despite a successful discovery, check the blog linked above.

Click on the number of targets to jump to the Auto Discovery Results section. Select the newly discovered Service Manager target, and click on Promote. Once the target is promoted, you should see the new GoldenGate targets being monitored by the Enterprise Manager!

The post Install and configure OEM plug-in for GoldenGate first appeared on dbi Blog.

Customer case study – automating SQL Server TLS Encryption with Ansible and Certificates (Architecture)

When working with SQL Server environments, securing client connections can become an important requirement, especially when TLS encryption must be implemented using certificates. In this context, a customer asked us to develop an Ansible playbook and role to automate the configuration of TLS for SQL Server. The certificates are generated from the customer PKI and provided as PEM files containing the server certificate, the private key, and the certificate chain.

However, some extractions and conversions are required before these certificates can be used on Windows and configured for SQL Server.

Here, the idea is to propose a solution (the architecture) that prepares the certificate, imports it on the SQL Server host, and configures SQL Server to use it.

We will also see how to separate the preparation and activation steps in order to reduce the impact on the SQL Server service.

In this blog post, we will describe the global approach and the Ansible logic used to implement certificate-based TLS encryption for SQL Server.

Implementation logic

Before configuring TLS encryption on SQL Server, the first point was to understand the certificate format provided by the customer PKI.

In our case, the generated file is a <machine>.pem file. This file contains the server certificate used for TLS, the private key and the certificate chain with the intermediate and root certificates.

As this format cannot be directly used as-is on the Windows side for SQL Server, some extraction and conversion steps are required.

The general idea is to use the Ansible control node as a working area.

The PEM file is first copied into a temporary folder where the different parts of the certificate are extracted:

- the leaf certificate

- the intermediate certificate

- the root certificate

- the private key

These elements are then used to build a PFX file which can be imported on the Windows SQL Server host.
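The extraction step can be illustrated with a short sketch. The role described here relies on OpenSSL for this work; the Python below is a swapped-in illustration of the splitting logic only, not the role's code. It assumes the bundle lists the certificates in the order leaf, intermediate(s), root, which is an assumption to verify against your PKI output. The function name `split_pem` is hypothetical, and building the PFX is not covered.

```python
import re

# Matches one PEM block, e.g. CERTIFICATE, PRIVATE KEY or RSA PRIVATE KEY.
PEM_BLOCK = re.compile(
    r"-----BEGIN (?P<label>[A-Z0-9 ]+)-----\r?\n.*?-----END (?P=label)-----",
    re.DOTALL,
)


def split_pem(bundle: str) -> dict:
    """Split a PEM bundle into leaf, intermediates, root and private key.

    Assumption: certificates appear in the order leaf, intermediate(s), root.
    """
    certs, keys = [], []
    for match in PEM_BLOCK.finditer(bundle):
        label = match.group("label")
        if label == "CERTIFICATE":
            certs.append(match.group(0))
        elif label.endswith("PRIVATE KEY"):
            keys.append(match.group(0))
    if len(certs) < 3 or len(keys) != 1:
        raise ValueError(
            "expected exactly one private key and at least three certificates"
        )
    return {
        "leaf": certs[0],
        "intermediates": certs[1:-1],
        "root": certs[-1],
        "private_key": keys[0],
    }
```

Since the private key is handled in clear text, any file written from these parts should live in a temporary folder with restrictive permissions and be removed after the run.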

The PFX is installed in the LocalMachine\My certificate store while the intermediate and root certificates are imported into the appropriate Windows certificate stores.

The implementation has been designed around three different execution modes: stage, activate, and full.

The stage mode is used to prepare the certificate without any impact on the SQL Server service. It copies the PEM file, performs the extractions, builds the PFX file, copies it to the managed Windows node and imports the certificates into the Windows certificate stores. No registry change is performed, and the SQL Server service is not restarted. This mode is useful when we want to prepare the server in advance before switching SQL Server to the new certificate.

The activate mode assumes that the certificate is already present on the Windows server. Its role is to configure SQL Server to use the installed certificate and, depending on the selected option, restart the SQL Server service or leave the change pending until the next planned reboot.

This can be useful when the certificate activation must be aligned with an existing maintenance window, for example during monthly OS patching.

The full mode executes the complete configuration from end to end. It performs the extraction and conversion steps, imports the certificates, grants the required permissions, configures SQL Server to use the expected certificate, and restarts the SQL Server service only if required. To avoid unnecessary impact, the role relies on the certificate thumbprint. If the expected certificate is already configured, no change is applied and the SQL Server service is not restarted. This behavior is important for idempotency.

For example, if the full mode is executed after an activate mode, nothing should be changed if the certificate is already the correct one. The same logic applies if the playbook is executed by mistake while the certificate has not been renewed.

Another point to manage is the restart of the SQL Server service. SQL Server loads the certificate configuration when the service starts. Therefore, when a new certificate is configured, the change is only effective after a restart of the SQL Server service.

For this reason the role should provide an option to control whether the restart is performed immediately or postponed to the next planned reboot.
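The interplay between the three modes, the thumbprint check and the restart option can be summarized as a small decision function. This is a sketch of one possible reading of the rules above, not the role's actual code: the names `RunPlan` and `plan_run` are hypothetical, and it assumes that the full mode skips the preparation as well when the expected certificate is already configured.

```python
from dataclasses import dataclass
from typing import Optional

MODES = ("stage", "activate", "full")


@dataclass(frozen=True)
class RunPlan:
    prepare_certificate: bool   # extract PEM parts, build and import the PFX
    configure_sql_server: bool  # point SQL Server at the certificate
    restart_service: bool       # restart now instead of waiting for a reboot


def normalize(thumbprint: Optional[str]) -> str:
    """Compare thumbprints case-insensitively and ignore spaces."""
    return (thumbprint or "").replace(" ", "").upper()


def plan_run(mode: str,
             configured_thumbprint: Optional[str],
             expected_thumbprint: str,
             restart_now: bool = True) -> RunPlan:
    """Decide which steps a run should perform (hypothetical helper)."""
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    if not normalize(expected_thumbprint):
        raise ValueError("expected thumbprint is required")

    already = normalize(configured_thumbprint) == normalize(expected_thumbprint)
    # Assumption: stage always prepares; full prepares only if a change is needed.
    prepare = mode == "stage" or (mode == "full" and not already)
    # Idempotency: never reconfigure when the expected certificate is in place.
    configure = mode in ("activate", "full") and not already
    # A restart only makes sense when the configuration actually changed.
    restart = configure and restart_now
    return RunPlan(prepare, configure, restart)
```

With this logic, running the full mode after a successful activate yields a plan with no step enabled, which matches the idempotent behavior described above.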

We also have to consider DNS aliases. The standard use case is to generate a certificate containing at least the short name and the FQDN of the SQL Server host in the subjectAltName. If DNS aliases are used by client applications, they can also be added to the certificate SAN.

For example:

[alt_names]

DNS.1 = A-WS2022-2.lab.local

DNS.2 = A-WS2022-2

Finally, the customer confirmed that the private key included in the PEM file is not encrypted.

This simplifies the conversion process to PFX, but it also means that the PEM file must be handled carefully during the Ansible execution, especially in temporary folders and during file transfers. With this approach, the role provides a controlled way to prepare, activate, or fully configure TLS encryption for SQL Server while keeping the impact on the SQL Server service under control.

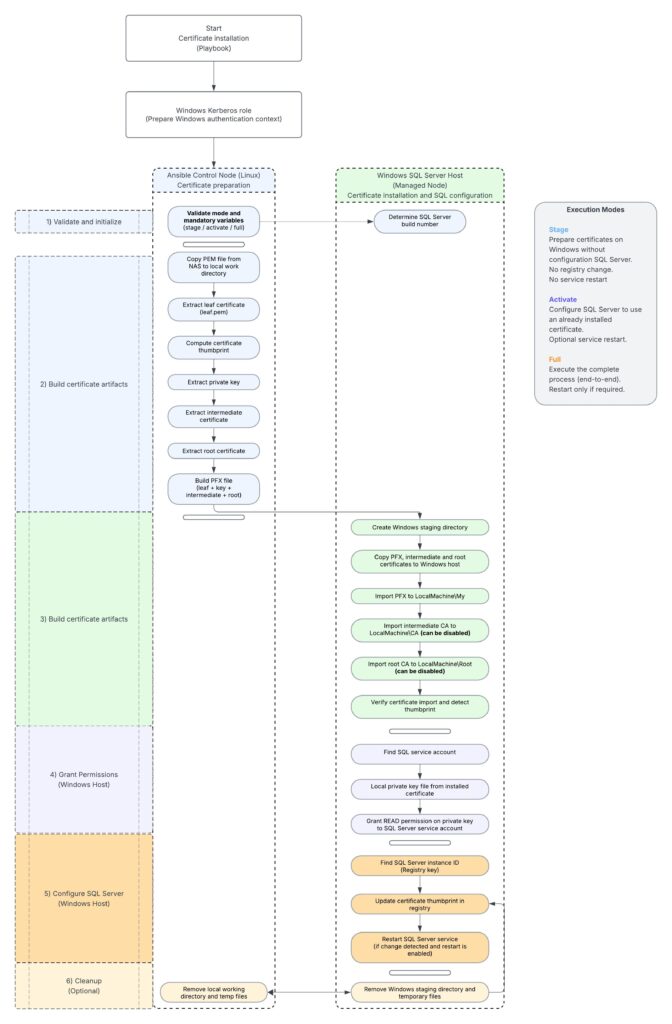

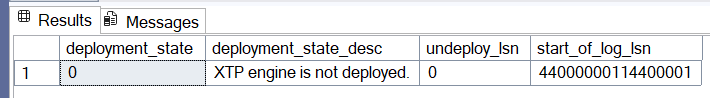

Logical workflow

The complete workflow can be represented as follows:

Architecture summary


The certificate manipulation is performed on the Ansible control node.

The Windows certificate import and SQL Server configuration are performed on the managed Windows SQL Server host.

This separation is useful because the PEM processing and PFX generation are handled with Linux tools such as OpenSSL while the certificate installation, private key permissions, registry configuration and SQL Server restart are handled through Windows modules and PowerShell. The design also supports a controlled deployment approach.

The certificate can first be staged without service impact then activated later during a maintenance window.

The full mode can be used when the complete implementation must be executed in a single run. The use of the certificate thumbprint is important for idempotency. It allows the role to detect whether SQL Server is already configured with the expected certificate and avoids unnecessary service restarts when no change is required.

Remarks

For certain reasons, we do not disclose the code of the role we created.

Thank you. Amine Haloui

The post Customer case study – automating SQL Server TLS Encryption with Ansible and Certificates (Architecture) first appeared on dbi Blog.

How Row Goal shapes your SQL Server query strategy by hunting for pierogis

SQLDay 2026 took place this week, from May 11th to 13th, in Wroclaw. Among the featured speakers was Erik Darling, who delivered both a main session and a full-day workshop dedicated to SQL Server performance. During his presentations, he emphasized a concept that is not always widely understood, known as the Row Goal.

The purpose of this article is to recap Erik’s key observations and to introduce this topic, which can serve as a powerful lever for query optimization.

A quick culinary detour and why pierogis matter

In order to understand the explanations below, one key concept must be understood: the Pierogi.

“Pierogi are filled dumplings made from unleavened dough, popular in Polish cuisine and enjoyed worldwide, with various savory and sweet fillings” [1], [2].

To be honest, this has nothing to do with our technical topic, but this dish discovered during this trip is so good that I simply had to include it in this blog.

Filling the aisles and designing our database

In this article, we will use a custom-made database simulating a Polish supermarket selling pierogis. Unfortunately, there aren’t many left, and the product distribution is not uniform. In fact, pierogis account for much less than 1% of the supermarket’s total stock.

Here is the script to create the DB, along with its article reference table and inventory:

USE master;

GO

IF EXISTS (SELECT * FROM sys.databases WHERE name = 'PierogiMart')

DROP DATABASE PierogiMart;

GO

CREATE DATABASE PierogiMart;

GO

USE PierogiMart;

GO

CREATE TABLE Articles (

ArticleID INT IDENTITY(1,1) PRIMARY KEY,

ArticleName VARCHAR(50) NOT NULL,

Price DECIMAL(10, 2) NOT NULL

);

CREATE TABLE Inventory (

ReferenceID INT IDENTITY(1,1) PRIMARY KEY,

ArticleID INT NOT NULL,

ValidityDate DATETIME NOT NULL,

Quantity INT NOT NULL,

CONSTRAINT FK_Article FOREIGN KEY (ArticleID) REFERENCES Articles(ArticleID)

);

GO

INSERT INTO Articles (ArticleName, Price)

VALUES

('Pierogi', 12.50),

('Pasta', 8.00),

('Sandwich', 6.50),

('Quiche', 9.00);

GO

INSERT INTO Inventory (ArticleID, ValidityDate, Quantity)

SELECT TOP 100000

2,

DATEADD(DAY, ABS(CHECKSUM(NEWID())) % 365, '2025-01-01'),

ABS(CHECKSUM(NEWID())) % 100

FROM sys.all_columns a CROSS JOIN sys.all_columns b;

INSERT INTO Inventory (ArticleID, ValidityDate, Quantity)

SELECT TOP 10000

3,

DATEADD(DAY, ABS(CHECKSUM(NEWID())) % 365, '2025-01-01'),

ABS(CHECKSUM(NEWID())) % 100

FROM sys.all_columns a CROSS JOIN sys.all_columns b;

INSERT INTO Inventory (ArticleID, ValidityDate, Quantity)

SELECT TOP 50000

4,

DATEADD(DAY, ABS(CHECKSUM(NEWID())) % 365, '2025-01-01'),

ABS(CHECKSUM(NEWID())) % 100

FROM sys.all_columns a CROSS JOIN sys.all_columns b;

INSERT INTO Inventory (ArticleID, ValidityDate, Quantity)

SELECT TOP 10

1,

'2026-12-31',

5

FROM sys.all_columns;

GO

We are also including a few indexes to simulate a real-world use case and to support our queries, ensuring we get realistic execution plans:

CREATE NONCLUSTERED INDEX IDX_INV_QUANT ON [dbo].[Inventory] ([Quantity]) include (ArticleID)

CREATE NONCLUSTERED INDEX IDX_INV_VALIDITY on [dbo].[Inventory] ([ValidityDate]) include (ArticleID)

CREATE NONCLUSTERED INDEX IDX_INV_ART on [dbo].[Inventory] (ArticleID)

Normally, the SQL Server optimizer seeks to minimize the total cost of processing all data for a query. However, if it knows that you only need a specific number of rows (for example, via a TOP, FAST(N), or EXISTS clause), it changes its strategy.

The Row Goal is this specific row target that pushes the optimizer to favor a plan capable of delivering the first few rows as quickly as possible, even if that same plan would be catastrophic for processing the entire table.
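The intuition can be shown outside of SQL Server with a toy model. The Python sketch below is not how the optimizer costs plans; it only contrasts a streaming join, which emits rows as soon as they arrive, with a blocking join that must read its whole input first, when only the first N rows are requested. All names and data are made up for the illustration.

```python
from collections import defaultdict
from itertools import islice


def counted(rows, stats):
    """Yield rows one by one, recording how many were actually read."""
    for row in rows:
        stats["rows_read"] += 1
        yield row


def streaming_join(inventory, articles):
    """Nested-loop style: emit a joined row as soon as an input row arrives."""
    for article_id, quantity in inventory:
        name = articles.get(article_id)
        if name is not None:
            yield name, quantity


def blocking_join(inventory, articles):
    """Hash-join style: build a hash table on the whole input before emitting."""
    buckets = defaultdict(list)
    for article_id, quantity in inventory:  # every input row is read up front
        buckets[article_id].append(quantity)
    for article_id, name in articles.items():
        for quantity in buckets.get(article_id, []):
            yield name, quantity


def rows_read_for_top(join, n, inventory, articles):
    """Return (rows returned, input rows read) when only n rows are requested."""
    stats = {"rows_read": 0}
    result = list(islice(join(counted(inventory, stats), articles), n))
    return len(result), stats["rows_read"]


if __name__ == "__main__":
    articles = {1: "Pierogi", 2: "Pasta"}
    inventory = [(2, q % 100) for q in range(1000)]  # made-up data
    print(rows_read_for_top(streaming_join, 10, inventory, articles))
    print(rows_read_for_top(blocking_join, 10, inventory, articles))
```

With this made-up data, the streaming join reads 10 input rows to return 10 rows, while the blocking join reads all 1000. The same streaming plan would be the wrong choice if every row had to be returned, which is the trade-off the Row Goal introduces.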

TOP(N): Hunting for the best Pierogi

To illustrate the definition above, let’s search for the pierogis with the furthest expiration dates.

Note that the IDX_INV_VALIDITY index supports this query:

SELECT

A.ArticleName,

A.Price,

I.ValidityDate

FROM Articles A

INNER JOIN Inventory I ON A.ArticleID = I.ArticleID

WHERE A.ArticleName = 'Pierogi'

order by I.ValidityDate desc;

SELECT top 10

A.ArticleName,

A.Price,

I.ValidityDate

FROM Articles A

INNER JOIN Inventory I ON A.ArticleID = I.ArticleID

WHERE A.ArticleName = 'Pierogi'

order by I.ValidityDate desc

The difference between these two queries is that one requests only the first 10 rows, while the other requests all matching rows. However, this simple distinction is not merely applied when displaying the results; this condition is pushed deeper into the execution plan to influence the choice of operators (Nested Loop, Hash Join, Merge Join) further down the tree.

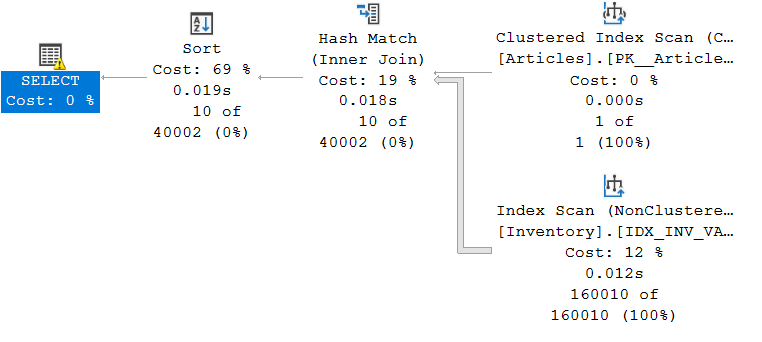

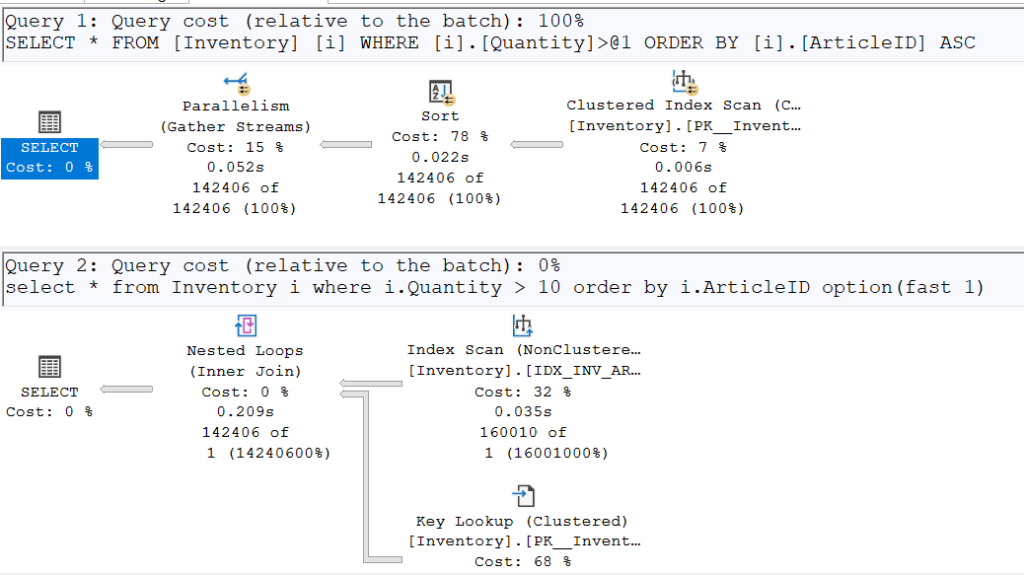

For the first query, here is the resulting plan:

As we can see, the optimizer chose a Hash Join given the volume of data to be joined. A Hash Match implies that all the data must be read in order to produce the desired result.

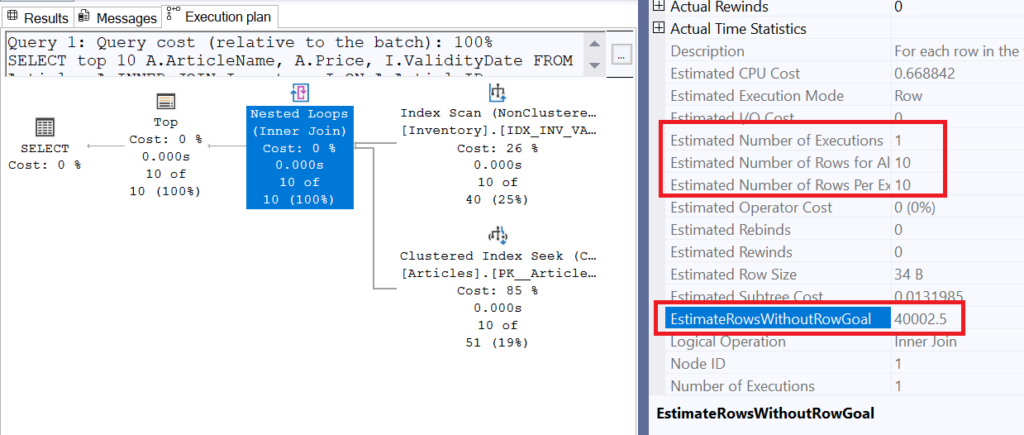

For the second query, here is the execution plan:

We can see that this time, the optimizer chose a Nested Loop, which takes each row from the reference table (Inventory) and joins them with the Articles table. This operation can be very time-consuming if a large number of rows must be processed. However, this is where EstimateRowsWithoutRowGoal comes into play. The value of this property is 40’002.5; this means that in a case where a subset of rows was not specifically required, the optimizer would have estimated the number of rows returned by this operator at that value. We can see, however, that the estimation actually used is 10 rows for one execution, a value clearly derived from the TOP(10).

In summary, adding the TOP(10) allowed the optimizer to use a less expensive join for a small amount of data, even though the TOP operator is located at the very end of the execution plan (since a plan is read from right to left).

As explained previously, the EXISTS clause has a cardinality of 1 because the very first row meeting the internal condition is enough to validate the case. This triggers a Row Goal, as the optimizer must estimate how many rows it will need to read to satisfy (or not) this condition.

Note: In cases where the condition is never met, the optimizer’s plan can become highly inefficient; for full details, see Erik Darling’s blog [here].

We will now observe this behavior with the following query, varying the internal condition of the EXISTS clause by testing one highly selective (discriminant) case and another much less so.

SELECT

A.ArticleName,

A.Price

FROM Articles A

WHERE not EXISTS (

SELECT 1/0

FROM Inventory I

WHERE I.ArticleID = A.ArticleID

AND I.Quantity > 10 -- vs 98

);

As you may have noticed, I am looking here for products that maintain a certain quantity for every possible consumption date. My goal, of course, is to avoid depleting the stocks of these excellent Polish pierogis so that everyone can enjoy them!

The case where we want to ensure that all existing quantities for an item are greater than 10 is very difficult to satisfy; based on the statistics available to the optimizer, all items have 10 or more units in stock, except for the pierogis!

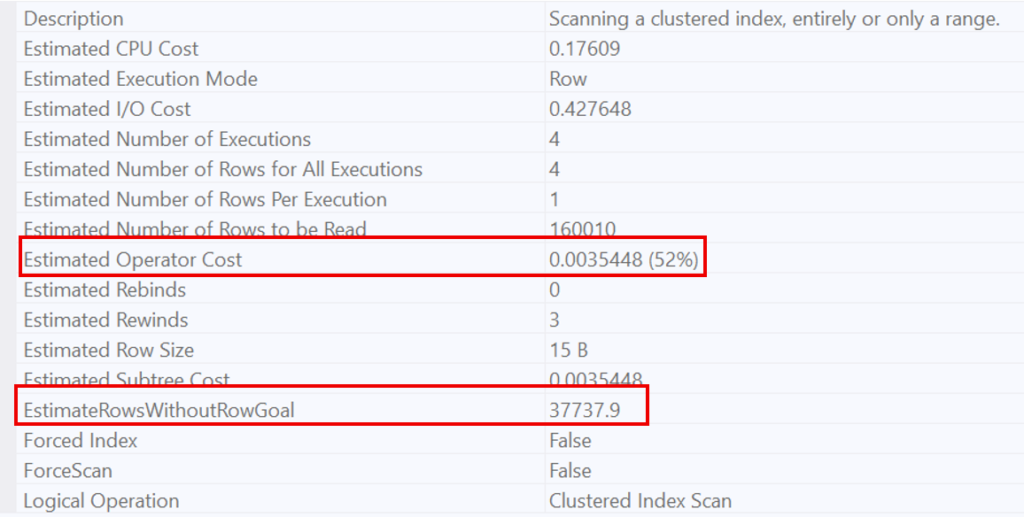

Since this condition is so widespread, the optimizer knows it will have to scan a large number of rows to find a single case where the condition is not met. This is why it opts for a Scan. This behavior is evidenced by the estimated number of rows to be read (160’010, which represents the entire table).

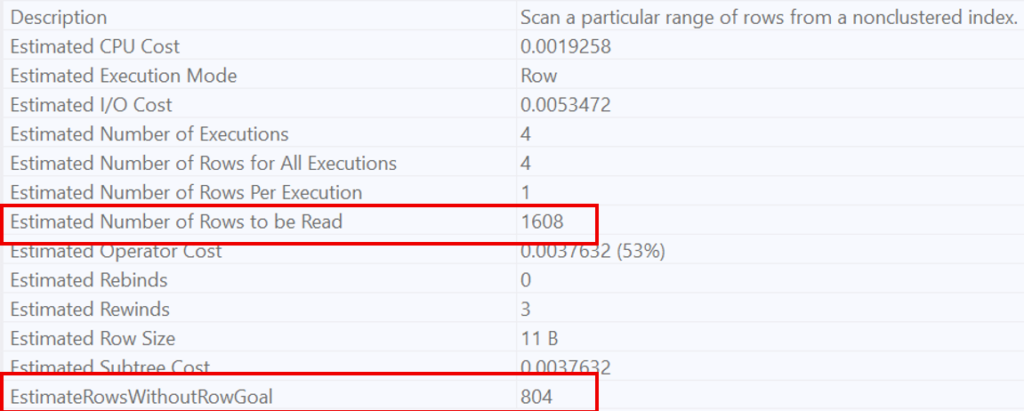

On the other hand, for a very restrictive condition (quantity > 98), the optimizer recognizes that this condition is highly selective. This is why it favors a Nested Loop, estimating that only 1’608 rows will be necessary to prove the non-existence of the condition.

In summary, EXISTS forces the optimizer to estimate the number of rows required to find a single occurrence that proves whether a condition is met or not, thereby triggering a local optimization of the execution plan.

The OPTION(FAST N) hint allows you to manually introduce the Row Goal concept into a query. This hint does not limit the total number of results returned; instead, it optimizes the execution plan to retrieve the first N rows as quickly as possible (potentially at the expense of performance for the remaining rows).

In our example below, we have two identical queries retrieving items with a quantity greater than 10. However, the second one uses an execution plan optimized to return the first row as fast as possible (just to make sure no one steals the last available pierogi from the top of the pile!).

select * from Inventory i

where i.Quantity > 10

order by i.ArticleID

select * from Inventory i

where i.Quantity > 10

order by i.ArticleID option(fast 1)

Once again, the plans diverge. To retrieve a single row, the IDX_INV_ART index (which already contains sorted ArticleIDs) is used. It performs a Seek on the smallest ArticleID to check if it satisfies the condition of having a quantity greater than 10.

However, by enabling SET STATISTICS TIME ON, we can see that the second execution plan is slower than the first when returning all requested rows (250ms vs. 204ms). While the gap is not massive due to the small table size, the difference is nonetheless observable.

To conclude, the Row Goal is a double-edged sword; brilliant when you only need a quick glimpse of your data, but it can become a real performance trap if the optimizer’s “bet” fails.

Fortunately, if you find that SQL Server is making bad decisions by being too optimistic, you can take back control. By using the hint OPTION (USE HINT ('DISABLE_OPTIMIZER_ROWGOAL')), you force the optimizer to stop daydreaming and focus on the actual cost of the query. It’s the ultimate tool to ensure your execution plan doesn’t end up as messy as a dropped plate of pierogis!

The post How Row Goal shapes your SQL Server query strategy by hunting for pierogis first appeared on dbi Blog.

SQL Server Snapshot Backup and Restore with Proxmox ZFS – REST API with SQL Server 2025 (3/3)

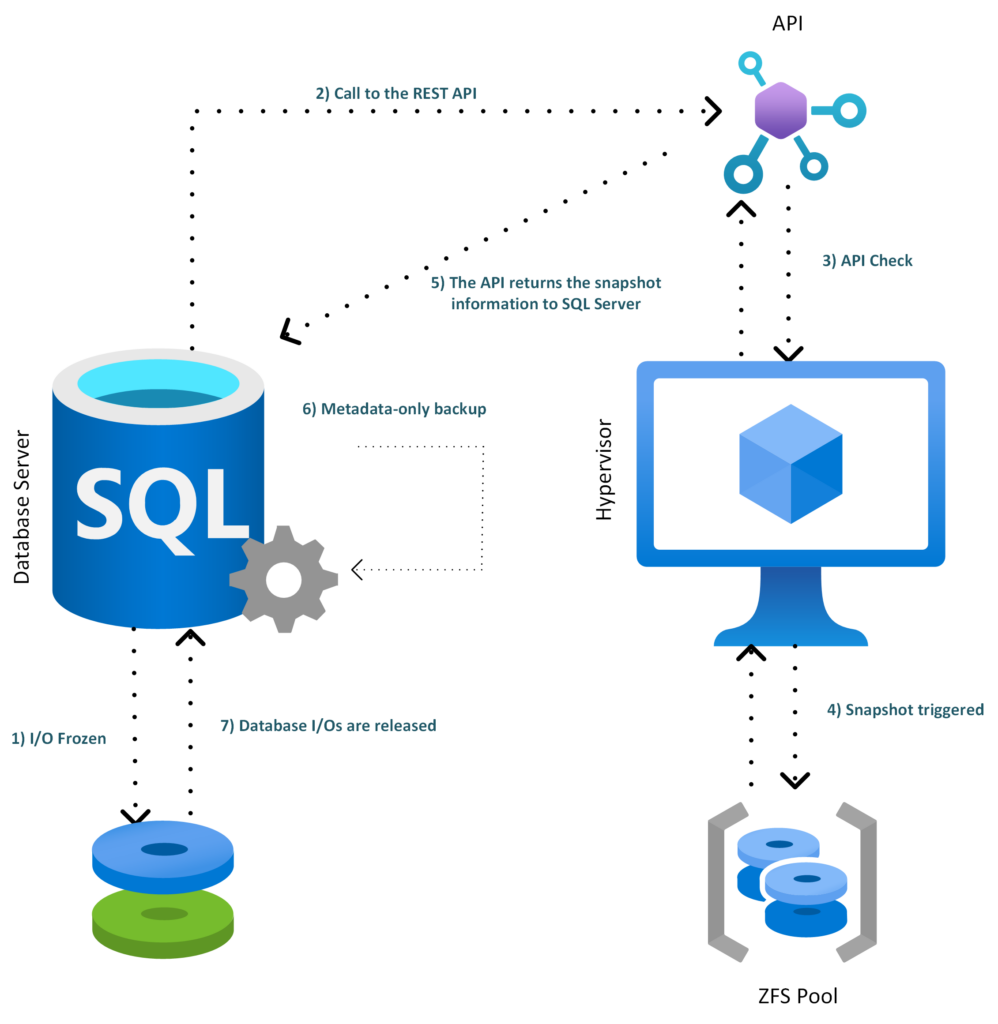

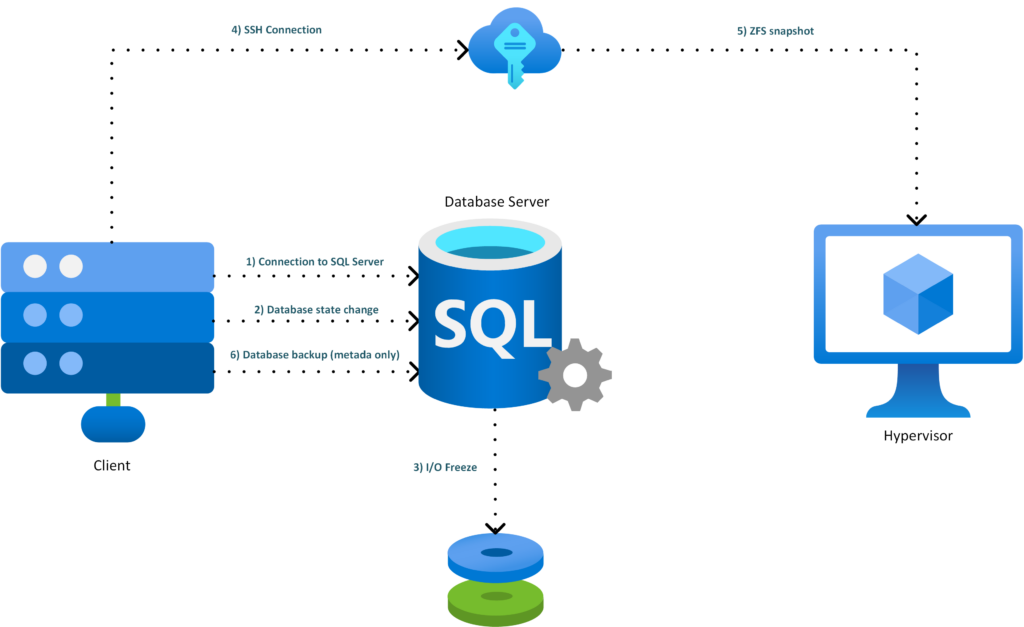

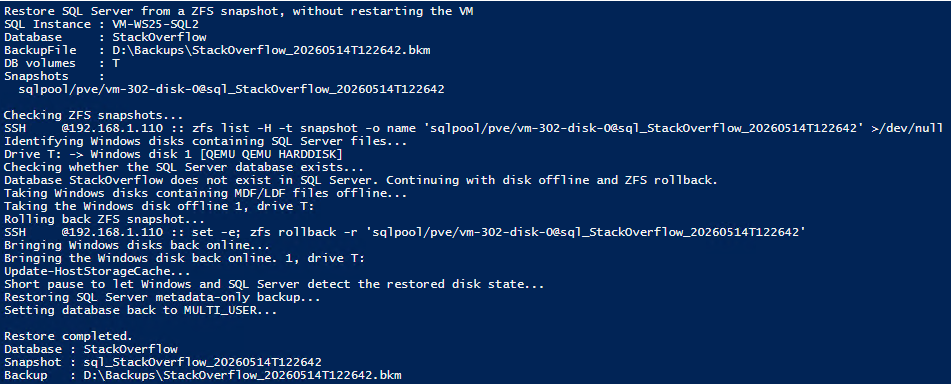

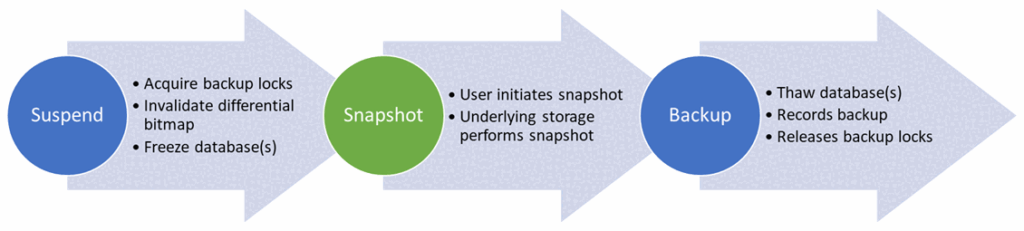

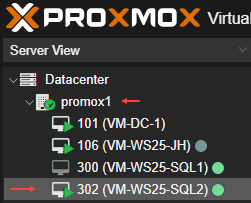

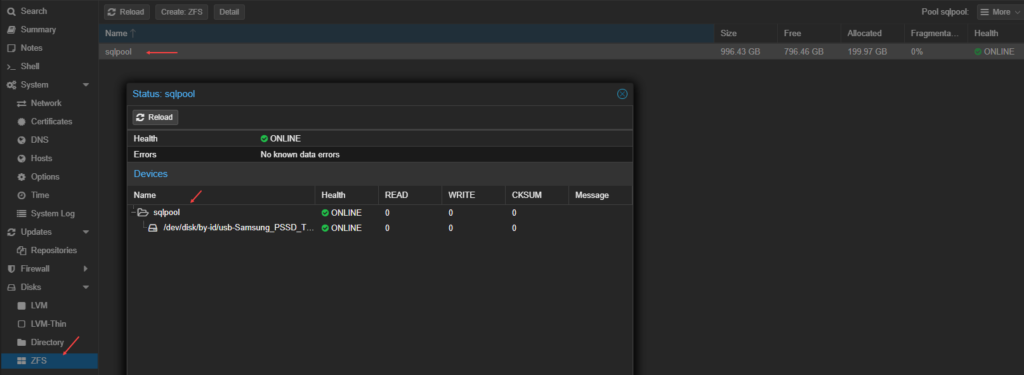

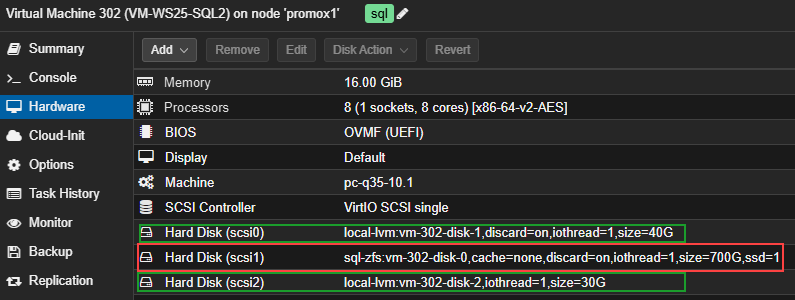

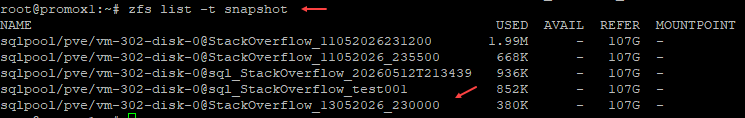

The proposed architecture consists of adding a small internal REST API on the Proxmox server in order to expose a controlled ZFS snapshot operation. SQL Server 2025 can then call this API through sp_invoke_external_rest_endpoint, instead of running SSH commands directly or relying on an external tool.

The role of the API is deliberately limited: it receives a snapshot request, checks that the requested zvol is authorized, and then runs the zfs snapshot command on the Proxmox side. An allowlist is used to restrict the ZFS volumes that can be accessed. This prevents a REST call from being able to manipulate any dataset on the server.
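The validation can be sketched in a few lines of Python. This is an illustration only, not the API code of this post: the function names are hypothetical, and the rules (snapshot name pattern, between one and eight zvols, exact match against the allowlist) mirror those enforced by the helper script shown later. One small difference: this sketch strips surrounding whitespace from allowlist entries.

```python
import re
from typing import Iterable, List, Set

# Same character set and length limit as the helper script's snapshot name check.
SNAPNAME_RE = re.compile(r"[A-Za-z0-9_.:-]{1,120}")
MAX_ZVOLS = 8


def parse_allowlist(text: str) -> Set[str]:
    """Return the allowed zvols, ignoring blank lines and # comments."""
    entries = (line.strip() for line in text.splitlines())
    return {entry for entry in entries if entry and not entry.startswith("#")}


def validate_snapshot_request(snapname: str,
                              zvols: Iterable[str],
                              allowed: Set[str]) -> List[str]:
    """Validate a request and return the full snapshot names to create."""
    zvols = list(zvols)
    if not SNAPNAME_RE.fullmatch(snapname):
        raise ValueError(f"invalid snapshot name: {snapname!r}")
    if not 1 <= len(zvols) <= MAX_ZVOLS:
        raise ValueError("between 1 and 8 zvols must be specified")
    for zvol in zvols:
        # Exact match only: no prefix or pattern matching against the allowlist.
        if zvol not in allowed:
            raise PermissionError(f"dataset not allowed: {zvol}")
    return [f"{zvol}@{snapname}" for zvol in zvols]
```

Rejecting the request before any command is built keeps the check independent from the way the snapshot is eventually executed.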

With this approach, we can reproduce a behavior close to what an enterprise storage array provides, but using Proxmox and ZFS. It is important to note that Proxmox does not natively provide the same level of integration as Pure Storage for SQL Server snapshots. Pure Storage provides dedicated mechanisms and integrations. In our case, we need to build a specific orchestration layer. The REST API therefore acts as an adapter between SQL Server, which drives the snapshot backup workflow, and ZFS, which actually performs the storage-level snapshot.

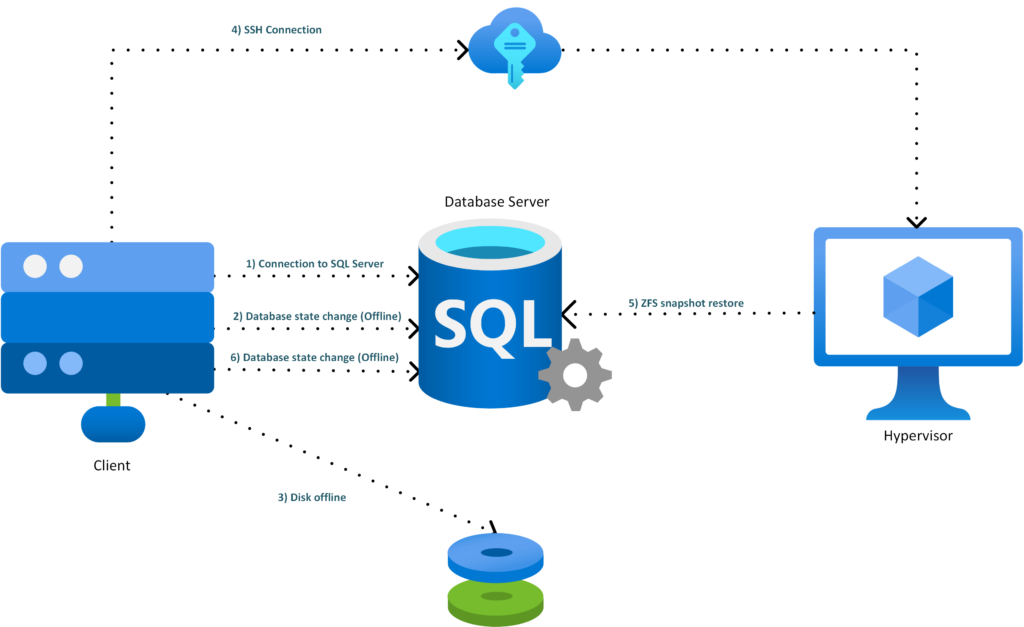

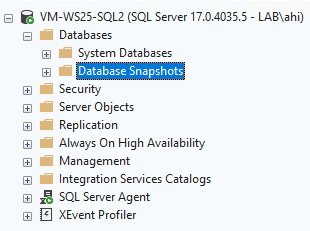

Architecture

Here is a global overview of the architecture:

- SQL Server freezes the database I/Os

- SQL Server 2025 calls the internal REST API

- The REST API validates the request and checks the zvol allowlist

- The API triggers the ZFS snapshot on Proxmox

- The API returns the snapshot information to SQL Server

- SQL Server creates the metadata-only backup

- The database I/Os are released

REST API implementation

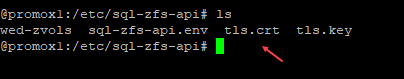


Under Proxmox, we install the required packages:

apt update

apt install -y python3-venv sudo openssl

We create a dedicated user:

useradd --system \

--home /opt/sql-zfs-api \

--shell /usr/sbin/nologin \

sqlsnap

We create the following folders:

mkdir -p /opt/sql-zfs-api

mkdir -p /etc/sql-zfs-api

We declare the authorized zvol:

cat >/etc/sql-zfs-api/allowed-zvols <<'EOF'

sqlpool/pve/vm-302-disk-0

EOF

We make the allowlist readable by root only:

chown root:root /etc/sql-zfs-api/allowed-zvols

chmod 600 /etc/sql-zfs-api/allowed-zvols

Then we create the secured ZFS helper. This script is executed as root through sudo, but it rejects any dataset that is not defined in the allowlist.

cat >/usr/local/sbin/sql-zfs-helper <<'EOF'
#!/usr/bin/env bash
set -euo pipefail

ALLOW_FILE="/etc/sql-zfs-api/allowed-zvols"
LOCK_FILE="/run/sql-zfs-helper.lock"

die() {
  echo "$*" >&2
  exit 1
}

exec 9>"$LOCK_FILE"
flock -n 9 || die "another snapshot operation is already running"

[[ -r "$ALLOW_FILE" ]] || die "allowlist not readable: $ALLOW_FILE"
mapfile -t ALLOWED_DATASETS < <(grep -Ev '^\s*(#|$)' "$ALLOW_FILE")

is_allowed() {
  local ds="$1"
  local allowed
  for allowed in "${ALLOWED_DATASETS[@]}"; do
    [[ "$ds" == "$allowed" ]] && return 0
  done
  return 1
}

valid_snapname() {
  [[ "$1" =~ ^[A-Za-z0-9_.:-]{1,120}$ ]]
}

ACTION="${1:-}"
shift || true

case "$ACTION" in
  snapshot)
    SNAPNAME="${1:-}"
    shift || true

    valid_snapname "$SNAPNAME" || die "invalid snapshot name: $SNAPNAME"
    [[ "$#" -ge 1 ]] || die "no zvol specified"
    [[ "$#" -le 8 ]] || die "too many zvols"

    SNAPSHOTS=()
    for DS in "$@"; do
      is_allowed "$DS" || die "dataset not allowed: $DS"
      /sbin/zfs list -H -t volume -o name "$DS" >/dev/null 2>&1 || die "zvol not found: $DS"
      FULLSNAP="${DS}@${SNAPNAME}"
      if /sbin/zfs list -H -t snapshot -o name "$FULLSNAP" >/dev/null 2>&1; then
        die "snapshot already exists: $FULLSNAP"
      fi
      SNAPSHOTS+=("$FULLSNAP")
    done

    /sbin/zfs snapshot "${SNAPSHOTS[@]}"
    /sbin/zfs hold sqlsnap "${SNAPSHOTS[@]}"

    printf '{"status":"ok","snapshots":['
    SEP=""
    for S in "${SNAPSHOTS[@]}"; do
      printf '%s"%s"' "$SEP" "$S"
      SEP=","
    done
    printf ']}\n'
    ;;

  list)
    /sbin/zfs list -H -t snapshot -o name -r sqlpool | grep '@sql_' || true
    ;;

  *)
    die "usage: sql-zfs-helper snapshot SNAPNAME ZVOL [ZVOL...]"
    ;;
esac
EOF

chown root:root /usr/local/sbin/sql-zfs-helper

chmod 750 /usr/local/sbin/sql-zfs-helper
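The helper validates every input before it runs a single zfs command. As a rough Python sketch of that "validate everything first, then act once" logic (the function name `plan_snapshot_command` is hypothetical, and the existence checks done with `zfs list` are left out because they need a real pool):

```python
import re

# Same pattern as the helper's valid_snapname function.
SNAP_RE = re.compile(r"^[A-Za-z0-9_.:-]{1,120}$")


def plan_snapshot_command(snapname: str, zvols: list[str], allowed: set[str]) -> list[str]:
    """Return the zfs argv for one snapshot call, or raise ValueError."""
    if not SNAP_RE.fullmatch(snapname):
        raise ValueError(f"invalid snapshot name: {snapname}")
    if len(zvols) < 1:
        raise ValueError("no zvol specified")
    if len(zvols) > 8:
        raise ValueError("too many zvols")
    for zvol in zvols:
        if zvol not in allowed:
            raise ValueError(f"dataset not allowed: {zvol}")
    # Nothing is executed here: the command is only built once all checks pass.
    return ["/sbin/zfs", "snapshot", *[f"{zvol}@{snapname}" for zvol in zvols]]


print(plan_snapshot_command(
    "sql_demo_1",
    ["sqlpool/pve/vm-302-disk-0"],
    {"sqlpool/pve/vm-302-disk-0"},
))
```

Building the full list of snapshot names first means that a rejected zvol in the middle of the list never leaves a partially snapshotted set behind.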

We only allow the helper through sudo:

cat >/etc/sudoers.d/sql-zfs-helper <<'EOF'

sqlsnap ALL=(root) NOPASSWD: /usr/local/sbin/sql-zfs-helper *

EOF

chmod 440 /etc/sudoers.d/sql-zfs-helper

visudo -cf /etc/sudoers.d/sql-zfs-helper

We install FastAPI and Uvicorn in a dedicated virtual environment:

python3 -m venv /opt/sql-zfs-api/venv

/opt/sql-zfs-api/venv/bin/pip install fastapi "uvicorn[standard]"

We create the application file:

cat >/opt/sql-zfs-api/app.py <<'EOF'
import os
import re
import json
import socket
import secrets
import subprocess
from datetime import datetime, timezone

from fastapi import FastAPI, Header, HTTPException
from pydantic import BaseModel, Field

API_KEY = os.environ.get("SQL_ZFS_API_KEY", "")
ALLOW_FILE = "/etc/sql-zfs-api/allowed-zvols"
SNAP_RE = re.compile(r"^[A-Za-z0-9_.:-]{1,120}$")

app = FastAPI(title="SQL ZFS Snapshot API", version="1.0.0")


class SnapshotRequest(BaseModel):
    database: str = Field(..., min_length=1, max_length=128)
    vmid: int = 302
    snapname: str = Field(..., min_length=1, max_length=120)
    zvols: list[str] = Field(..., min_length=1, max_length=8)


def load_allowed_zvols() -> set[str]:
    with open(ALLOW_FILE, "r", encoding="utf-8") as f:
        return {
            line.strip()
            for line in f
            if line.strip() and not line.strip().startswith("#")
        }


def check_api_key(x_sqlsnap_key: str | None) -> None:
    if not API_KEY:
        raise HTTPException(status_code=500, detail="API key not configured")
    if not x_sqlsnap_key:
        raise HTTPException(status_code=401, detail="missing API key")
    if not secrets.compare_digest(x_sqlsnap_key, API_KEY):
        raise HTTPException(status_code=403, detail="invalid API key")


@app.get("/health")
def health():
    return {
        "status": "ok",
        "host": socket.gethostname(),
        "utc": datetime.now(timezone.utc).isoformat(),
    }


@app.post("/v1/sql-zfs/snapshot")
def create_snapshot(
    req: SnapshotRequest,
    x_sqlsnap_key: str | None = Header(default=None, alias="x-sqlsnap-key"),
):
    check_api_key(x_sqlsnap_key)

    if not SNAP_RE.fullmatch(req.snapname):
        raise HTTPException(status_code=400, detail="invalid snapname")

    allowed = load_allowed_zvols()
    for zvol in req.zvols:
        if zvol not in allowed:
            raise HTTPException(status_code=403, detail=f"zvol not allowed: {zvol}")

    cmd = [
        "sudo",
        "/usr/local/sbin/sql-zfs-helper",
        "snapshot",
        req.snapname,
        *req.zvols,
    ]

    try:
        completed = subprocess.run(
            cmd,
            text=True,
            stdout=subprocess.PIPE,
            stderr=subprocess.PIPE,
            timeout=30,
            check=False,
        )
    except subprocess.TimeoutExpired:
        raise HTTPException(status_code=504, detail="zfs snapshot timeout")

    if completed.returncode != 0:
        raise HTTPException(
            status_code=500,
            detail={
                "error": completed.stderr.strip(),
                "stdout": completed.stdout.strip(),
            },
        )

    snapshots = [f"{zvol}@{req.snapname}" for zvol in req.zvols]

    return {
        "status": "ok",
        "database": req.database,
        "vmid": req.vmid,
        "snapname": req.snapname,
        "snapshots": snapshots,
        "media_description": "zfs|" + socket.gethostname() + "|" + ";".join(snapshots),
    }
EOF

chown -R root:root /opt/sql-zfs-api

chmod 755 /opt/sql-zfs-api

chmod 644 /opt/sql-zfs-api/app.py
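The `media_description` value returned by the endpoint packs the storage type, the host name and the snapshot list into one string. A restore script could unpack it again; the following is a small sketch under that assumption, where `MediaDescription` and `parse_media_description` are hypothetical names that do not exist in the application above.

```python
from typing import NamedTuple


class MediaDescription(NamedTuple):
    backend: str
    host: str
    snapshots: list[str]


def parse_media_description(value: str) -> MediaDescription:
    """Split 'zfs|<host>|<snap1>;<snap2>' into its three parts.

    Raises ValueError when the string has fewer than three '|' separated fields.
    """
    backend, host, snapshot_field = value.split("|", 2)
    # Drop empty items so that an empty snapshot field gives an empty list.
    snapshots = [s for s in snapshot_field.split(";") if s]
    return MediaDescription(backend, host, snapshots)


md = parse_media_description(
    "zfs|promox1|sqlpool/pve/vm-302-disk-0@sql_StackOverflow_20250102T030405Z"
)
print(md.backend, md.host, md.snapshots)
```

Keeping this information in the backup header means that the metadata backup file alone is enough to find which ZFS snapshot it belongs to.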

We generate the API key:

APIKEY="$(openssl rand -hex 32)"

echo "$APIKEY"

We create the environment file:

cat >/etc/sql-zfs-api/sql-zfs-api.env <<EOF

SQL_ZFS_API_KEY=$APIKEY

EOF

chown root:root /etc/sql-zfs-api/sql-zfs-api.env

chmod 600 /etc/sql-zfs-api/sql-zfs-api.env

We need to save the generated key, because every client calling the API has to send it in the x-sqlsnap-key header.
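For readers who prefer to stay in Python, an equivalent key can be produced with the standard library. This is only a sketch: `generate_api_key` and `key_matches` are hypothetical helper names, and the comparison is done on bytes in constant time, in the same spirit as the check performed by the API.

```python
import secrets


def generate_api_key() -> str:
    """Return 32 random bytes as 64 hexadecimal characters."""
    return secrets.token_hex(32)


def key_matches(presented: str, expected: str) -> bool:
    """Compare two keys in constant time to avoid leaking timing information."""
    return secrets.compare_digest(presented.encode("utf-8"), expected.encode("utf-8"))


key = generate_api_key()
print(len(key))
print(key_matches(key, key))
```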

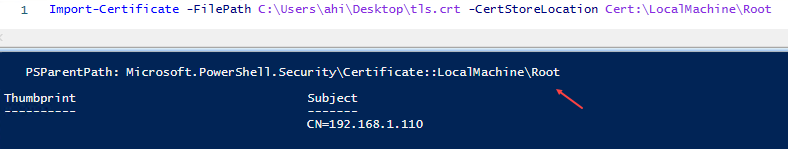

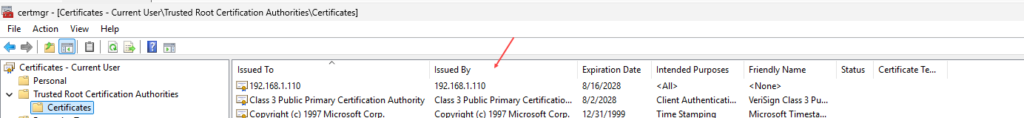

Next, we enable HTTPS. According to the documentation, sp_invoke_external_rest_endpoint only supports HTTPS endpoints secured with TLS.

openssl req -x509 -newkey rsa:4096 -sha256 -days 360 -nodes \
  -keyout /etc/sql-zfs-api/tls.key \
  -out /etc/sql-zfs-api/tls.crt \
  -subj "/CN=promox1" \
  -addext "subjectAltName=DNS:promox1,IP:192.168.1.110"

chown root:sqlsnap /etc/sql-zfs-api/tls.key /etc/sql-zfs-api/tls.crt

chmod 640 /etc/sql-zfs-api/tls.key

chmod 644 /etc/sql-zfs-api/tls.crt

The /etc/sql-zfs-api/tls.crt certificate must be imported into the Windows trusted root certification authorities on the SQL Server side. Otherwise, the HTTPS call may fail.

We create the systemd service:

cat >/etc/systemd/system/sql-zfs-api.service <<'EOF'

[Unit]

Description=SQL Server to ZFS Snapshot API

After=network-online.target

Wants=network-online.target

[Service]

User=sqlsnap

Group=sqlsnap

WorkingDirectory=/opt/sql-zfs-api

EnvironmentFile=/etc/sql-zfs-api/sql-zfs-api.env

ExecStart=/opt/sql-zfs-api/venv/bin/uvicorn app:app --host 0.0.0.0 --port 8443 --ssl-keyfile /etc/sql-zfs-api/tls.key --ssl-certfile /etc/sql-zfs-api/tls.crt

Restart=on-failure

RestartSec=3

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable --now sql-zfs-api

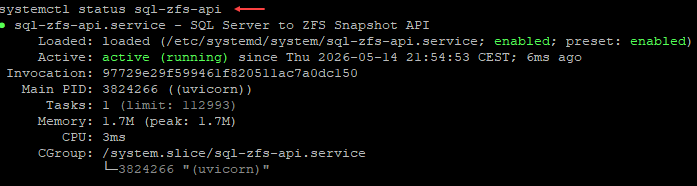

We check the status of our API:

systemctl status sql-zfs-api

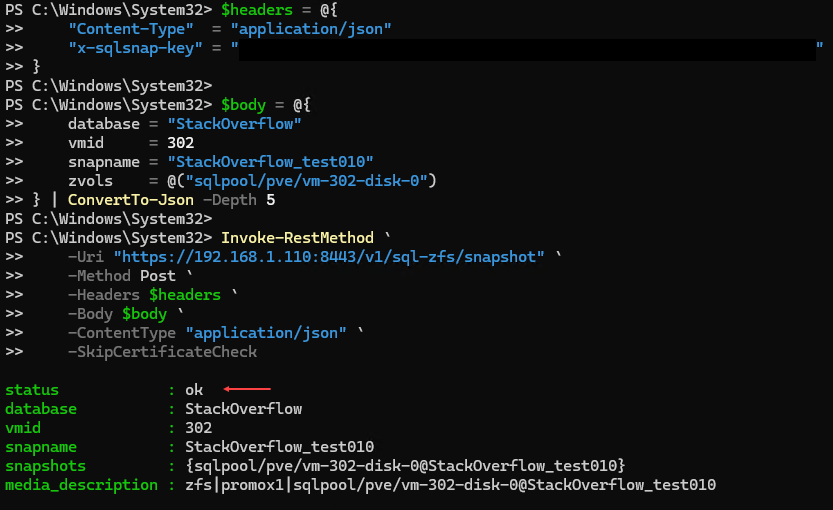
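With the service running, the request expected by the snapshot endpoint can be sketched from the client side with the Python standard library. The function `build_snapshot_request` is a hypothetical name and the key and snapshot name are placeholders. The sketch only builds the request; actually sending it would also require an SSL context that trusts the self-signed certificate, which is not shown here.

```python
import json
import urllib.request


def build_snapshot_request(base_url, api_key, database, snapname, zvols, vmid=302):
    """Build (but do not send) the POST request for the snapshot endpoint."""
    payload = {
        "database": database,
        "vmid": vmid,
        "snapname": snapname,
        "zvols": zvols,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/sql-zfs/snapshot",
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            "x-sqlsnap-key": api_key,  # header checked by the API
        },
    )


req = build_snapshot_request(
    "https://192.168.1.110:8443",
    "MyKey",
    "StackOverflow",
    "StackOverflow_test010",
    ["sqlpool/pve/vm-302-disk-0"],
)
print(req.get_method(), req.full_url)
```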

The API can be called from PowerShell 7 with Invoke-RestMethod. The -SkipCertificateCheck switch bypasses certificate validation, so it should only be used for a quick test:

$headers = @{
    "Content-Type"  = "application/json"
    "x-sqlsnap-key" = "MyKey"
}

$body = @{
    database = "StackOverflow"
    vmid     = 302
    snapname = "StackOverflow_test010"
    zvols    = @("sqlpool/pve/vm-302-disk-0")
} | ConvertTo-Json -Depth 5

Invoke-RestMethod `
    -Uri "https://192.168.1.110:8443/v1/sql-zfs/snapshot" `
    -Method Post `
    -Headers $headers `
    -Body $body `
    -ContentType "application/json" `
    -SkipCertificateCheck

This gives:

Test from SQL Server

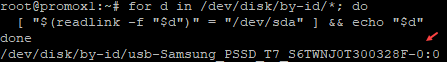

A certificate was generated on Proxmox and it needs to be imported on the SQL Server host. In my case, it was located here:

I then imported it on Windows Server:

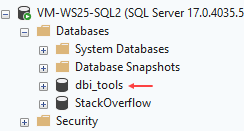

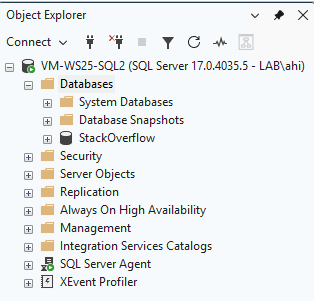

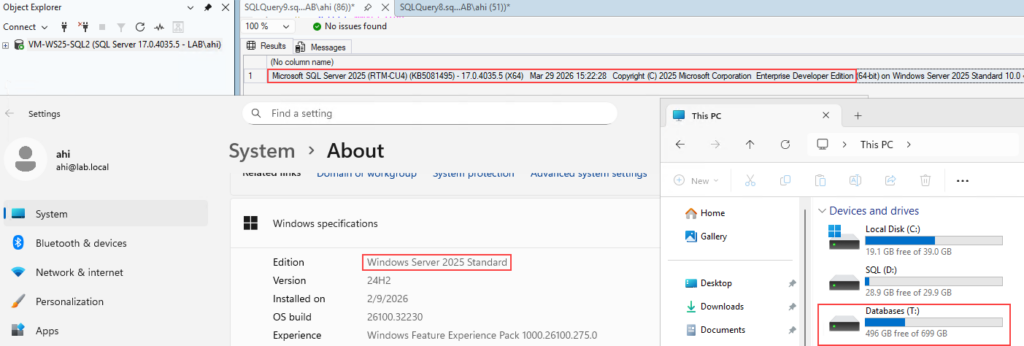

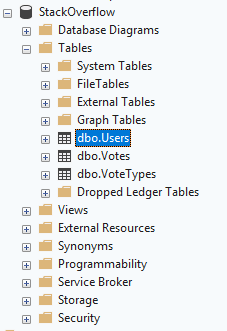

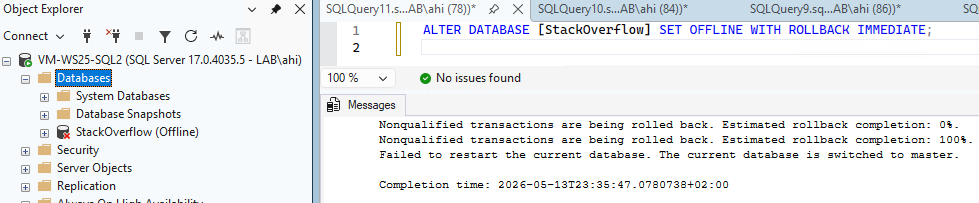

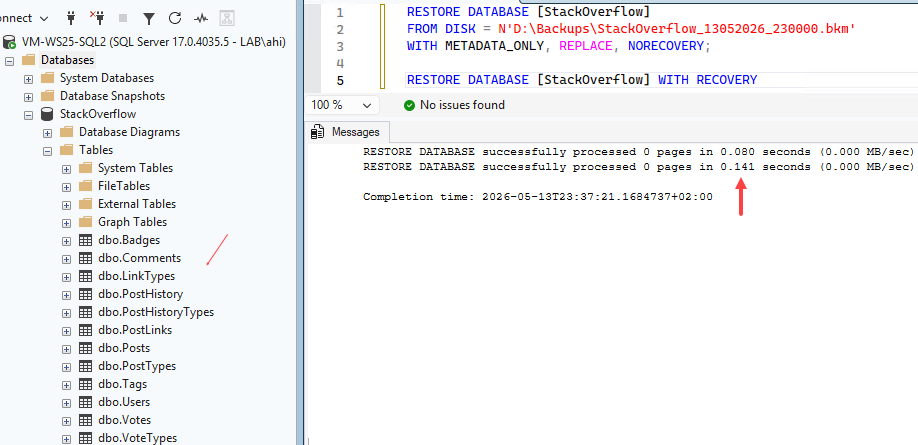

For testing purposes, I created something simple. On the SQL Server side, we can create a database that will be used to store our future stored procedure. This procedure will allow us to interact with the API. In my case, I created a database called dbi_tools:

This database will contain a credential. In our case, the DATABASE SCOPED CREDENTIAL is used to securely store the authentication information required to call the REST API from SQL Server. This allows us, for example, to protect the API key:

USE [dbi_tools]

GO

IF NOT EXISTS (

SELECT 1

FROM sys.symmetric_keys

WHERE name = '##MS_DatabaseMasterKey##'

)

BEGIN

CREATE MASTER KEY ENCRYPTION BY PASSWORD = 'MyStrongPassword_%99';

END

GO

CREATE DATABASE SCOPED CREDENTIAL [https://192.168.1.110:8443/v1/sql-zfs/snapshot]

WITH

IDENTITY = 'HTTPEndpointHeaders',

SECRET = '{"x-sqlsnap-key":"MyAPIKey"}';

GO

We then create a stored procedure to encapsulate the code used to call the API:

USE dbi_tools;

GO

CREATE OR ALTER PROCEDURE dbo.usp_BackupDatabase_WithZfsSnapshot

@DatabaseName sysname,

@BackupDirectory nvarchar(4000) = N'D:\Backups\'

AS

BEGIN

SET NOCOUNT ON;

DECLARE @Url nvarchar(4000) =

N'https://192.168.1.110:8443/v1/sql-zfs/snapshot';

DECLARE @Vmid int = 302;

DECLARE @ZvolsJson nvarchar(max) =

N'["sqlpool/pve/vm-302-disk-0"]';

DECLARE @Stamp varchar(20) =

REPLACE(REPLACE(CONVERT(varchar(19), SYSUTCDATETIME(), 126), '-', ''), ':', '') + 'Z';

DECLARE @SafeDbName nvarchar(128) =

REPLACE(REPLACE(REPLACE(@DatabaseName, N' ', N'_'), N'[', N''), N']', N'');

DECLARE @SnapName nvarchar(128) =

CONCAT(N'sql_', @SafeDbName, N'_', @Stamp);

DECLARE @BackupFile nvarchar(4000) =

CONCAT(@BackupDirectory, N'\', @SafeDbName, N'_', @Stamp, N'.bkm');

DECLARE @Payload nvarchar(max) =

(

SELECT

@DatabaseName AS [database],

@Vmid AS [vmid],

@SnapName AS [snapname],

JSON_QUERY(@ZvolsJson) AS [zvols]

FOR JSON PATH, WITHOUT_ARRAY_WRAPPER

);

DECLARE @ReturnCode int;

DECLARE @Response nvarchar(max);

DECLARE @SnapshotList nvarchar(max);

SELECT @SnapshotList =

STRING_AGG(CONCAT([value], N'@', @SnapName), N';')

FROM OPENJSON(@ZvolsJson);

DECLARE @MediaDescription nvarchar(max) =

CONCAT(N'zfs|promox1|', @SnapshotList);

DECLARE @Sql nvarchar(max);

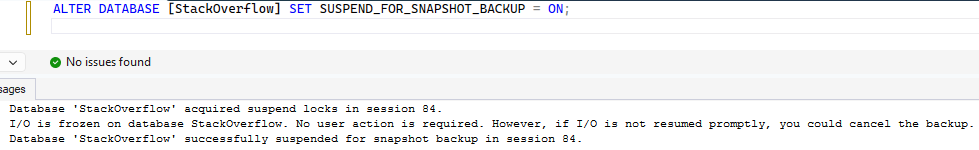

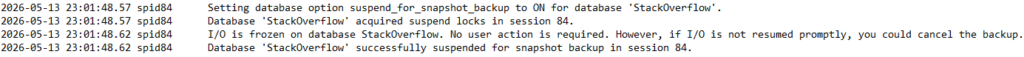

BEGIN TRY

SET @Sql =

N'ALTER DATABASE ' + QUOTENAME(@DatabaseName) +

N' SET SUSPEND_FOR_SNAPSHOT_BACKUP = ON;';

EXEC sys.sp_executesql @Sql;

EXEC @ReturnCode = sys.sp_invoke_external_rest_endpoint

@url = @Url,

@method = N'POST',

@headers = N'{"Content-Type":"application/json","Accept":"application/json"}',

@payload = @Payload,

@credential = [https://192.168.1.110:8443/v1/sql-zfs/snapshot],

@timeout = 30,

@response = @Response OUTPUT;

IF @ReturnCode <> 0

BEGIN

DECLARE @Err nvarchar(max) =

CONCAT(N'ZFS snapshot API failed. ReturnCode=', @ReturnCode, N' Response=', @Response);

THROW 51001, @Err, 1;

END;

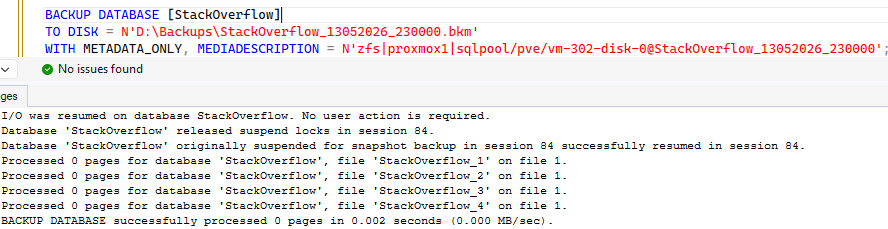

SET @Sql =

N'BACKUP DATABASE ' + QUOTENAME(@DatabaseName) + N'

TO DISK = @BackupFile

WITH METADATA_ONLY,

FORMAT,

MEDIANAME = @MediaName,

MEDIADESCRIPTION = @MediaDescription,

NAME = @BackupName;';

EXEC sys.sp_executesql

@Sql,

N'@BackupFile nvarchar(4000),

@MediaName nvarchar(128),

@MediaDescription nvarchar(max),

@BackupName nvarchar(128)',

@BackupFile = @BackupFile,

@MediaName = @SnapName,

@MediaDescription = @MediaDescription,

@BackupName = @SnapName;

SELECT

@DatabaseName AS database_name,

@SnapName AS zfs_snapshot_name,

@SnapshotList AS zfs_snapshots,

@BackupFile AS metadata_backup_file,

@MediaDescription AS media_description,

@Response AS api_response;

END TRY

BEGIN CATCH

IF DATABASEPROPERTYEX(@DatabaseName, 'IsDatabaseSuspendedForSnapshotBackup') = 1

BEGIN

SET @Sql =

N'ALTER DATABASE ' + QUOTENAME(@DatabaseName) +

N' SET SUSPEND_FOR_SNAPSHOT_BACKUP = OFF;';

EXEC sys.sp_executesql @Sql;

END;

THROW;

END CATCH

END;

GO
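The snapshot name built by the procedure follows the pattern sql_<database>_<UTC timestamp>. The same derivation can be sketched in Python to check what a given database name will produce; `snapshot_name` is a hypothetical helper that mirrors the @SafeDbName, @Stamp and @SnapName expressions, and it assumes a timezone-aware datetime.

```python
from datetime import datetime, timezone


def snapshot_name(database: str, now: datetime) -> str:
    """Mirror the T-SQL logic: sql_<SafeDbName>_<yyyymmddThhmmssZ>."""
    # Same result as CONVERT(varchar(19), ..., 126) with '-' and ':' removed, plus 'Z'.
    stamp = now.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    # Spaces become underscores, square brackets are dropped.
    safe_db = database.replace(" ", "_").replace("[", "").replace("]", "")
    return f"sql_{safe_db}_{stamp}"


ts = datetime(2025, 1, 2, 3, 4, 5, tzinfo=timezone.utc)
print(snapshot_name("StackOverflow", ts))
print(snapshot_name("[My DB]", ts))
```

Only spaces and square brackets are handled here. A database name containing other characters outside A-Z, a-z, 0-9 and `_ . : -` would still be rejected by the snapshot name check on the API side.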

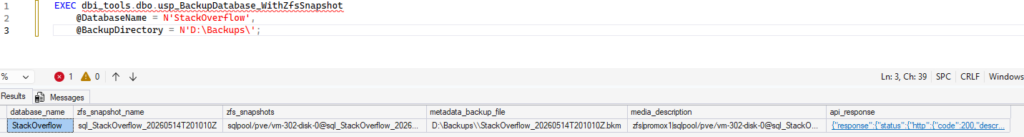

We then call the stored procedure:

EXEC dbi_tools.dbo.usp_BackupDatabase_WithZfsSnapshot

@DatabaseName = N'StackOverflow',

@BackupDirectory = N'D:\Backups\';

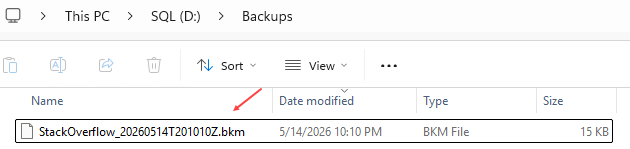

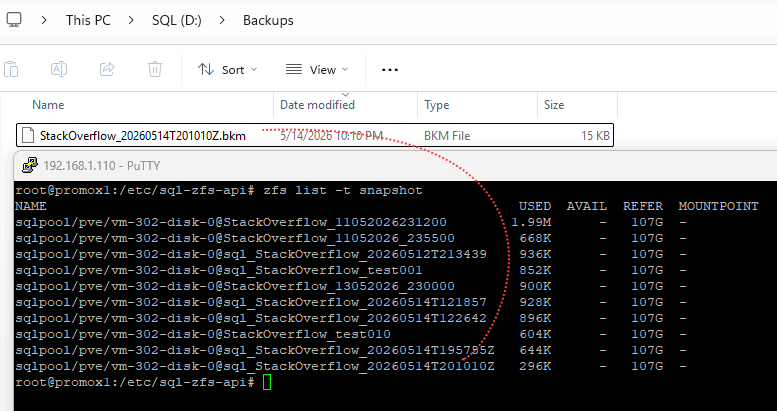

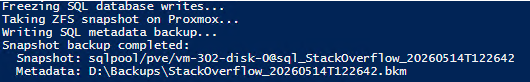

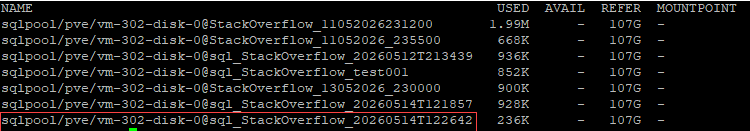

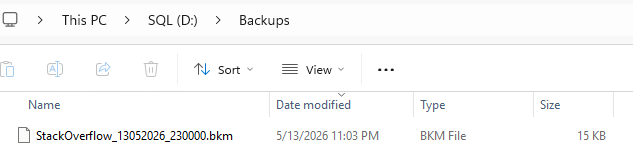

The backup was generated:

References

sp_invoke_external_rest_endpoint

Thank you. Amine Haloui

The article SQL Server Snapshot Backup and Restore with Proxmox ZFS – REST API with SQL Server 2025 (3/3) first appeared on dbi Blog.

SQL Server Snapshot Backup and Restore with Proxmox ZFS – Powershell implementation (2/3)