Re: huge CPU load after UEK upgrade

Date: Thu, 19 Aug 2021 12:56:13 +0300

Message-ID: <CA+riqSUxN82VJKY2-wR=EcnjVR_T5Bw2pGvpNkXd2wRAgeFU2g_at_mail.gmail.com>

Thanks a lot Tanel for the valuable information.

mpstat will show me 0 load for %irq , on average each core is around 15-20%

in %usr space and 35-40% in %sys space.

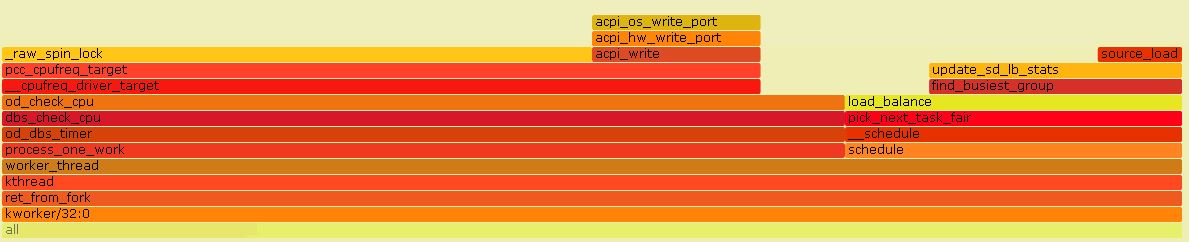

By looking into a section of FG it looks like *kworker *is the culprit

for *_raw_spin_lock

I`m somehow at a dead end as I`m not able to request a bug fix for it as

this is OL6 . Any ideas on what I can do as a workaround?

În mie., 18 aug. 2021 la 10:48, Tanel Poder <tanel_at_tanelpoder.com> a scris:

> Sorry, been swamped and haven't had time to lurk around oracle-l threads

and *and *acpi_os_write_port. *

> :-)

>

> The kworker threads do asynch I/O stuff in Linux kernel (among other

> stuff). So, when using libaio, you'd see kworkers involved in I/Os and

> apparently asmlib also ends up using kworkers under the hood (I never

> looked into asmlib in detail, but the psn/kstack output indicates kworker

> use too).

>

> I'd say it's some asmlib/kernel bug, especially if you didn't do any

> hardware changes, like this one:

>

> *Oracle Linux : OL6 Running On Exadata Observes A Particular CPU Comsumes

> High CPU Under Low System Load by kworker and ksoftirqd (Doc ID 2645898.1)

> - "Known issue with kernel version 4.1.12-124.24.3.el6uek.x86_64 and

> lower." *

>

> Sometimes various interrupt storms & issues can also cause this weird

> behavior - *mpstat* would show how much (CPU) time you spend on

> hardware/software interrupts.

>

> If you click on some of the stack sections on the very busy looking

> left-side of the *FG_UEK4_all_30seconds.jpg* screenshot, what do you see?

>

> Note that the intel_idle and related stack frames always show up anyway

> when you use perf -a, as that option reports all *CPU* time of all CPUs

> (including CPU idle time, which is not the same as a process/thread idle

> time, essentially it's CPU's time spent in

> no-work/low-power-suspend/poweroff states). This is different from perf

> record -p behavior as -p would only show on-CPU samples of a process/thread

> and not show off-CPU samples *of a process/thread*.

>

> As you see lots of *_raw_spin_lock* contention (CPU time spent to acquire

> a spinlock in that function), look up (with the FlameGraph search box, like

> I did in my blog entry), who calls that function? That would tell you which

> type of spinlock has that contention.

>

> Also the fact that you see lots of *acpi_os_write_port* calls is weird.

> As so frequently interacting with hardware via I/O ports (instead of DMA)

> so that this *acpi_os_write_port* function shows up in the top CPU users,

> also indicates some sort of a kernel<->hardware communication issue... see

> what functions are its parents, grandparents, it may provide extra insight.

>

> --

> Tanel Poder

> https://tanelpoder.com/events

>

>

> On Fri, Aug 13, 2021 at 9:54 AM Laurentiu Oprea <

> laurentiu.oprea06_at_gmail.com> wrote:

>

>> quite suspicious is that running :

>> perf record -F 99 -g a sleep 30

>> and looking in FG looks like the word "idle" is a big chunk of it.

>>

>> În vin., 13 aug. 2021 la 11:25, Laurentiu Oprea <

>> laurentiu.oprea06_at_gmail.com> a scris:

>>

>>> Thanks a lot for all the answers. Taking in order of what I tried:

>>>

>>> -> booting with acpi=off will apparently make the system see just 1 cpu

>>> core / booting with acpi=ht I`ll not have the single cpu issue but my

>>> load/slowness issue remains there -> not sure what I can explore more on

>>> this subject

>>>

>>> -> On this particular rac cluster I have around 32 databases, I observed

>>> that starting just 8 instances on the upgrade node will not generate a

>>> significant CPU load, starting 12 or more will make the situation far worse

>>> (the other 2 nodes with UEK3 are running perfectly well with all instances

>>> up) . So I started just 8 instances and I wrote a test query (simple select

>>> with group by, all significant ash time being spent on CPU) that executed

>>> on the upgraded node will run in 68 seconds while on non-upgraded node will

>>> run in 38. I sampled with psn the spid of the session while running the

>>> statement and also I sampled with perf and generated a flamegraph.

>>>

>>> From FG I might conclude that all the calls are identical from a

>>> distribution perspective is just that each call takes longer time .

>>> From PSN Looks like the majority of samples are on CPU with no kstack

>>> (-) or retin_carefull(), is just that on nodes running UEK3 is running

>>> faster so less samples are collected.

>>>

>>> I`m starting to think that there is actually a general slowness running

>>> UEK4 compared with UEK3 (and maybe seeing those kworker process is just a

>>> side effect) While the system is slow the kstack for kworkers is just:

>>> ret_from_fork() ->kthread -> worker_thread() )

>>>

>>> Basically I`m out of ideas, so any suggestions are very welcomed :)

>>>

>>> Thank you.

>>>

>>>

>>>

>>>

>>> În joi, 12 aug. 2021 la 20:29, Tanel Poder <tanel_at_tanelpoder.com> a

>>> scris:

>>>

>>>> Oh, UEK 4 is Linux kernel 4.1.x, I don't recall if that bug/problem

>>>> exists there, but you can still use the techniques from my blog entry to

>>>> drill down deeper. One of the main questions is - is your Linux system load

>>>> high due to on-CPU threads or threads in D state. perf record -g would help

>>>> you drill down more into the CPU usage and from the full stack traces you'd

>>>> see which kernel functions are trying to get that spinlock you already saw

>>>> contention on, but if it's mostly D state sleeping threads, then

>>>> /proc/PID/stack or psnapper would tell you more.

>>>>

>>>> On Thu, Aug 12, 2021 at 1:26 PM Tanel Poder <tanel_at_tanelpoder.com>

>>>> wrote:

>>>>

>>>>> Hi,

>>>>>

>>>>> Run psnapper or just sudo cat /proc/PID/stack for some of the Oracle &

>>>>> kworker processes (that are in D state) and check if you have

>>>>> *wbt_wait* in the stack. UEK 4 (kernel 4.14) has a Linux kernel write

>>>>> throttling bug/problem and lots of kworkers showing up is one of the

>>>>> symptoms. I've written about drilling down into high Linux system load in

>>>>> general here:

>>>>>

>>>>>

>>>>> https://tanelpoder.com/posts/high-system-load-low-cpu-utilization-on-linux/

>>>>>

>>>>> Incidentally, this is one of the problem scenarios I cover at the

>>>>> upcoming "troubleshooting very complex Oracle performance problems" virtual

>>>>> "conference" (where all speakers are me -

>>>>> https://tanelpoder.com/events ;-)

>>>>>

>>>>> --

>>>>> Tanel Poder

>>>>>

>>>>>

>>>>> On Thu, Aug 12, 2021 at 2:41 AM Laurentiu Oprea <

>>>>> laurentiu.oprea06_at_gmail.com> wrote:

>>>>>

>>>>>> Hello everyone,

>>>>>>

>>>>>> I know this is more of a linux question but maybe someone had a

>>>>>> similar issue.

>>>>>>

>>>>>> I started to upgrade the kernel version from UEK3 to UEK4 on servers

>>>>>> (rac 2/3 nodes) running OL6. I had no issue upgrading the kernel on virtual

>>>>>> machines.

>>>>>>

>>>>>> When I upgraded the first rac node located on physical hardware,

>>>>>> after starting the oracle instances the cpu load went crazy, the whole

>>>>>> system was far slower. For example the cpu load on the other rac nodes with

>>>>>> UEK3 was 4/3/3/ while the upgraded node load was 107/104/77 with only half

>>>>>> the instances started, this workload being created even if the instances

>>>>>> had no significant workload (they were just started)

>>>>>>

>>>>>> I observed that with the new kernel version when oracle

>>>>>> instances start there is quite a big number of kworker processes. Perf top

>>>>>> will show me as overhead

>>>>>> 17.24% [kernel] _raw_spin_lock

>>>>>> 4.50% [kernel] acpi_os_write_port

>>>>>>

>>>>>> Does anyone have any idea on what could be the issue?

>>>>>>

>>>>>> THanks a lot.

>>>>>>

>>>>>

-- http://www.freelists.org/webpage/oracle-lReceived on Thu Aug 19 2021 - 11:56:13 CEST

(image/jpeg attachment: FG_UEK4_1_kworker_section.JPG)